On April 24, DeepSeek officially launched and simultaneously open-sourced a preview version of its new model series, DeepSeek-V4. According to reports, DeepSeek-V4 has a million-word ultra-long context and leads among domestic and open-source models in agent capabilities, world knowledge, and reasoning performance.

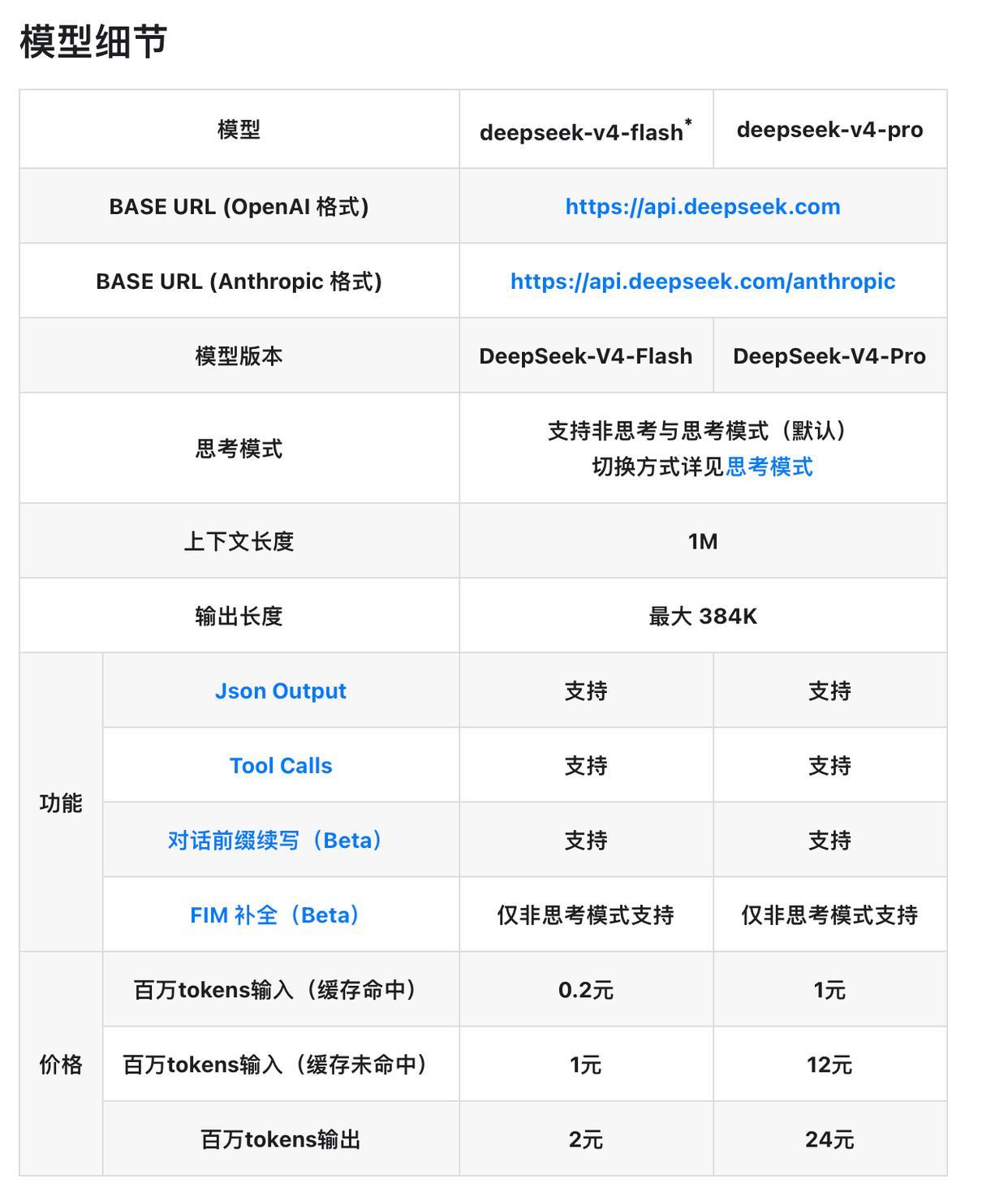

Starting today, users can log in to the official website, chat.deepseek.com, or the official app to chat with the latest DeepSeek-V4 and try its 1M ultra-long context memory. The API service has been updated at the same time; to call the new models, change model_name to deepseek-v4-pro or deepseek-v4-flash.
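As a rough illustration of switching the model name, the sketch below builds a chat request using only the Python standard library. The article does not give the endpoint or the request format, so several things here are assumptions: the URL `https://api.deepseek.com/chat/completions`, an OpenAI-style JSON body in which the model name goes in a `model` field, Bearer-token authentication, and the helper names `build_request` and `send`. Only the two model names come from the announcement.

```python
import json
import urllib.request

# Assumed endpoint; check the official API documentation before relying on it.
API_URL = "https://api.deepseek.com/chat/completions"

# Model names as given in the announcement.
MODELS = ("deepseek-v4-pro", "deepseek-v4-flash")


def build_request(model, prompt, api_key):
    """Build (but do not send) a chat request for one of the V4 models."""
    if model not in MODELS:
        raise ValueError(f"unknown model: {model!r}")
    # Assumed OpenAI-style body: the model name is carried in the "model" field.
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


def send(request):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

With a valid key, `send(build_request("deepseek-v4-flash", "Hello", api_key))` would perform the call; switching to the larger model only requires passing `"deepseek-v4-pro"` instead.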

In addition, DeepSeek-V4 has been adapted to and optimized for mainstream agent products such as Claude Code, OpenClaw, OpenCode, and CodeBuddy, improving its performance on tasks such as code and document generation. Both V4-Pro and V4-Flash support a 1M context length and offer a non-thinking mode and a thinking mode. In thinking mode, the reasoning_effort parameter can be set to high or max. For complex agent scenarios, the official recommendation is to use thinking mode with reasoning_effort set to max.
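The mode and effort settings described above could be assembled into a request body roughly as follows. This is a sketch under assumptions, not the official request format: it assumes `reasoning_effort` is a top-level field next to an OpenAI-style `model` and `messages`, and the helper names `build_payload` and `agent_payload` are hypothetical. The article does not say how thinking mode itself is switched on, so the sketch sets no field for that and only validates the effort value.

```python
# Effort levels named in the announcement.
ALLOWED_EFFORTS = ("high", "max")


def build_payload(model, messages, thinking=False, reasoning_effort=None):
    """Return a request body dict; reasoning_effort is only valid in thinking mode."""
    payload = {"model": model, "messages": list(messages)}
    if not thinking:
        if reasoning_effort is not None:
            raise ValueError("reasoning_effort only applies in thinking mode")
        return payload
    # The field that enables thinking mode is not given in the article;
    # add it here once confirmed against the official API documentation.
    if reasoning_effort is not None:
        if reasoning_effort not in ALLOWED_EFFORTS:
            raise ValueError(f"reasoning_effort must be one of {ALLOWED_EFFORTS}")
        # Assumption: reasoning_effort is a top-level request field.
        payload["reasoning_effort"] = reasoning_effort
    return payload


def agent_payload(model, messages):
    """Apply the recommended settings for complex agent scenarios."""
    return build_payload(model, messages, thinking=True, reasoning_effort="max")
```

For example, `agent_payload("deepseek-v4-pro", [{"role": "user", "content": "Refactor this module"}])` yields a body with `reasoning_effort` set to `max`, while `build_payload("deepseek-v4-flash", messages)` leaves the field out for non-thinking use.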