This time, Altman did not step forward to say something like, "The first time I tried it, I was so scared that I nearly fainted. It was like watching an atomic bomb go off." Instead, he let a group of early testers do the talking. Among them was an NVIDIA engineer who briefly lost access to GPT-5.5 after early testing and said this:

Losing GPT-5.5 is like having a limb amputated.

Jokes aside, though.

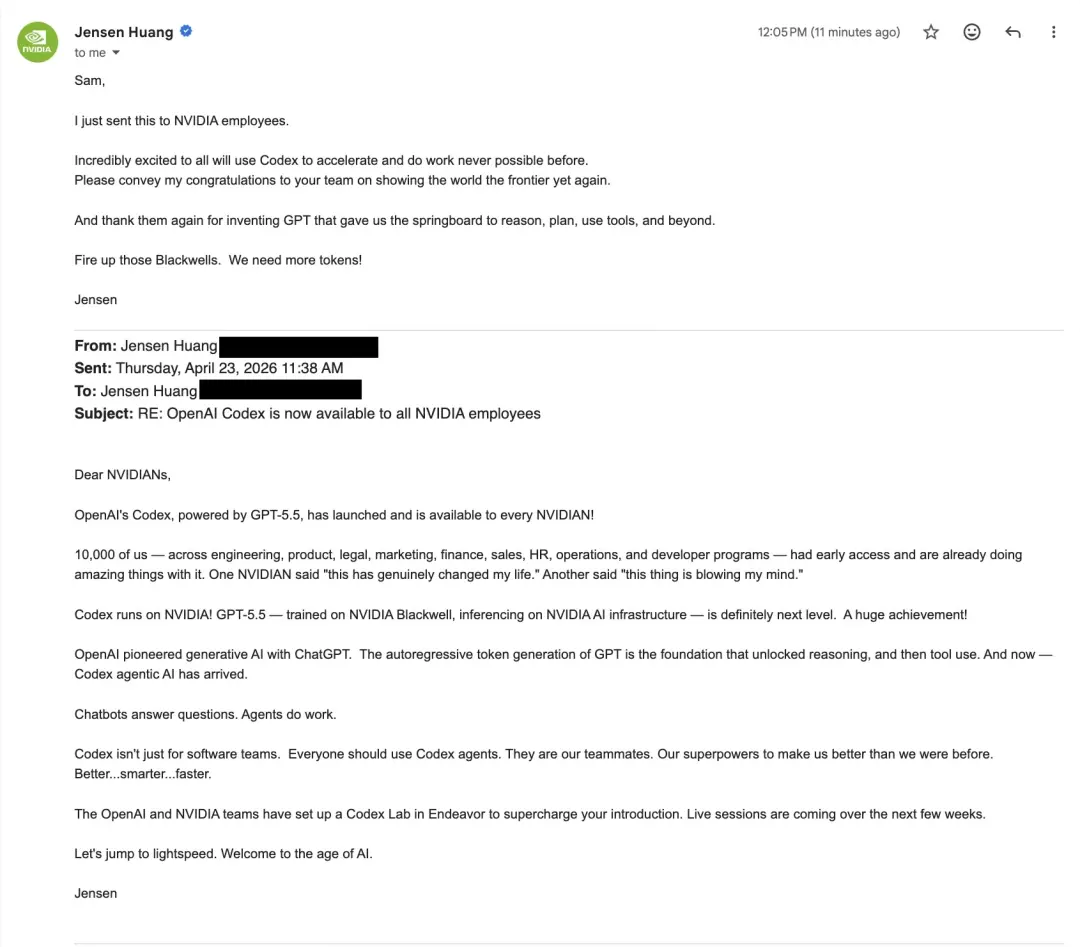

This cooperation between OpenAI and NVIDIA is unprecedented.

First, GPT-5.5 was co-designed with NVIDIA's GB200 and GB300 NVL72 systems. From training to deployment, model and hardware have shaped each other from the very start.

Second, to roll Codex out across all of NVIDIA, Altman also posted his email exchange with Jensen Huang.

Let’s look at the results of the cooperation first.

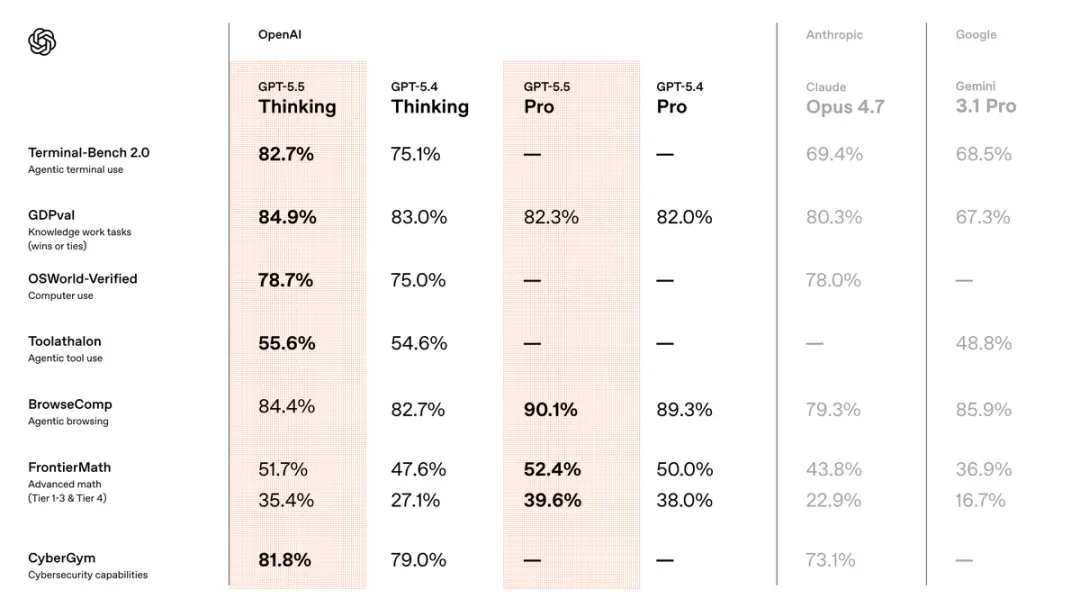

Compared with its predecessor, GPT-5.4, the new model takes the lead in all three areas: coding, knowledge work, and scientific research.

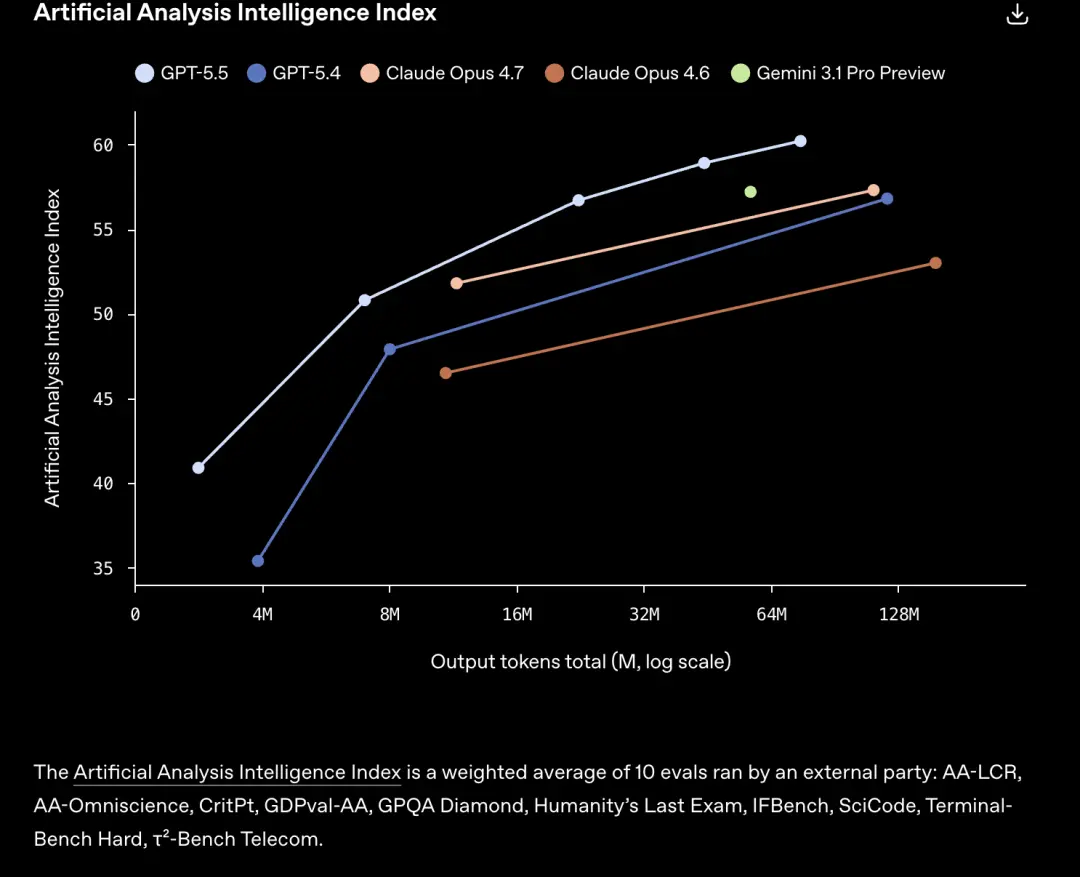

The results on the Artificial Analysis Intelligence Index, a composite benchmark, can be read two ways:

For the same score, GPT-5.5 consumes fewer tokens than Claude Opus 4.7 and other models.

Or, for the same token budget, GPT-5.5 completes more tasks.

But the most surprising thing is not the benchmark scores.

In the past, with every model upgrade, "stronger" and "slower" came almost as a package deal.

This is the price of scaling laws: a larger model, more parameters, and longer thinking time. When users pay for intelligence, they also pay in latency.

GPT-5.5 breaks this iron law.

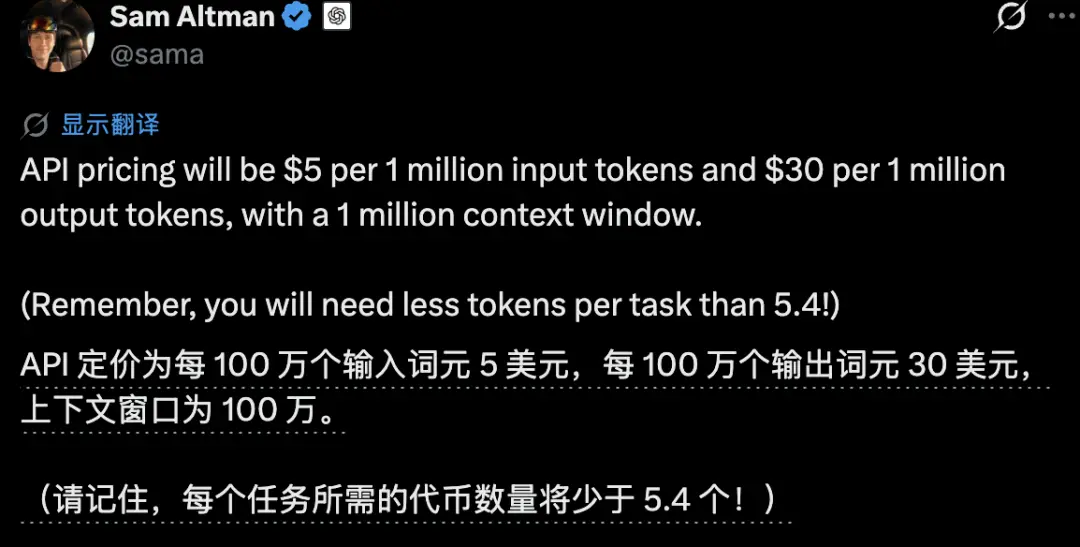

In real production serving, its per-token latency matches that of GPT-5.4, and it needs fewer tokens than GPT-5.4 to complete the same task.

It is both more efficient and more capable.

(But the price has doubled.)

As of press time, the latest Codex update already supports GPT-5.5.

The context window has also been expanded to 400K tokens.


Coding in cheat mode

Programming is the area where GPT-5.5 has improved the most.

With the previous generation, you still had to break tasks down carefully, watch each step, and be ready to correct course at any moment.

GPT-5.5 is different. You hand over the requirements, and it breaks them down, executes them, and checks its own work. You only need to review the result.

OpenAI demonstrated a 3D action game that GPT-5.5 generated in Codex, running directly in the browser.

The demo uses TypeScript and Three.js to implement the combat system, enemy encounters, and HUD feedback, along with GPT-generated environment textures.

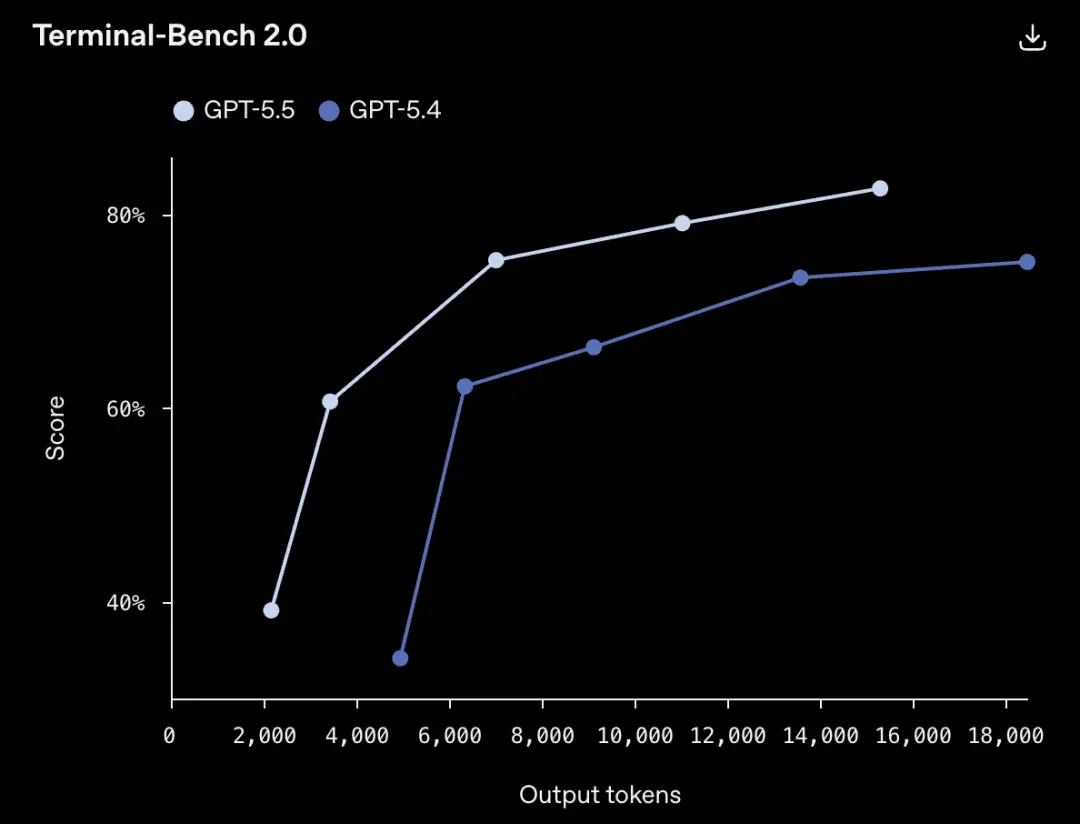

On Terminal-Bench 2.0, a demanding benchmark of complex command-line workflows, GPT-5.5 scored 82.7%.

The previous version, GPT-5.4, scored 75.1%, and the strongest current competitor, Claude Opus 4.7, scored 69.4%.

Put another way: at this level of difficulty, the previous generation got stuck on about a quarter of the tasks (24.9%), and that share has now dropped to under a fifth (17.3%).

Next, over to the early testers:

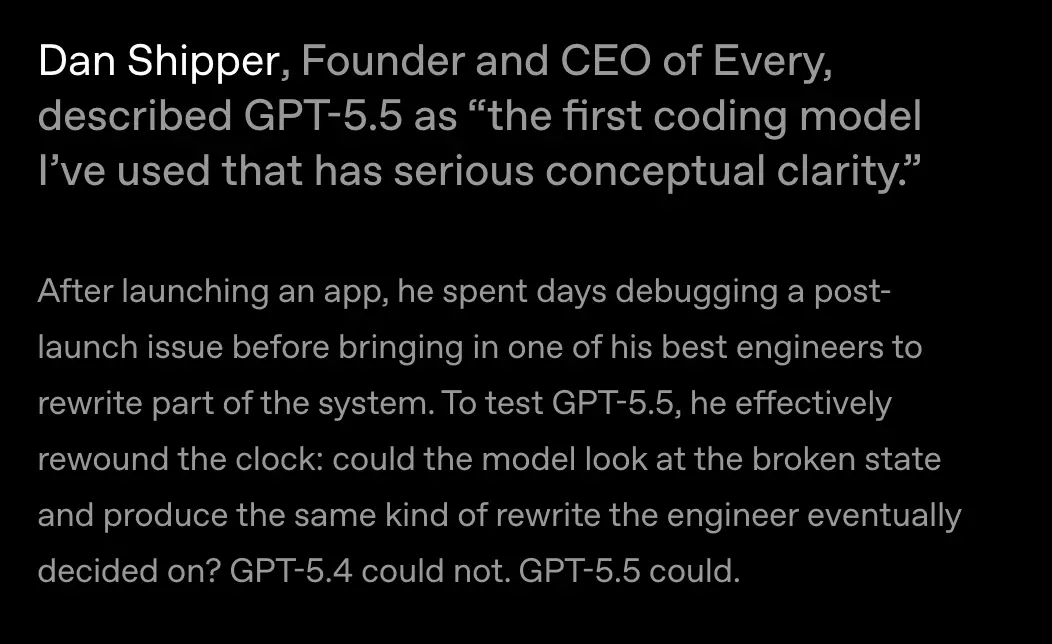

Early tester Dan Shipper, a startup CEO and active AI product developer, ran an experiment.

His app had a bug after launch, so he brought in a top engineer to refactor it. After considerable effort, the engineer arrived at a solution.

Then Shipper turned back the clock: he handed the buggy code to the model to see whether it could independently reach the same decision as the engineer.

GPT-5.4 could not. GPT-5.5 could.

Shipper said this was the first time he had experienced true "conceptual clarity" in a coding model.

It is not merely responding to a prompt; it understands the problem and works out how to solve it.

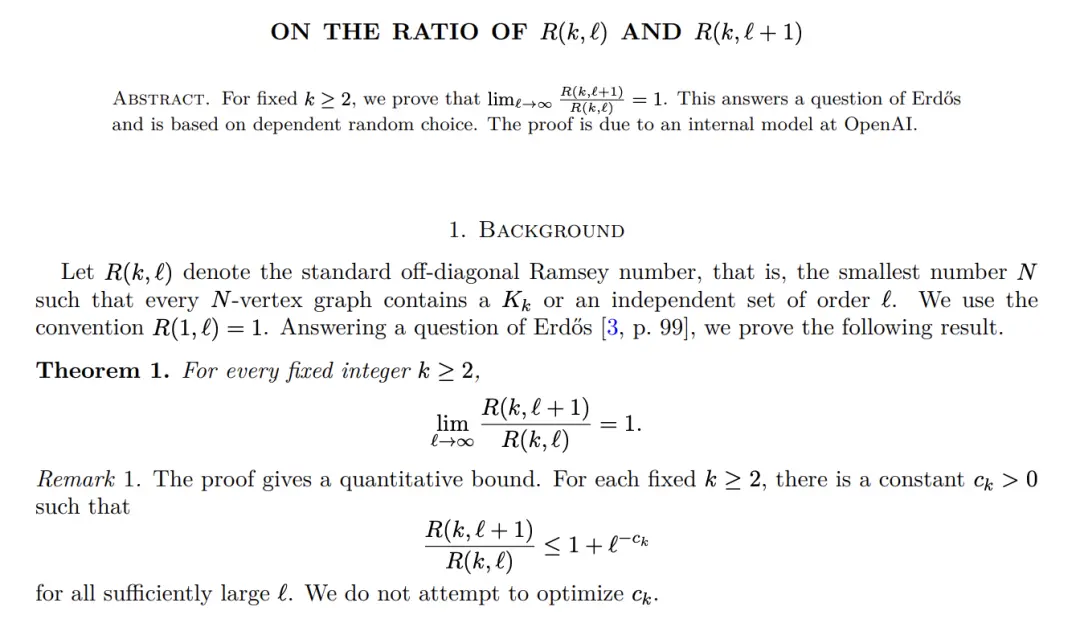

More and more senior engineers are reporting the same thing: GPT-5.5 is significantly stronger than GPT-5.4 and Claude Opus 4.7 in reasoning and autonomy.

It detects problems in advance and anticipates testing and review needs without explicit prompting.

Programming is just the beginning. The same capability leap is spreading to both knowledge work and scientific research.

Beyond programming

What GPT-5.5 does in Codex goes far beyond writing programs: it generates documents, organizes spreadsheets, and builds slide decks.

OpenAI has repeatedly stressed that it understands what you want better than the previous generation did.

More importantly, it uses tools on its own to check whether its output is correct. Give it a vague idea, and it fills in the rest.

One interesting data point: more than 85% of OpenAI’s employees use Codex every week. (What are the other 15% doing?)

Let’s look at the evaluation results first.

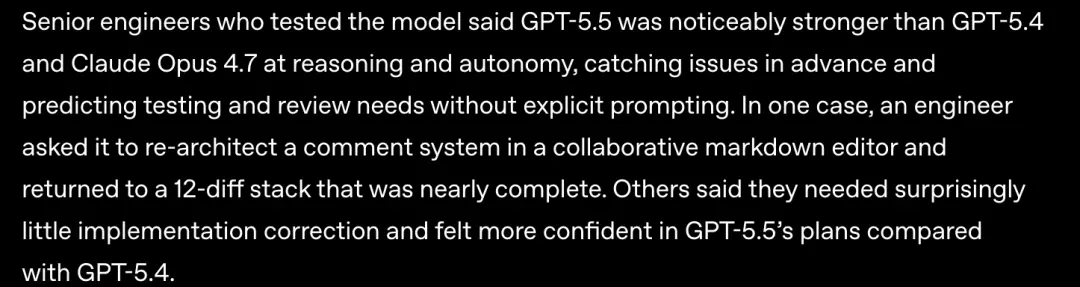

On the knowledge-work benchmark GDPval, GPT-5.5 scored 84.9%, 4.6 percentage points higher than Claude Opus 4.7.

FrontierMath Tier 4 is one of the most difficult mathematics benchmarks available today; its questions come from unpublished papers and open problems from top researchers.

GPT-5.5 Pro scored 39.6% on this test, against 22.9% for Claude Opus 4.7, roughly 1.7 times as high.

What’s really interesting is how scientists use it.

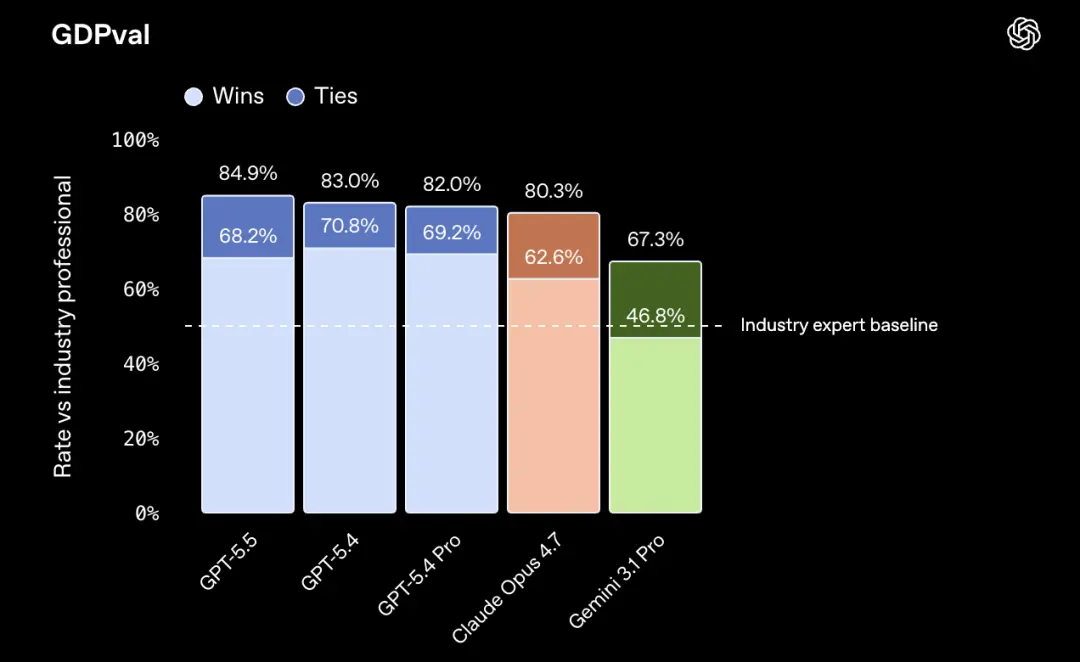

Bartosz Naskręcki is an assistant professor of mathematics at Adam Mickiewicz University in Poland. He gave Codex a one-sentence prompt, and 11 minutes later an algebraic geometry visualization app was running.

The app draws the intersection curve of two quadric surfaces, highlighted in red, and can also use the Riemann-Roch theorem to convert that curve into the standard Weierstrass form. He later extended it with more robust visualization of singularities.

One sentence, 11 minutes. In the past, just scaffolding the project would have taken half a day.

Derya Unutmaz is a professor of immunology at the Jackson Laboratory for Genomic Medicine. He used GPT-5.5 Pro to analyze a gene expression dataset of 62 samples and nearly 28,000 genes, and ended up with a complete research report.

The same work would have taken his team several months, he said.

OpenAI’s positioning of GPT-5.5 in scientific research can be summed up in one sentence: it is less a one-shot answer engine and more a "research partner".

Early testers use it for more than looking things up: they revise papers over multiple rounds, pick out gaps in the argument one by one, and have it propose new analysis plans. It keeps your entire research context in mind, and each conversation builds on the previous one.

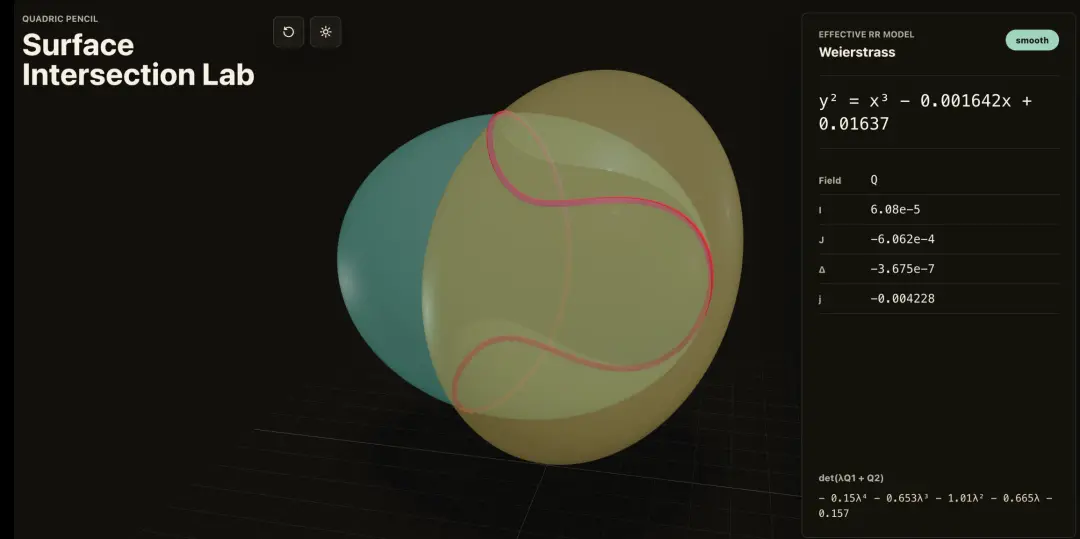

GPT-5.5 also pulled off something big in mathematics.

It concerns Ramsey numbers, one of the central topics in combinatorics.

In lay terms, the question is: how large must a network be before a certain kind of order is guaranteed to appear?

For example, among any six people, either three all know one another or three are mutual strangers. This is the simplest case of Ramsey's theorem.
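The six-person case is small enough to check by brute force. The sketch below is purely illustrative (the function names are made up here, not taken from OpenAI or the proof in question): it labels every pair among n people as acquainted (1) or strangers (0), tries all possible labelings, and tests whether some trio always shares one label. It should show that five people are not enough and six are.

```python
from itertools import combinations, product


def has_uniform_trio(n, label):
    """True if some three people are all acquainted or all strangers.

    `label` maps each pair (i, j) with i < j to 1 (acquainted) or 0 (strangers).
    """
    return any(
        label[(a, b)] == label[(a, c)] == label[(b, c)]
        for a, b, c in combinations(range(n), 3)
    )


def every_labeling_has_uniform_trio(n):
    """Exhaustively check all 2^(n choose 2) ways to label the pairs."""
    pairs = list(combinations(range(n), 2))
    return all(
        has_uniform_trio(n, dict(zip(pairs, labels)))
        for labels in product((0, 1), repeat=len(pairs))
    )


print(every_labeling_has_uniform_trio(5))  # False: five people are not enough
print(every_labeling_has_uniform_trio(6))  # True: six people always suffice
```

Exhaustive search works here only because six people give 2^15 = 32,768 labelings; the count grows so quickly that larger Ramsey numbers are out of reach this way, which is part of why the general problem is hard.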

Ramsey numbers have been a tough nut for mathematicians for decades, and the asymptotic behavior of off-diagonal Ramsey numbers has long been unresolved.

GPT-5.5 found a new proof route: it did not reproduce a known method but discovered a new one. The proof was subsequently verified in Lean, one of the most rigorous formal verification tools in mathematics.

An AI has made an original, formally verified contribution in a core area of pure mathematics.

A year ago, this would have been unthinkable.

The secret of being stronger but not slower

How do you get "stronger but not slower"?

The answer was not to optimize any single component. OpenAI tore down the entire inference system and rebuilt it from scratch.

As mentioned earlier, GPT-5.5 was co-designed with NVIDIA's GB200 and GB300 NVL72 systems. The result: at the same latency, a significant jump in intelligence.

But there is another story.

Codex, powered by GPT-5.5, analyzed several weeks of production traffic data and then wrote a heuristic algorithm for load balancing and partitioning.

Previously, each request was split into a fixed number of chunks and distributed across accelerators. But a fixed chunking strategy is not always optimal across traffic patterns: sometimes the chunks are too coarse, sometimes too fine, and resource utilization swings between high and low.

After studying those weeks of real traffic, Codex wrote an adaptive partitioning algorithm that adjusts the chunking strategy dynamically based on actual traffic patterns.
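OpenAI has not published the algorithm, so the following is only a rough sketch of what "adaptive partitioning" could look like, not the actual heuristic. Every name and parameter here (`AdaptivePartitioner`, `min_chunk`, `window`, `assign`) is hypothetical. The idea illustrated: rather than always cutting a request into a fixed number of chunks, pick the chunk count from a sliding window of recent request sizes, then place chunks on the least-loaded accelerator.

```python
import heapq
from collections import deque


class AdaptivePartitioner:
    """Hypothetical sketch: choose a per-request chunk count from recent traffic."""

    def __init__(self, num_accelerators, min_chunk=256, window=1000):
        self.num_accelerators = num_accelerators
        self.min_chunk = min_chunk          # assumed floor; smaller chunks waste overhead
        self.recent = deque(maxlen=window)  # sliding window of recent request sizes

    def observe(self, size):
        """Record the size (e.g. in tokens) of a request seen in live traffic."""
        self.recent.append(size)

    def chunk_count(self, size):
        """Aim for chunks near an even share of the typical recent request."""
        mean = sum(self.recent) / len(self.recent) if self.recent else size
        target = max(self.min_chunk, mean / self.num_accelerators)
        return max(1, min(self.num_accelerators, round(size / target)))

    def split(self, size):
        """Split a request into near-equal chunks whose sizes sum to `size`."""
        k = self.chunk_count(size)
        base, extra = divmod(size, k)
        return [base + 1 if i < extra else base for i in range(k)]


def assign(chunks, num_accelerators):
    """Greedy placement: largest chunk first, onto the least-loaded accelerator."""
    heap = [(0, i) for i in range(num_accelerators)]
    heapq.heapify(heap)
    for chunk in sorted(chunks, reverse=True):
        load, idx = heapq.heappop(heap)
        heapq.heappush(heap, (load + chunk, idx))
    return sorted(load for load, _ in heap)


partitioner = AdaptivePartitioner(num_accelerators=4)
for _ in range(10):
    partitioner.observe(4000)

print(partitioner.split(4000))  # a typical request is spread across all four
print(partitioner.split(300))   # a small request stays in one piece
```

Under a fixed four-way split, the 300-unit request in this toy example would be cut into four chunks of 75, the "too fine" case described above; the adaptive version leaves it whole. Real serving systems weigh many more factors, and this sketch makes no claim about the reported speedup.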

Token generation speed increased by more than 20%.

The model is optimizing the infrastructure it runs on: the AI is making itself run faster.

The ground-up rebuild of the inference system, combined with the model's participation in its own optimization, together produced these results.

OpenAI says this is "a step toward a new way of getting things done with computers."

But now that the model has begun optimizing the infrastructure it runs on, how far has it already gone?

One More Thing

With GPT-5.5 out, OpenAI expects the pace of model releases to accelerate.

"We see quite significant progress in the short term and extremely significant progress in the medium term."

"I think progress has been surprisingly slow over the past few years."

That is what chief scientist Jakub Pachocki said on a conference call with reporters.