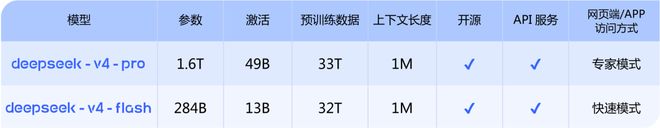

The highly anticipated DeepSeek V4 is finally here. The long-awaited preview version has just officially launched in two versions, V4-Pro and V4-Flash. The entire series comes standard with a 1M-token ultra-long context, and the model weights and technical report were open-sourced at the same time.

In the past two days, ahead of the May Day holiday, a new wave of large model releases has arrived.

At noon on April 23, "Genius Boy" Yao Shunyu delivered his first model since joining Tencent, as the Tencent Hunyuan Hy3 preview was unveiled. It uses a MoE architecture with 295 billion total parameters and 21B activated parameters, improves inference efficiency by 40%, and cuts the input price to 1.2 yuan per million tokens.

Early this morning, OpenAI launched GPT-5.5 for paying users and officially announced its API plan, which focuses on Agent workflows and multi-step task completion. The context window has been extended to 1 million tokens, and API pricing has risen as well: $5 per million input tokens and $30 per million output tokens.

On the surface, the three companies are on different paths: OpenAI takes the high-end closed-source route and keeps raising the price ceiling; Tencent plugs the model into its own ecosystem and uses cost-effectiveness to drive large-scale commercialization; DeepSeek continues its open source tradition while pushing 1M-level context into broadly affordable territory.

At the same time, three keywords appear again and again in the new models from all three companies: Agent capabilities, ultra-long context, and code and tool calls. They are all heading in the same direction: letting the model process longer information, operate autonomously across more complex task chains, and become truly embedded in the workflow.

01

DeepSeek V4's "Pragmatism"

With this release, DeepSeek has turned million-token context from a "high-end option" into a "basic standard".

Previously, 1M-level context lengths were mostly found in the high-end versions of flagship closed-source models, and the high calling cost was enough to shut out most developers and small and medium-sized enterprises.

DeepSeek's approach is very clear: both V4-Pro and V4-Flash come with a 1M context length as standard. The former targets peak performance, while the latter is the affordable option, so users with different needs are all covered. Making a core capability available across the whole lineup, rather than reserving it for the top tier, lowers the industry-wide barrier to long-text processing.

Image source: DeepSeek official website

The Flash version focuses on extremely low latency and high cost performance, and is DeepSeek's core solution for lightweight, high-frequency scenarios. With 13B activated parameters, a new token compression attention mechanism, and optimizations to the DSA sparse attention architecture, it responds extremely quickly while staying close to the core reasoning capabilities of the Pro version. For real-time dialogue, function call pipelines, and other lightweight scenarios that are sensitive to response speed, this can bring a substantial improvement in experience.

What's more critical is the competitive cost structure.

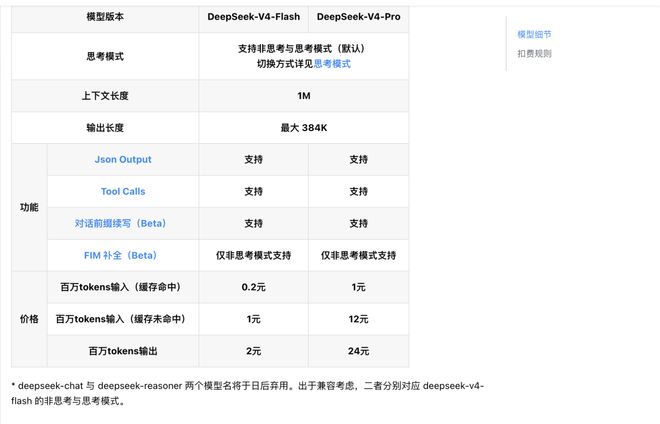

According to DeepSeek's official API pricing document, the Flash version uses tiered billing: input tokens cost as little as 0.2 yuan per million on a cache hit and 1 yuan per million on a cache miss, and output tokens are priced at 2 yuan per million.

DeepSeek V4 versions | Image source: DeepSeek API documentation

Such user-friendly pricing, combined with the 1M contextual capability that comes standard with the entire series, makes "cost per call" no longer a core constraint in engineering design - developers can prioritize product experience and architectural design without repeatedly making trade-offs between the number of calls and costs.

Flash solves the universal demand of "affordable and fast", while V4-Pro is answering another core question: how far can the boundaries of the capabilities of open source large models be pushed.

The most visible improvement still centers on long context. DeepSeek raises the model's context length directly from 128K in the previous-generation V3.2 to 1M (one million tokens). Combined with innovations in the underlying architecture, this greatly reduces the compute and GPU memory required for long context while keeping performance intact across the full context window.

At this scale, developers can directly load complete code bases, ultra-long industry documents, multi-round project files, and even complete books for end-to-end processing, without building an additional, complex retrieval-augmented generation (RAG) system, which greatly simplifies the technical pipeline for long-text processing.
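As a rough illustration of what "fits in the window" means in practice, the sketch below estimates whether a set of documents would fit in a 1M-token context. It is only a back-of-the-envelope check: the 4-characters-per-token ratio and the reserved output budget are assumptions made for this example, not properties of DeepSeek's tokenizer, and the function names are hypothetical.

```python
import math

# 1M-token context window, as stated in the article.
CONTEXT_WINDOW_TOKENS = 1_000_000


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Crude token estimate. The chars-per-token ratio is an assumed
    heuristic, not the real tokenizer; use the actual tokenizer for
    anything that matters."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(
    documents: list[str],
    reserved_output_tokens: int = 8_000,  # assumed budget for the reply
    chars_per_token: float = 4.0,
) -> tuple[bool, int]:
    """Return (fits, estimated_input_tokens) for the given documents."""
    total = sum(estimate_tokens(doc, chars_per_token) for doc in documents)
    return total + reserved_output_tokens <= CONTEXT_WINDOW_TOKENS, total
```

If the estimate lands near the limit, the heuristic is too coarse to trust, and counting with the real tokenizer is the safer choice.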

In terms of underlying architecture, the Pro version uses a MoE architecture with 1.6T total parameters and 49B activated parameters. The pre-training data volume reaches 33T, a comprehensive deepening of DeepSeek's mixture-of-experts route. Official evaluation data shows that on core reasoning benchmarks such as mathematics, STEM, and competition-level code, it surpasses all currently publicly evaluated open source models and is comparable to the world's top closed-source models.

In terms of Agent capabilities, its delivery quality is close to Claude Opus 4.6 in non-thinking mode, internal feedback rates it above Anthropic's Sonnet 4.5, and it has become the main Agentic Coding tool for DeepSeek's own employees.

At the functional level, both versions of the V4 series support a non-thinking mode and a thinking mode, and developers can adjust the thinking intensity through the reasoning_effort parameter. Both versions also fully support JSON Output, Tool Calls, and conversation prefix continuation.
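To make these options concrete, here is a minimal sketch of a request body that sets the thinking intensity and asks for JSON output. It assumes the payload follows the OpenAI Chat Completions layout (the article says the API is compatible with that format). The value "high" for reasoning_effort and the response_format shape are assumptions for illustration, and build_chat_request is a hypothetical helper, so check the official API documentation before relying on any of them.

```python
import json
from typing import Any, Optional


def build_chat_request(
    model: str,
    user_message: str,
    reasoning_effort: Optional[str] = None,  # accepted values are an assumption
    json_output: bool = False,
    tools: Optional[list[dict[str, Any]]] = None,
) -> dict[str, Any]:
    """Build an OpenAI-style chat completion payload (no network call)."""
    payload: dict[str, Any] = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    if reasoning_effort is not None:
        payload["reasoning_effort"] = reasoning_effort
    if json_output:
        # Assumed to match the OpenAI-style JSON mode switch.
        payload["response_format"] = {"type": "json_object"}
    if tools:
        payload["tools"] = tools
    return payload


request = build_chat_request(
    model="deepseek-v4-flash",
    user_message="Summarize this changelog as JSON.",
    reasoning_effort="high",
    json_output=True,
)
print(json.dumps(request, indent=2))
```

Keeping the payload construction separate from the HTTP call makes it easy to log or unit-test the exact parameters before sending anything.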

In terms of pricing, the Pro version also continues the cost-effective route. The official pricing is 1 yuan per million input tokens on a cache hit, 12 yuan per million input tokens on a cache miss, and 24 yuan per million output tokens, significantly lower than overseas flagship closed-source models of the same tier.
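The quoted prices can be turned into a quick per-call estimate. The sketch below uses only the Flash and Pro prices cited in this article. The "v4-flash" and "v4-pro" keys are labels chosen for this example rather than official model identifiers, and the single cache_hit_ratio is a simplification of however the real billing attributes cached tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Prices in yuan per million tokens, as quoted in the article."""
    cache_hit_input: float
    cache_miss_input: float
    output: float


# Illustrative labels, not official model identifiers.
PRICING = {
    "v4-flash": Pricing(cache_hit_input=0.2, cache_miss_input=1.0, output=2.0),
    "v4-pro": Pricing(cache_hit_input=1.0, cache_miss_input=12.0, output=24.0),
}


def estimate_cost_yuan(
    version: str,
    input_tokens: int,
    output_tokens: int,
    cache_hit_ratio: float = 0.0,
) -> float:
    """Estimate the cost of one call in yuan.

    cache_hit_ratio is the assumed fraction of input tokens served
    from cache (0.0 = all misses, 1.0 = all hits).
    """
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    price = PRICING[version]
    hit_tokens = input_tokens * cache_hit_ratio
    miss_tokens = input_tokens - hit_tokens
    total = (
        hit_tokens * price.cache_hit_input
        + miss_tokens * price.cache_miss_input
        + output_tokens * price.output
    )
    return total / 1_000_000


# A full 1M-token prompt with no cache hits and no output tokens:
print(estimate_cost_yuan("v4-flash", 1_000_000, 0))
print(estimate_cost_yuan("v4-pro", 1_000_000, 0))
```

At these prices a fully uncached 1M-token prompt costs 1 yuan of input on Flash and 12 yuan on Pro, which is the arithmetic behind the article's point about per-call cost.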

The barrier to API access is also extremely low. Developers do not need to change their existing base_url; they only need to set the model parameter to the corresponding version name. The API is also compatible with the OpenAI ChatCompletions and Anthropic interface formats.

This combination of "more capability, lower cost" means top-tier large model capabilities are no longer the exclusive resource of a few vendors. As the industry slides into a vicious cycle of parameter arms races, DeepSeek offers a new template for making large models broadly accessible, with million-token context as standard and fully open source options across the stack.

At the same time, DeepSeek V4 has made special adaptations and optimizations for mainstream Agent products such as Claude Code, OpenClaw, OpenCode, and CodeBuddy, and its performance has been improved in actual scenarios such as coding tasks and document generation. The value of the model must ultimately be tested in real development and work processes.

02

Continuing open source, with the API fully open

DeepSeek continues the open source route and directly opens all API calls.

The model weights of DeepSeek-V4 are now available for download on Hugging Face and ModelScope, and the accompanying technical report has also been published, so developers can deploy locally and build on top of the model.

Unlike the practice at some vendors of "open-sourcing a stripped-down version and keeping the full version closed", the two open source versions retain all the capabilities of the official cloud API, including the non-thinking/thinking dual mode, lossless 1M ultra-long context processing, Agent-specific optimizations, and full tool calling, with no features removed.

This means that small and medium-sized startups, individual developers, and research institutions alike can obtain a large model base with million-token context, top-level reasoning, and Agent capabilities with no barrier to entry, and no longer need to pay high closed-source API fees for high-end model capabilities.

To further lower the barrier to adoption, DeepSeek has simultaneously open-sourced a full tool chain for model fine-tuning, quantization, and inference acceleration, and has completed Day 0 native adaptation for mainstream inference frameworks such as vLLM and TGI and mainstream Agent frameworks such as LangChain and LlamaIndex. It has also opened up a full-stack deployment solution for domestic computing platforms, so developers can quickly ship applications on different hardware.

DeepSeek has also laid out a clear transition plan: the legacy API model names deepseek-chat and deepseek-reasoner will be retired in three months (July 24, 2026). For now, these two names point to the non-thinking mode and thinking mode of deepseek-v4-flash respectively, leaving developers enough time to migrate smoothly.
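For teams planning that migration, the mapping above can be captured in a small lookup. This is only a sketch built from the facts stated in the article: the boolean "thinking" flag is a hypothetical way to record which mode a legacy name implied, since the article does not say how thinking mode is selected in a request, and the function names are invented for this example.

```python
from datetime import date
from typing import Optional

# Retirement date for the legacy model names, as given in the article.
LEGACY_RETIREMENT_DATE = date(2026, 7, 24)

# legacy name -> (current model name, whether thinking mode was implied)
LEGACY_MODEL_MAP = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def resolve_model(name: str) -> tuple[str, Optional[bool]]:
    """Map a legacy model name to its current equivalent.

    Names that are not legacy aliases pass through unchanged, with
    None for the thinking flag (nothing can be inferred for them).
    """
    if name in LEGACY_MODEL_MAP:
        return LEGACY_MODEL_MAP[name]
    return name, None


def days_until_retirement(today: date) -> int:
    """Days remaining before the legacy names stop working."""
    return (LEGACY_RETIREMENT_DATE - today).days


print(resolve_model("deepseek-reasoner"))
print(days_until_retirement(date.today()))
```

Routing every model name through one function like this keeps the eventual cutover to a single code change.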

03

Determined to make AI an "infrastructure model"

Looking at the releases of the past two days, one trend is clear: every company is accelerating its push on Agent capabilities.

Over the past two years, attention from the public and the capital markets has largely focused on how "smart" large models are, but it has now shifted to "who can get things done more reliably." The focus of the GPT-5.5 release is not how much multi-modal understanding has improved, but the model's sustained execution in scenarios such as Agent programming, computer use, and knowledge work. The core selling point of Tencent Hunyuan Hy3 is likewise its "ability to act" in the real world. DeepSeek V4 focuses directly on Agent capabilities and long context processing, with real workloads as the clear target.

Behind this change is the fact that the entire industry is moving towards competition in "model utility". Nowadays, users and enterprise customers are less and less concerned about where your model ranks in a certain evaluation. What they care about is how much work the model and product can help them do: whether this model can help me write code, whether it can handle complex documents, whether it can perform multi-step tasks without errors, and whether it can run at a reasonable cost.

Image source: DeepSeek official website

At the end of the article it published today, DeepSeek quotes a line from "Xunzi": "Don't be tempted by praise, don't be afraid of slander, follow the path, and be upright", reaffirming its commitment to its own technical route. In the current climate of large model competition, the meaning is clear: don't be distracted by outside judgment and noise, and focus on doing things right.

DeepSeek's actions over the past year or so have indeed followed this logic: using open source and openness to build influence in the global developer ecosystem, using extreme cost-effectiveness to break down the barriers to high-end AI capabilities, and using solid innovation in the underlying architecture to solve the most real pain points of developers and enterprise users.

From the emergence of the R1 reasoning model to V4 bringing long context into affordable range for the first time, DeepSeek has been doing something harder in a relatively "slow" way: turning top model capabilities from a tool for a few into infrastructure that more people can call directly.