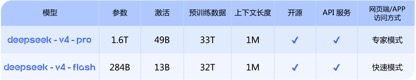

The preview version of DeepSeek-V4 has finally been released. Today, DeepSeek officially announced the release and open-sourcing of two models, deepseek-v4-pro and deepseek-v4-flash, both with a one-million-token ultra-long context. Starting today, users can log in to the official website or app to chat with DeepSeek-V4 and try out its 1M (one-million-token) context memory. The API service has been updated at the same time.

Article | "BUG" column, Zhou Wenmeng

According to official benchmark results, DeepSeek-V4's performance in context length, knowledge, reasoning, and agent capabilities is comparable to top international closed-source models and ranks among the best open-source models worldwide. A comparison by the "BUG" column found that on API pricing, DeepSeek, which single-handedly set off price cuts across China's large-model industry last year, has once again set the industry's lowest price with V4.

"Although its price per million tokens is not much lower than other domestic models, the long context and strong performance give it a very competitive edge!" one industry insider told the "BUG" column, adding: "The large-model price butcher is back!"

Performance comparable to top closed-source models, leading in knowledge and reasoning

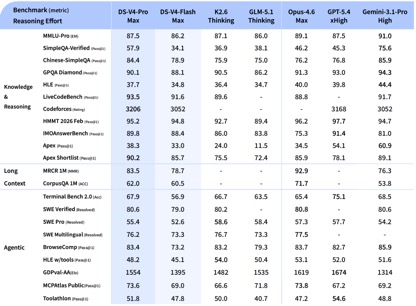

According to DeepSeek's official introduction, the V4 series includes two models: DeepSeek-V4-Pro, with 1.6T total parameters, 49B activated parameters, and 33T tokens of pre-training data; and DeepSeek-V4-Flash, with 284B total parameters, 13B activated parameters, and 32T tokens of pre-training data. Both natively support a 1-million-token context.
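The gap between total and activated parameters reflects a mixture-of-experts (MoE) design, in which each token is routed through only a subset of the weights. A quick back-of-the-envelope sketch using only the figures quoted above shows how small that subset is. The FLOPs estimate relies on the common rule of thumb of roughly 2 FLOPs per active parameter per token; it is an approximation, not a DeepSeek figure, and the helper names are hypothetical.

```python
# Parameter counts quoted in the announcement, in billions (total vs. activated per token).
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def activation_ratio(total_b: float, active_b: float) -> float:
    """Fraction of all parameters that are activated for each token."""
    return active_b / total_b


def approx_forward_gflops_per_token(active_b: float) -> float:
    """Rule-of-thumb estimate: about 2 FLOPs per active parameter per token.

    Attention cost, which grows with context length, is ignored, so this is
    only a rough lower bound and not a published figure.
    """
    return 2 * active_b  # billions of parameters * 2 FLOPs = GFLOPs


if __name__ == "__main__":
    for name, p in MODELS.items():
        ratio = activation_ratio(p["total_b"], p["active_b"])
        gflops = approx_forward_gflops_per_token(p["active_b"])
        print(f"{name}: {ratio:.1%} of parameters active, ~{gflops:.0f} GFLOPs/token")
```

On these numbers, Pro activates about 3.1% of its parameters per token and Flash about 4.6%.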

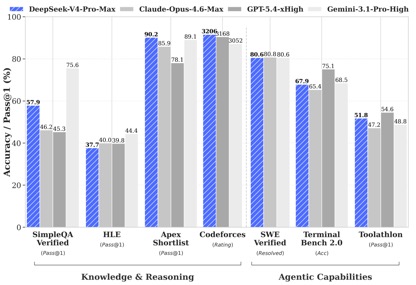

According to benchmark data published by DeepSeek, in knowledge and reasoning tests DeepSeek-V4-Pro-Max achieved the best results on Apex Shortlist and Codeforces, surpassing Claude-Opus-4.6-Max, GPT-5.4-xHigh, Gemini-3.1-Pro-High, and other international models, demonstrating very strong logic and algorithmic capabilities. On SimpleQA Verified, it trails Gemini-3.1-Pro-High slightly but is ahead of Claude and GPT.

In the agentic capability evaluation, V4, Opus-4.6, and Gemini-3.1-Pro were tied on SWE Verified; DeepSeek ranked second only to GPT-5.4-xHigh on Toolathlon and outperformed Opus-4.6 on Terminal Bench 2.0, reflecting its strengths in complex instruction execution and tool calling.

DeepSeek-V4 is already the agentic coding model used internally by DeepSeek employees. According to their evaluation feedback, the experience is better than Sonnet 4.5, and delivery quality is close to Opus 4.6 in non-thinking mode.

In mathematics, STEM, and competitive programming evaluations, DeepSeek-V4-Pro outperformed most publicly evaluated open-source models and achieved results comparable to the world's top closed-source models.

Overall, DeepSeek-V4 leads domestic open-source models across the board in knowledge processing and reasoning, and is comparable to leading international models. In agentic capabilities, however, although DeepSeek-V4 has improved considerably, it has not pulled clearly ahead of the international first tier; each side leads on different tasks.

1M context as "standard": the "price butcher" is back

Beyond the performance gains shown across benchmarks, the biggest highlights of the V4 release are its breakthrough in long-context capability and a further cut in API prices.

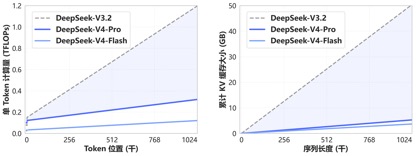

Thanks to a new attention mechanism pioneered in DeepSeek-V4, which compresses along the token dimension and combines this with DSA (DeepSeek Sparse Attention), V4 achieves world-leading long-context capability. Compared with traditional methods, it significantly reduces compute and GPU-memory requirements, making a 1M (one-million-token) context standard across all official DeepSeek services.
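The article does not describe how DeepSeek's mechanism works internally, so the following is only a generic toy illustration of the two ideas it names, not DeepSeek's implementation. Keys and values are first compressed along the token dimension by mean-pooling fixed-size blocks (an assumed compression scheme), and a single query then attends only to the `top_k` highest-scoring compressed blocks. The function `sparse_attention` and its `block` and `top_k` parameters are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def mean_pool(vectors: List[Vector], block: int) -> List[Vector]:
    """Compress the token dimension by averaging every `block` consecutive vectors.

    Mean-pooling is an assumed, illustrative compression scheme.
    """
    pooled = []
    for start in range(0, len(vectors), block):
        chunk = vectors[start:start + block]
        pooled.append([sum(col) / len(chunk) for col in zip(*chunk)])
    return pooled


def sparse_attention(query: Vector, keys: List[Vector], values: List[Vector],
                     block: int = 4, top_k: int = 2) -> Vector:
    """Hypothetical toy: one query attends to the top_k compressed blocks only."""
    k_c = mean_pool(keys, block)    # compressed keys: ceil(N / block) rows
    v_c = mean_pool(values, block)  # compressed values, aligned with k_c
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, k) * scale for k in k_c]
    # Keep only the indices of the top_k highest-scoring blocks.
    keep = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax restricted to the kept blocks (subtract the max for stability).
    m = max(scores[i] for i in keep)
    weights = {i: math.exp(scores[i] - m) for i in keep}
    total = sum(weights.values())
    out = [0.0] * len(v_c[0])
    for i, w in weights.items():
        for d, v in enumerate(v_c[i]):
            out[d] += (w / total) * v
    return out


if __name__ == "__main__":
    # 16 tokens with 2-dimensional keys/values.
    keys = [[math.cos(t), math.sin(t)] for t in range(16)]
    values = [[float(t), 1.0] for t in range(16)]
    print(sparse_attention([1.0, 0.0], keys, values, block=4, top_k=2))
```

With 16 tokens and `block=4`, the query scores only 4 compressed positions and mixes just the top 2 of them instead of attending to all 16 tokens. That shrinking of the attended set is the intuition behind the compute and memory savings; real systems are considerably more involved.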

A year ago, a 1-million-token context was Gemini's exclusive trump card. Even among the mainstream domestic open-source models released recently, context lengths mostly fall in the 128K-200K range. DeepSeek has turned the million-token context from a "high-end closed-source feature" into a standard feature of open-source models.

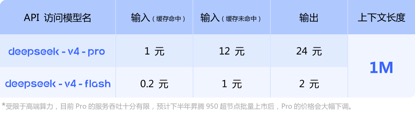

On API pricing, GLM-5.1 currently charges 1.3-2 yuan per million input tokens (cache hit) and Kimi-K2.6 charges 1.1 yuan per million input tokens (cache hit), while DeepSeek-V4-Pro and DeepSeek-V4-Flash charge 1 yuan and 0.2 yuan per million input tokens respectively. Although the price cuts are modest, both are the lowest in this comparison, and the context length has grown several times over.
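To put the per-million-token input prices above in concrete terms, here is a small cost calculator. It uses only the input prices quoted in this article (taking the low end of GLM-5.1's 1.3-2 yuan range); output-token prices and cache-miss prices are not covered, and the 50-million-token monthly workload is a hypothetical example.

```python
# Input prices quoted in the article, in yuan per million input tokens.
# GLM-5.1 and Kimi-K2.6 figures are cache-hit prices; for GLM-5.1 the low end
# of the quoted 1.3-2 yuan range is used.
INPUT_PRICE_YUAN_PER_M = {
    "DeepSeek-V4-Flash": 0.2,
    "DeepSeek-V4-Pro": 1.0,
    "Kimi-K2.6": 1.1,
    "GLM-5.1": 1.3,
}


def input_cost_yuan(tokens: int, price_per_million: float) -> float:
    """Cost of `tokens` input tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


if __name__ == "__main__":
    monthly_tokens = 50_000_000  # hypothetical workload: 50M input tokens per month
    for model, price in sorted(INPUT_PRICE_YUAN_PER_M.items(), key=lambda kv: kv[1]):
        print(f"{model:<18} {input_cost_yuan(monthly_tokens, price):8.2f} yuan")
```

Because prices are quoted per million tokens, filling V4-Flash's full 1M-token context once costs 0.2 yuan in input fees under these figures.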

(API pricing for the DeepSeek-V4 series)


(API pricing for Kimi-K2.6)

(API pricing for GLM-5.1)

"The performance breakthrough of DeepSeek-V4 is less striking than that of DeepSeek-R1. Its performance is still in the first tier, but its lead has not been fully extended," said one industry insider. "The V4 release is more about improving long-context capability and further cutting prices."

This person added: "After DeepSeek released V3 and R1, the performance advantages from its underlying technical innovations directly pushed the entire domestic large-model industry into collective price cuts. Although V4's price per million tokens is not much lower than its domestic peers, it remains competitive. The large-model price butcher is back!"

Huawei computing power to come online in batches in the second half of the year; Pro price to drop significantly

It is worth noting that at the bottom of DeepSeek-V4's API pricing information, the company added a specific note: "Limited by high-end computing power, Pro's current service throughput is very limited. Once Ascend 950 super nodes launch at scale in the second half of the year, Pro's price is expected to drop significantly."

This means that the V4 series has already been adapted for Huawei's Ascend 950 super nodes. Once the Ascend 950 launches at scale, most users will be able to run DeepSeek-V4, a model comparable to top international closed-source models, on domestic computing power.

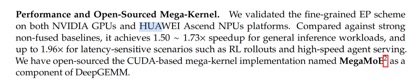

In its official open-source technical documentation, DeepSeek also addressed this, stating that V4's fine-grained EP (expert parallelism) scheme has been validated on both NVIDIA GPUs and Huawei Ascend NPUs. Compared with a strong non-fused baseline, it delivers a 1.50-1.73x speedup on general inference workloads and 1.96x in latency-sensitive scenarios (such as RL rollouts and high-speed agent serving).

After the V4 release, Huawei Ascend also announced that "the entire range of super node products supports the DeepSeek V4 series models." According to reports, Ascend 950 reduces attention computation and memory-access overhead through fused kernels and multi-stream parallelism, greatly improving inference performance, and combines multiple quantization algorithms to deliver high-throughput, low-latency inference deployment of DeepSeek V4.

Earlier this month, in an exclusive interview with Dwarkesh Patel, NVIDIA founder Jensen Huang said: "If DeepSeek is released on the Huawei platform first, it will be disastrous for our country (the United States)." In Huang's view, although DeepSeek is open source and can also run on NVIDIA products, if it is optimized specifically for Huawei's computing power, NVIDIA will be at a disadvantage because of constraints such as restrictions on purchasing high-end computing power.

Now it seems that although DeepSeek has also validated its EP scheme on NVIDIA hardware, what Jensen Huang feared has happened anyway. As one industry insider put it: "V4 is a product forced by computing-power constraints. Over the next year, domestic large models running on domestic chips will gradually mature."

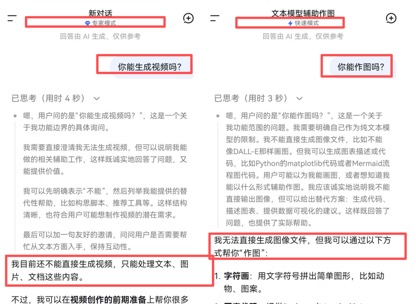

Multimodal capabilities have yet to appear

Unfortunately, DeepSeek V4 is still a text-only model, without multimodal capabilities such as text-to-image or text-to-video generation. This makes it considerably harder for ordinary users to quickly try out and evaluate the model.

After all, as large language models keep improving and hallucination rates gradually fall, simple one-off knowledge Q&A can hardly reflect a model's overall capabilities objectively. Most users who want an intuitive sense of V4's capabilities will have to download it and use it themselves for a while.

Alongside the V4 release, reports have also emerged that DeepSeek plans to raise 50 billion yuan. People close to DeepSeek said its pre-money valuation is 300 billion yuan, approximately US$44 billion. Tencent Holdings and Alibaba Group are currently negotiating to invest in DeepSeek. However, DeepSeek has not responded directly to media inquiries about the financing.

Perhaps, for DeepSeek founder Liang Wenfeng, using the V4 release to raise funds in a timely way and strengthen the company is a wise move at a time when growth in the "intelligence" of global large models is slowing, competition for talent is intensifying, and the industry's shift toward multimodal and agentic capabilities is increasingly pronounced.