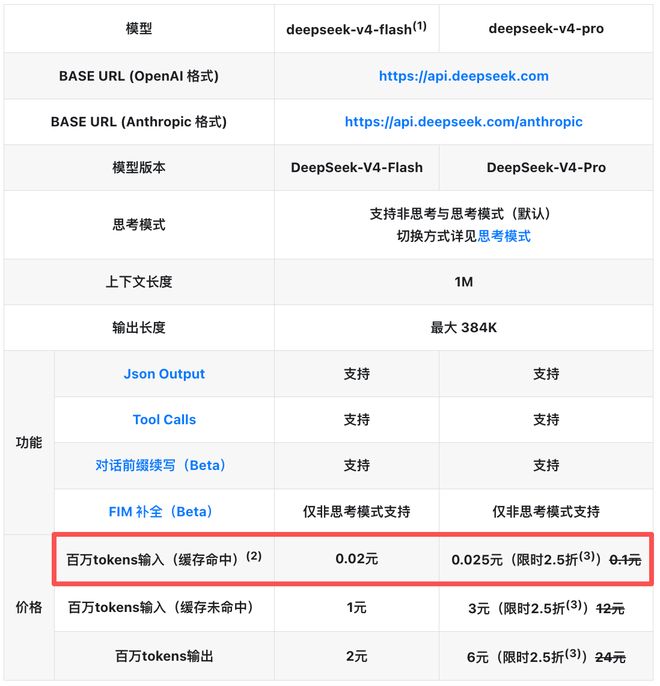

Last night, DeepSeek-V4 cut its prices again: the input cache-hit price for both models in the series dropped to 1/10 of the initial price. After the latest adjustment, the price per million input tokens (cache hit) is 0.02 yuan for DeepSeek-V4-Flash and 0.025 yuan for DeepSeek-V4-Pro.

On the evening of April 25, DeepSeek announced a 75% price cut for DeepSeek-V4-Pro. Currently, DeepSeek-V4-Pro's input price (cache miss) is 3 yuan per million tokens and its output price is 6 yuan per million tokens. This promotion runs until 23:59 on May 5.

The other prices of DeepSeek-V4-Flash remain unchanged: the input price (cache miss) is 1 yuan per million tokens and the output price is 2 yuan per million tokens.
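To make these figures concrete, here is a minimal sketch that estimates the cost of a workload from the per-million-token prices quoted above. The function name `estimate_cost_yuan` and the example token counts are illustrative assumptions, not part of any DeepSeek API, and the Pro prices are the promotional ones that apply only until May 5.

```python
# Prices in yuan per million tokens, as quoted in this article.
# The Pro cache-miss and output prices are the limited-time promotional prices.
PRICES = {
    "DeepSeek-V4-Flash": {"cache_hit": 0.02, "cache_miss": 1.0, "output": 2.0},
    "DeepSeek-V4-Pro": {"cache_hit": 0.025, "cache_miss": 3.0, "output": 6.0},
}

TOKENS_PER_UNIT = 1_000_000  # prices are quoted per million tokens


def estimate_cost_yuan(model, cache_hit_tokens, cache_miss_tokens, output_tokens):
    """Hypothetical helper: total cost in yuan for one workload."""
    price = PRICES[model]
    return (
        cache_hit_tokens * price["cache_hit"]
        + cache_miss_tokens * price["cache_miss"]
        + output_tokens * price["output"]
    ) / TOKENS_PER_UNIT


# Assumed workload (not from the article): 8M cached input tokens,
# 2M uncached input tokens, 1M output tokens.
workload = dict(
    cache_hit_tokens=8_000_000,
    cache_miss_tokens=2_000_000,
    output_tokens=1_000_000,
)

for model in PRICES:
    print(f"{model}: {estimate_cost_yuan(model, **workload):.2f} yuan")
```

In a workload like this one, where most input tokens hit the cache, the bill is dominated by cache-miss input and output tokens, so the real saving from the cache-hit price cut depends heavily on each application's actual hit rate.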

After the price reduction, DeepSeek holds an even larger price advantage over other domestic large-model companies:

▲ Price comparison of models from domestic large-model companies

Some Weibo users analyzed their own usage data and found that DeepSeek's price reduction could cut their costs by 73%.

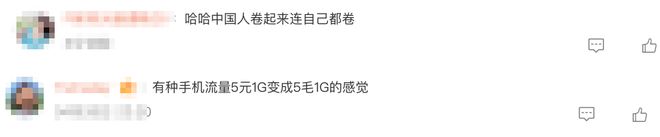

Some Weibo netizens remarked, "It feels like mobile data going from 5 yuan per GB to 5 cents per GB."

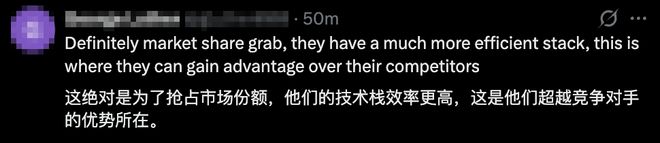

Some netizens speculated in replies to a post from DeepSeek's X account that DeepSeek is grabbing market share because it has a technical advantage.

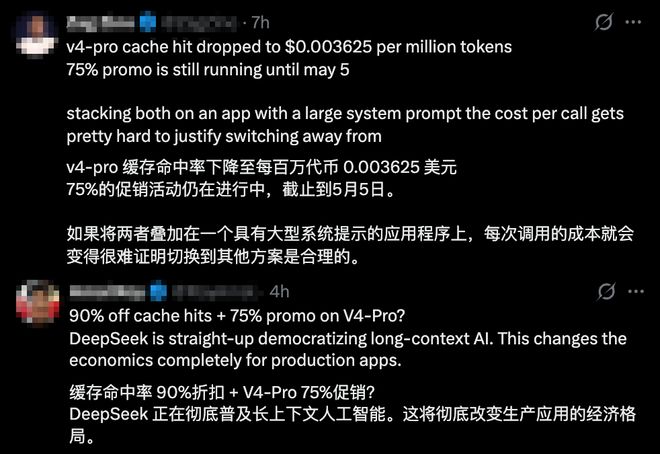

Some netizens said bluntly that the token cache price war has begun and that "DeepSeek loves us too much," adding that now is the best window for developers to evaluate migrating their workflows from Claude or GPT to DeepSeek.

Other netizens affirmed the significance of DeepSeek's price reduction, believing that such a steep discount will completely change the economics of production applications.

In this round, DeepSeek-V4 has cut the input cache-hit price to 1/10 of the initial price and added a limited-time 75% discount on the Pro version. Combined with the advantages of open source and long context, it is quickly attracting developers and building an application ecosystem, which may mean that small and medium-sized teams can also afford to build their businesses on top-tier models.