Nvidia is accelerating the launch of Vera Rubin, its next-generation flagship AI platform. According to the latest reports, the platform will begin shipping to major North American cloud service providers and AI customers as early as July this year and will enter full mass production in the second half of 2026. The new production and shipping schedule largely refutes earlier rumors that Vera Rubin had run into design and specification problems.

A few days ago, reports circulated in the industry that Vera Rubin's design and specifications might be adjusted or had even run into problems, a situation described as similar to the turmoil the Blackwell GPU servers went through before their release. However, drawing on the experience it has built up with supply chain partners in delivering successive generations of AI racks and servers, Nvidia has again shown that it can resolve technical flaws quickly before mass production. A report in Taiwan's "Economic Daily", citing supply chain sources, said that Nvidia has finalized the mass production version of Vera Rubin with its ODM partners and has set a clear rollout schedule.

According to the report, Nvidia will begin trial production of the Vera Rubin platform in June this year, and the first batch of servers will ship from July to several large cloud service providers and AI data center customers in North America. The initial customer list includes Microsoft, Google, Amazon, Meta and Oracle. Nvidia is likely to highlight its close cooperation with these cloud giants on Vera Rubin in its upcoming Computex 2026 keynote. The report also said that TSMC began mass production of Vera Rubin chips on its 3nm process earlier this year, while contract manufacturers such as Foxconn, Quanta, and Wistron will ramp up production of complete systems and racks in the second half of this year, with large-scale shipments possible as early as the third quarter of 2026.

With the final production specifications now settled, earlier claims that the Vera Rubin platform might undergo significant design or specification changes are being described as "inconsistent with the facts or based on early information that has been subsequently revised." The industry estimates that each Vera Rubin AI server rack costs as much as approximately US$180 million. With this platform, Nvidia's potential share of the global AI infrastructure market is expected to reach the US$1 trillion level. This is expected not only to expand profit margins significantly but also to bring a new round of growth to partners, including storage and memory suppliers.

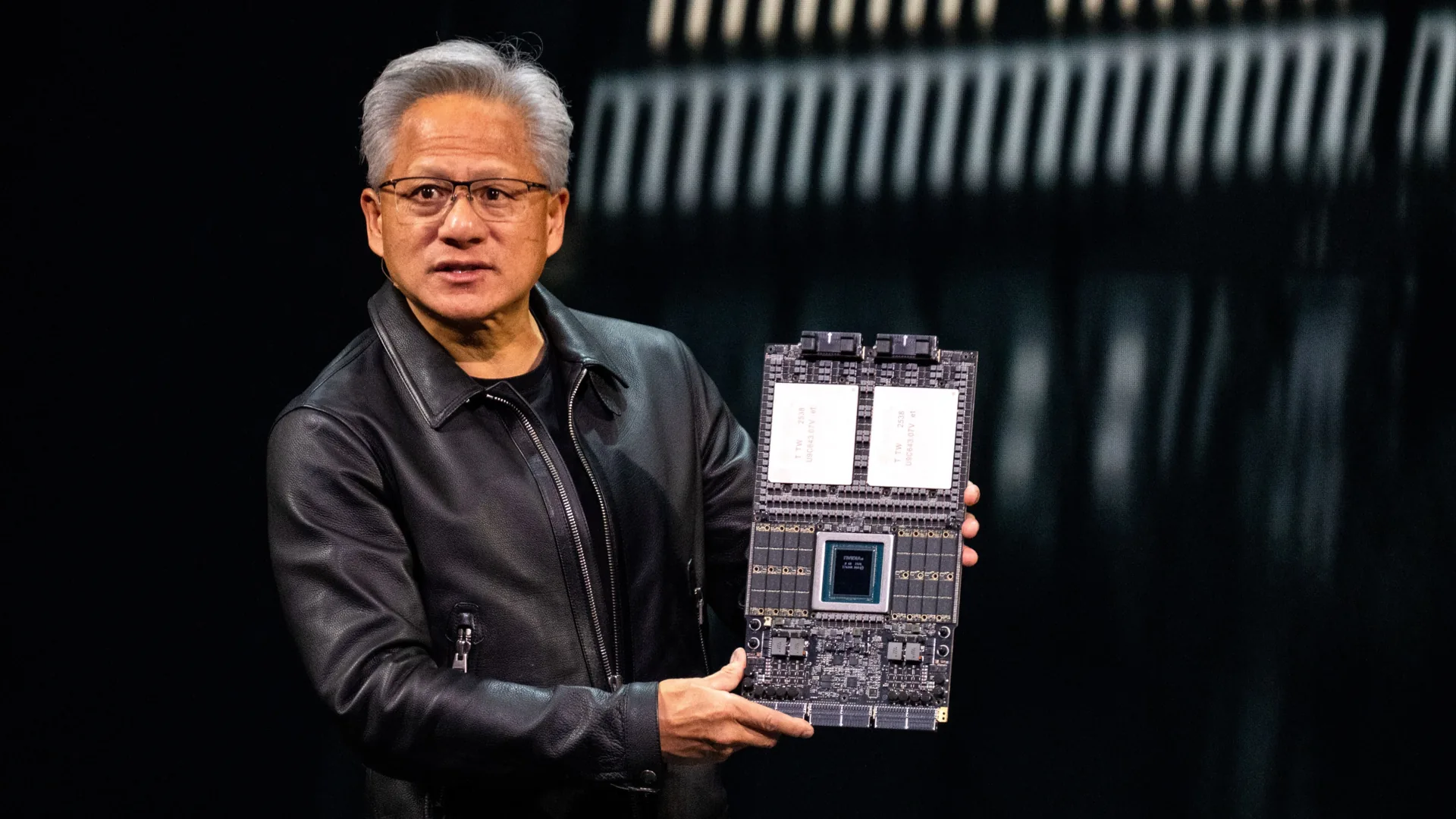

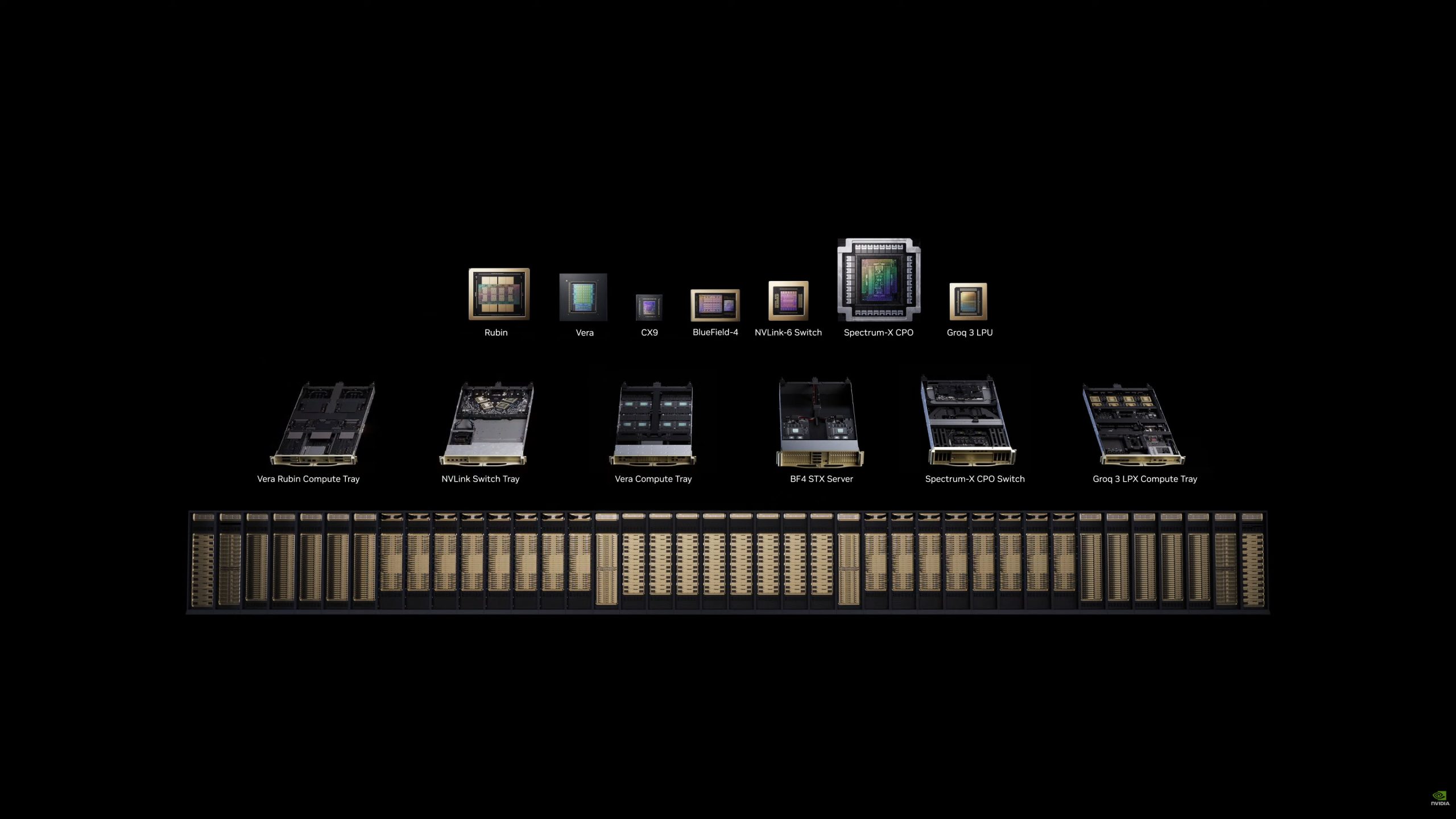

The chip and memory ecosystem around the Vera Rubin platform is being upgraded at the same time. Partner manufacturers plan to introduce next-generation HBM4 high-bandwidth memory for the Rubin GPU and, on the CPU side, a SOCAMM2 LPDDR5X solution with a capacity of up to 256GB, to meet the increasingly stringent bandwidth and capacity demands of large-scale model training and inference. At the hardware architecture level, Vera Rubin is described as a complex platform composed of seven chips and supported by a powerful software stack, and it is considered currently unrivaled in the industry. Nvidia has said that, building on Vera Rubin, it expects to raise its computing output to 40 million times the current level over the next ten years. Based on earlier technology previews, the industry also broadly expects the platform to deliver another major leap in AI computing power.

Judging from the timetable, Vera Rubin is moving past rumors and uncertainty and into actual trial production and shipment. With the first racks arriving in North American cloud service providers' data centers from July, and Taiwanese contract manufacturers entering full mass production in the second half of the year, Vera Rubin is set to become Nvidia's key asset in the next stage of AI infrastructure competition and to have a profound impact on the global cloud computing and AI industry landscape.