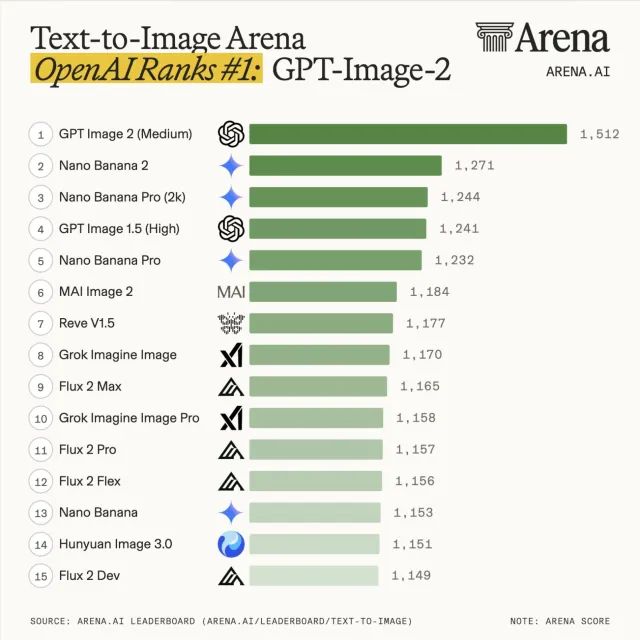

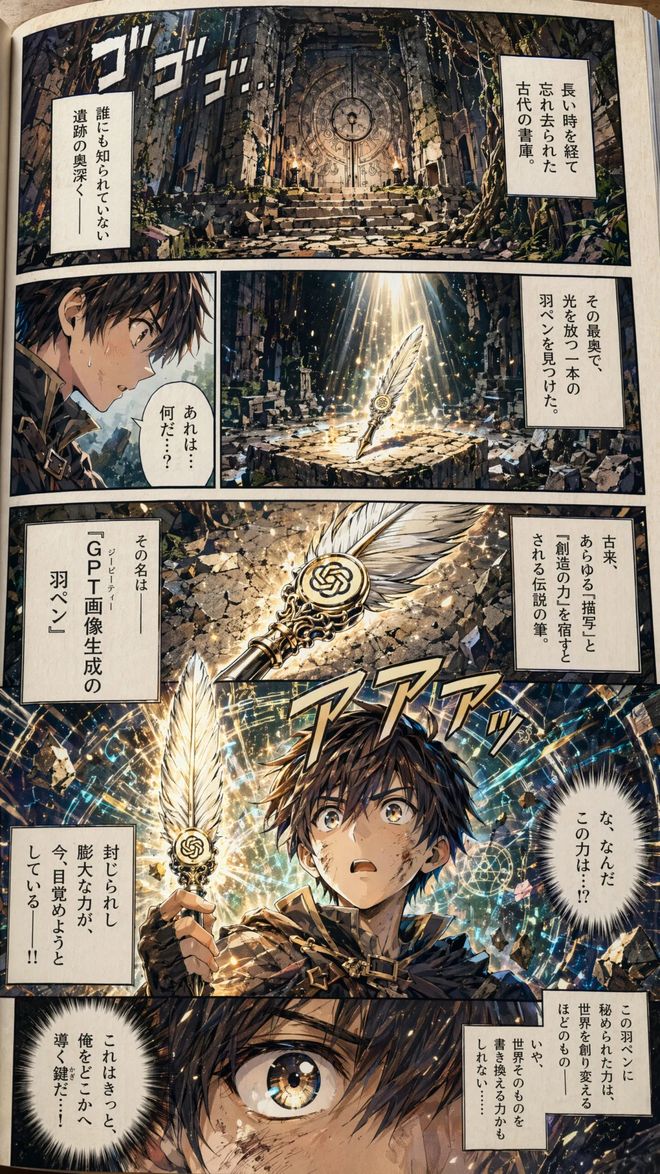

On release day, GPT Image 2 swept all three leaderboards. Within 12 hours of going live, it topped the Text-to-Image, Single-Image Edit, and Multi-Image Edit sub-leaderboards. Arena's official description: "a clean sweep".

On the main Text-to-Image leaderboard, GPT Image 2 scored 1512 points and Nano Banana 2 scored 1271. The 241-point gap is the largest in Arena history.

“No model has ever dominated Image Arena with this disparity,” Arena officials said.

Across all blind matchups in Image Arena, GPT Image 2's win rate was 93%: out of every 100 blind pairings, voters picked the OpenAI image 93 times.
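To put the 241-point gap in perspective, here is a minimal sketch that converts a rating difference into an expected head-to-head win rate. It assumes the textbook logistic Elo formula with a 400-point scale; Arena's actual scoring method may differ, and the function name is illustrative only. Under this assumption the gap works out to roughly an 80% expected win rate against Nano Banana 2 specifically. The 93% figure above covers all blind matchups, which presumably include lower-ranked opponents, so the two numbers need not agree.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected win probability of A over B under the standard logistic Elo model.

    Assumption: a 400-point scale, as in classic Elo. Arena's exact method
    is not described in this article.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Scores quoted in the article for the Text-to-Image leaderboard.
gpt_image_2 = 1512
nano_banana_2 = 1271

gap = gpt_image_2 - nano_banana_2
p = elo_expected_score(gpt_image_2, nano_banana_2)
print(f"gap = {gap} points, expected head-to-head win rate = {p:.1%}")
```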

"If you think of DALL-E as cave paintings and Images 1.0 as ancient art, then Images 2.0 is the Renaissance."

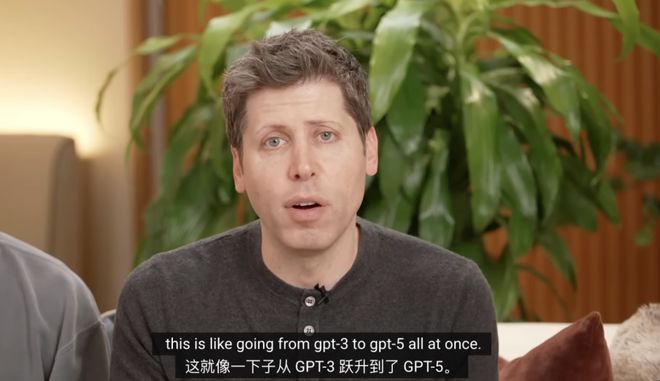

OpenAI introduced Images 2.0 at the opening of its launch event, and Altman called it a generational leap:

It feels like jumping from GPT-3 straight to GPT-5.

https://www.youtube.com/watch?v=sWkGomJ3TLI

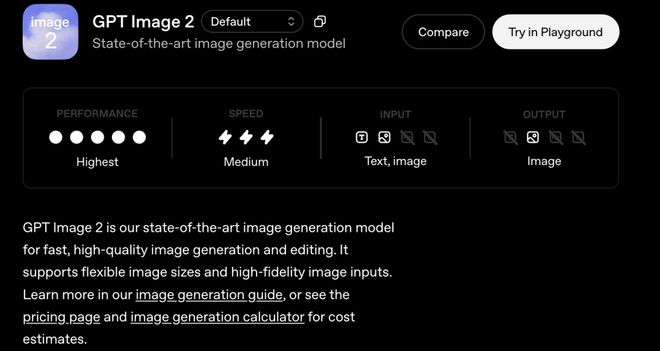

OpenAI's official API documentation also describes Images 2.0 in superlative terms.

https://developers.openai.com/api/docs/models/gpt-image-2

But the real story is not in the data.

Held down by Google for half a year

OpenAI finally strikes back

Rewind to August 2025.

Google released Nano Banana. The image generation model, embedded in Gemini, was an instant hit with consumers.

On the Q3 earnings call three months later, Google CEO Sundar Pichai personally disclosed a set of figures: Gemini's monthly active users grew from 450 million in July to 650 million in October.

Josh Woodward, head of Google Labs, said that much of this growth comes from the image generation boom driven by Nano Banana.

In November, Google followed up with Nano Banana Pro. Its text rendering was striking: for the first time, AI images could spell words correctly, and OpenAI fell behind with consumers.

On November 18, Google struck again. Gemini 3 reached the top of LM Arena right after its release with 1501 points, becoming the first frontier model to break 1500.

At the end of that month, Altman issued an internal "code red" memo to the entire company.

According to The Information, Altman privately told employees that Gemini 3 could bring economic headwinds to OpenAI. Yahoo Finance subsequently reported that under code red, OpenAI paused development of other products such as AI agents and redirected all resources to ChatGPT.

In December, OpenAI rushed out GPT Image 1.5. It ranked first on Arena but failed to catch on with consumers.

In February 2026, Google struck again: Nano Banana 2 arrived and retook the Arena lead.

OpenAI lost again.

It was not until April 21, when GPT Image 2 went live, that OpenAI regained the lead.

AI image generation is about to be redefined

Why does GPT Image 2 lead by 241 points?

The core answer lies at the architectural level.

GPT Image 2 is not a diffusion model of the Stable Diffusion generation.

OpenAI research director Boyuan Chen calls it a "generalist model" that was "revamped from scratch." Internally, OpenAI refers to it as the "image version of GPT."

However, during the press briefing Chen declined to say publicly whether the architecture is diffusion or autoregressive.

Outside observers generally understand it as an "image generation system with reasoning and planning": plan first, then draw. This is the biggest difference between GPT Image 2 and the previous generation of image models.

OpenAI gave it a new label in its official description: the first image model with native thinking capabilities.

It thinks before it draws, checks after it draws, searches the web when needed, and produces 8 coherent images at a time.

This isn't a paintbrush; it's a visual assistant that thinks.

Arena ranking breakdown data shows:

In the Text Rendering category, GPT Image 2 gained 316 points over the previous generation; cartoon/animation and portraits each gained 296 points; the product, 3D, and photorealistic categories gained between 247 and 277 points.

Nano Banana Pro first cracked text rendering in November 2025, but its accuracy at the time was 94%. GPT Image 2 pushes it to 99%.

In a live demo at the OpenAI launch event, GPT Image 2 was asked to draw a bowl of rice in which only a single grain has the model's name written on it.

As for concrete capability demos, OpenAI President Greg Brockman shared several on his X account.

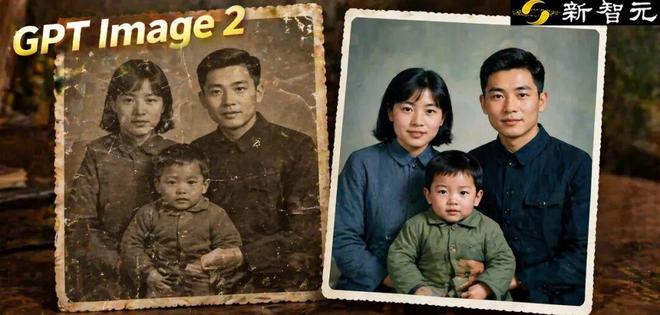

The first case is restoration of old photos.

Faded, yellowed family photos can be turned into high-definition color versions with a single prompt.

The phrase "high-fidelity image inputs" in OpenAI's official API documentation refers to the model's ability to retain the details of the original image: on the input side it can accurately read the details of faded, damaged, or blurry old photos, and on the output side it can re-render a clear version.

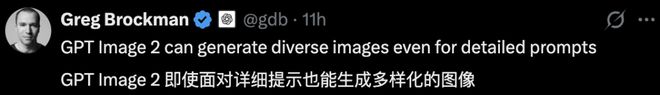

In the second case, Brockman reposted a set of test images from user @doodlestein, who used the same complex prompt to ask GPT Image 2 for a mathematical explainer diagram.

He commented that GPT Image 2 can generate images in different styles even from complex prompts.

@doodlestein's test: GPT Image 2 draws a linear algebra explainer from a single prompt. The model produced 4 completely different versions in one go: each teaches eigenvectors using the Mona Lisa, yet the composition, color palette, and information density differ in every version.

The real value of this case is not "being able to draw math diagrams" but solving a major pain point of AI image generation over the past two years: uniform output and poor control over variations.

GPT Image 2 makes "one prompt, 4 completely different directions" a product-level capability for the first time.

A senior LM Arena tester in the industry commented:

The gap between GPT Image 2 and Nano Banana Pro is as big as the gap between Nano Banana Pro and DALL-E.

A whole generation has passed.

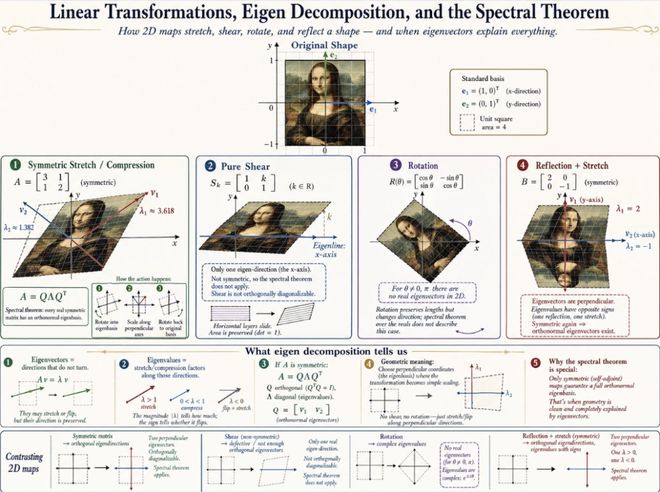

A manga-style comic page generated by GPT Image 2 in Thinking mode: starting from a simple prompt, the model keeps characters consistent and lays out a multi-panel story.

DALL-E retires

Adobe and Canva are backed into a corner

On launch day, downstream tool integration was faster than the tech community expected.

Figma, Canva, Adobe Firefly, fal, and Hermes Agent were all integrated on April 21st.

The API pricing is even more threatening to competitors:

High-quality images cost $0.21 each via the API; ChatGPT Plus costs $20 a month, with image generation included in the subscription.

This pricing could trigger the image generation industry's largest restructuring of 2026.
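As a rough illustration of how the two price points compare, the sketch below computes the monthly image count at which per-image API billing overtakes the flat subscription fee. It uses only the two figures quoted above; the constant and function names are illustrative, and it assumes, for simplicity, that the subscription has no usage cap and that every image is billed at the high-quality rate, neither of which is confirmed here.

```python
import math

API_PRICE_PER_IMAGE = 0.21  # USD per high-quality image (figure quoted in the article)
PLUS_MONTHLY_FEE = 20.00    # USD per month for ChatGPT Plus (figure quoted in the article)


def api_cost(images: int, price: float = API_PRICE_PER_IMAGE) -> float:
    """Total API spend in USD for a given number of images at a flat per-image price."""
    return images * price


# Smallest monthly image count at which API spend exceeds the subscription fee.
# Assumption: no usage limits on the subscription side.
break_even = math.ceil(PLUS_MONTHLY_FEE / API_PRICE_PER_IMAGE)

print(f"API spend passes the subscription fee at {break_even} images per month")
print(f"{break_even - 1} images via API: ${api_cost(break_even - 1):.2f}")
print(f"{break_even} images via API: ${api_cost(break_even):.2f}")
```

Below that threshold, paying per image is cheaper; above it, the flat fee wins, subject to whatever limits the subscription actually imposes.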

A photorealistic candid shot generated by GPT Image 2. Coastline, overcast sky, vintage cars, film grain: visuals like this once required a professional photographer on location plus post-production, and can now be produced with a $0.21 API call. OpenAI researcher Gabriel Goh said photorealism is the capability that excites him most about the model.

On May 12, DALL-E 2 and DALL-E 3 were officially retired.

They were the pioneers of the AIGC visual revolution that began in 2022. Three years later, OpenAI's own successor has consigned them to history.

OpenAI mentioned in the official release notes:

Images are not decoration, they are language. A good picture does the same thing as a good sentence: selects, arranges, reveals.

This represents a shift in product philosophy.

Of course, there are dissenting voices. In hands-on testing, ZDNet found that GPT Image 2 could not accurately reproduce brand logos; even ZDNet's own logo came out distorted.

Nano Banana 2 still has advantages in portrait realism and multi-reference consistency.

GPT Image 2 is not perfect yet, but the competitive landscape has shifted.

The era of rendering is over

The age of reasoning has just begun

Google plugs reasoning into image models. OpenAI plugs image tools into reasoning models. The 242-point Elo gap measures the difference in architecture between the two.

This comment from implicator.ai marks the dividing line between two eras of image generation.

2022 to 2025 is the era of rendering.

DALL-E, Midjourney, and Stable Diffusion all aimed at producing a convincing likeness. The model was the brush, the user was the painter, and the prompt was the blueprint.

GPT Image 2 represents the era of reasoning.

The model thinks before it draws, and it can search, self-check, and complete tasks. It's not a paintbrush; it's an assistant that can draw.

What really deserves attention in the GPT Image 2 release is that image generation is moving toward "thinking".

In the short term, Black Forest Labs (Flux 2) may be in the most trouble.

Kingy AI put it bluntly: as a diffusion-first vendor, Flux 2's entire technical pipeline conflicts architecturally with the "token-by-token" reasoning approach.

Either merge the two approaches or rewrite from scratch; there is no third way.

In the medium term, Google may counterattack next quarter. Nano Banana 3, or an Imagen-Reason, is unlikely to be far off.

In the long term, the impact of this goes far beyond image generation.

When AI begins to use "thinking" to produce images, video, audio, and code, the entire generative AI paradigm will change accordingly.

When Altman typed "code red" in his memo last December, he probably didn't expect to return to the top of Arena in this way five months later.

But the real significance of this counterattack may not be that OpenAI beat Google, but that OpenAI rewrote the rules of the image generation race.

Arena.AI Single-Image Edit leaderboard (Image Edit Arena): GPT Image 2 (medium) holds the top spot with 1510+ points. Second through fifth places are all taken by OpenAI's own models and Google's Gemini series. https://arena.ai/leaderboard/image-edit

When will Google throw its next punch? The answer will shape the AI landscape in the second half of 2026.

And before that punch is thrown, no one knows how long GPT Image 2 will sit at the top of Arena.

References:

https://x.com/gdb/status/2048449695622586576

https://arena.ai/leaderboard/image-edit