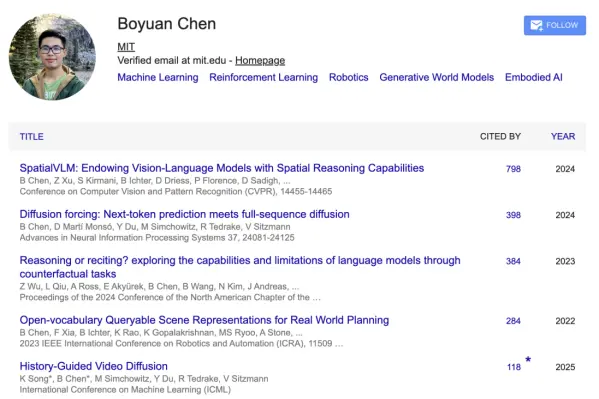

OpenAI research scientist Chen Boyuan posted an article on Zhihu that opens very directly: "Hello everyone, I am Chen Boyuan, a research scientist on the GPT Image team. I was the lead trainer of the GPT image generation model released last week!" He also mentioned that the model's Chinese text rendering has finally been fixed this time, and that Chinese users with feedback can reply to him directly.

After the release of ChatGPT Images 2.0, many people’s first reaction was: this model's Chinese ability is almost unreasonably good.

Image models in the past were somewhat "illiterate". They could draw landscapes and figures, but once Chinese characters were involved, the text easily turned into an indecipherable scrawl. GPT-image-2 is different. It can not only write the correct characters, but also typeset them, break them into sections, and generate Chinese infographics with a logical structure.

The old trick of checking the text to tell whether an image was generated by AI no longer works with this generation.

Chen Boyuan is one of the people who truly stepped into the spotlight during GPT Image 2's training and capability demos. At the launch event, he and Sam Altman demonstrated the model's text rendering. After the release, he shared many behind-the-scenes details about the images on the official website in his Zhihu post: during LMArena's double-blind testing, GPT Image 2 went by the code name "duct-tape"; many of the images in the official blog post were made by him with the model, including Chinese comics, rice-grain engravings, multilingual text, visual proofs, and automatically generated QR codes. These images look like promotional material, but each was in fact designed as a test of the model's capabilities.

He offered a playful explanation for the "duct-tape" code name:

"As for why it's called duct tape... of course it's because you can use duct tape to stick bananas on the wall!"

01

He's asking a slower question

Chen Boyuan is not the kind of researcher people remember at a glance. He does not speak publicly often, and he does not deliberately cultivate a personal image. He writes blog posts and shares light-hearted content, but these read more like a personal record than an attempt to build influence.

Instead, his presence comes mostly through the models themselves.

He is now a researcher at OpenAI, involved in training image models. Before that, he completed a PhD in electrical engineering and computer science at MIT with a minor in philosophy. He also worked on multimodal model research at Google DeepMind.

This résumé is eye-catching enough, but what matters more is the set of questions he has pursued over the long term.

From DeepMind to OpenAI, Chen Boyuan’s research direction has hardly changed. While most people are still discussing whether a model can write better or draw more realistically, he is concerned with a more basic level: what the model is actually "understanding".

Specifically, it can be seen as three questions: How does the model understand the image? What is the relationship between image and language? When a model faces the real world, is it generating results or simulating the world?

These questions sound abstract, but they pretty much define the boundaries of today's generation of models.

On his personal homepage, he states his research direction very directly: world models, embodied intelligence, and reinforcement learning.

The so-called world model can be understood as one thing: allowing AI to form a judgment about the world internally.

It must not only know what is happening in front of it, but also be able to predict what will happen next.

This is a little different from today's common LLM (large language model). An LLM mostly processes language, while a world model is closer to a structured representation: it needs to understand space, time, cause and effect, and the consequences of actions.

To use a very simple example, if AI really "understands" the world, it should know that a plastic cup will bounce when it is dropped on the ground, while a glass cup will break.

Embodied intelligence and reinforcement learning can be understood as an extension of this problem - if a model really understands the world, it should not just answer questions, but should also be able to act and constantly revise its judgment during action.

The work he is involved in is often not a single task optimization, but an attempt to connect generative models, visual understanding and decision-making systems together.

One of his most representative works is a study called Diffusion Forcing.

This research tackles a very basic question: should a model generate its output step by step, or all at once?

LLMs do the former: they generate flexibly, but are prone to errors over long content. Diffusion models are closer to the latter: they are more stable, but lack that sequential structure.

Chen Boyuan's approach is to put these two methods in the same model, so that the model can generate step by step while still constraining the sequence as a whole.
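As a rough illustration of how the two generation styles can live in one model, the toy sketch below follows the published description of Diffusion Forcing, in which each token in a sequence is assigned its own independent noise level. Everything here is a simplified assumption made for illustration: the function names are hypothetical, tokens are plain floats, and no neural network is involved. It only shows the noising schedules, not the actual training code.

```python
import math
import random


def add_noise(x, noise_level, rng):
    """Blend a clean value with Gaussian noise.

    noise_level = 0.0 keeps x unchanged; noise_level = 1.0 is pure noise.
    (A simple variance-preserving mix, assumed here for illustration.)
    """
    keep = math.sqrt(1.0 - noise_level)
    return keep * x + math.sqrt(noise_level) * rng.gauss(0.0, 1.0)


def per_token_noising(tokens, rng):
    """Diffusion Forcing-style noising: every token gets its own level."""
    levels = [rng.random() for _ in tokens]
    noisy = [add_noise(x, k, rng) for x, k in zip(tokens, levels)]
    return noisy, levels


def full_sequence_levels(length, k):
    """Classic full-sequence diffusion: one shared level for all tokens."""
    return [k] * length


def next_token_levels(length, position):
    """Step-by-step generation: the past is clean, the rest is pure noise."""
    return [0.0 if i < position else 1.0 for i in range(length)]


rng = random.Random(0)
noisy, levels = per_token_noising([1.0, 2.0, 3.0, 4.0], rng)
print("independent levels:", [round(k, 2) for k in levels])
print("all-at-once levels:", full_sequence_levels(4, 0.5))
print("step-by-step levels:", next_token_levels(4, 2))
```

Seen this way, "all at once" and "step by step" are two special patterns of noise levels, and a model trained on independently sampled levels can in principle be run in either pattern or anything in between.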

If Diffusion Forcing is about unifying in the time dimension, then SpatialVLM, another work he participated in, is about complementing capabilities in the spatial dimension.

This work addresses a long-standing problem: although a model can look at a picture and describe it, it does not really understand spatial relationships. It does not know distance, size, or the relative positions of objects.

In order to solve this problem, his team built a three-dimensional spatial reasoning system so that the model can not only "see" but also "reason".

Similar ideas have also appeared in other work, such as the History-Guided method that uses historical information to guide generation, or research on unified modeling of vision, action and language. These efforts may seem scattered, but they all point in the same direction: making the model not just output results, but form a stable representation internally.

In addition to his serious research direction, Chen Boyuan also occasionally reveals a very vivid personal interest.

Besides posting on Zhihu this time, for example, he makes a point of mentioning on his personal homepage that his hobby is making boba, and even his Zhihu name is "MIT Milk Tea Store Manager."

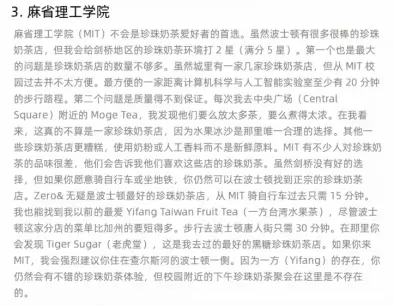

He also wrote a blog post ranking the top computer science schools in the United States. The criterion was not research strength but bubble milk tea.

He ranked Berkeley first because the campus is “almost surrounded by high-quality milk tea shops,” while MIT received a low score because “there are too few milk tea shops nearby and the quality is unstable.”

The tone is relaxed, but it reveals his research habits: break a complex problem apart, find comparable dimensions, and then make a judgment.

His research does something similar, except that the object under study is a model.

02

He avoided the easier direction

If you only look at the development path of image models, the logic of the past is actually very clear: larger datasets, higher resolution, and a more stable generation process. Most of the improvements focused on making the output look more lifelike.

But as models begin to handle more complex content, this path reaches a bottleneck: when an image contains not only visual elements but also text, structure, and even logical relationships, the question is no longer just whether it looks right, but how all of this information can hold together at the same time.

The issue shifts from generation quality to structural consistency.

Not every researcher takes on this type of problem. It does not map directly to any single evaluation metric, and it is hard to translate into product improvements in the short term. By contrast, progress is often easier to see when working on resolution, style, and detail.

Chen Boyuan's path happened to avoid those "easier" directions: from his academic research onward, his focus has been not on single-modality abilities, but on how different abilities connect.

For a long time, visual models, language models and decision-making systems have developed independently. They can be connected via interfaces, but are often separate internally. Therefore, although the model can "call on capabilities", it is difficult to demonstrate consistent understanding.

Chen Boyuan's work is to try to change this situation.

Many of the capabilities of the model this time were demonstrated at the intersection of "images, text, memes, real objects and cultural context".

Chen Boyuan said that many of the pictures on the official blog were made by him. The entire blog is generated using images, with no ordinary text at all. In other words, many of the examples users see on the official website are not just promotional materials, but part of the model capabilities themselves.

For example, that Chinese easter egg comic.

He wanted to make a very funny comic, so he riffed on internet memes, including the banana meme. To demonstrate the model's text rendering, he specifically asked it to add text in multiple languages to the picture, and also to generate very small Chinese characters in the lower right corner of the hometown poster to test how fine a level of detail the model could handle.

More importantly, this picture is not a composite: according to him, the entire image, including the picture-in-picture and the picture-in-picture-in-picture, was generated in one pass. He was worried that people would think it had been stitched together, so he deliberately added a note at the bottom of the picture.

This illustrates exactly what makes GPT Image 2's task hard. If an image model of the past could write a few large characters without mistakes, that was considered very good. GPT Image 2 has to handle a whole set of layers: it needs to know that this is a photo of a comic book, that there are pictures inside the comic, and pictures inside those pictures; it needs to place text in different languages on different layers; and it needs the text to relate coherently to the picture rather than being scattered across it at random.

Another example is rice grain engraving.

Chen Boyuan said that at first he felt ordinary text rendering was not stunning enough, so, at a teammate's suggestion, he made a 4K picture showing a pile of rice grains, one of which had words engraved on it.

This tests the model's ability to control text at extremely small scales.

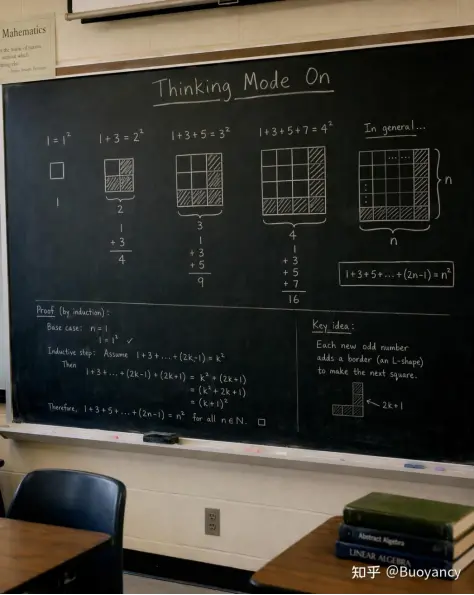

And that blackboard visual proof.

Chen Boyuan said: "It seems too simple if you just ask it to solve ordinary math equations and the like. Nano banana seems to be able to do that through thinking mode plus text rendering. So I thought of a visual proof that I like very much, to really test GPT Image 2's unique visual reasoning. The prompt for the picture is to prove on a blackboard, visually rather than algebraically, that the sum of the odd numbers starting from 1 is a square. The algebraic solution is actually easy to reason out, but the graphical solution can only be done by a visual model."
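The classic picture behind this claim wraps L-shaped layers around a corner cell: the k-th layer adds 2k - 1 cells and extends a (k - 1) × (k - 1) square into a k × k square, so 1 + 3 + ... + (2n - 1) = n². The short sketch below reproduces that layering as a text grid and counts the cells in each layer. It illustrates the standard argument only; the function names are hypothetical, and it is not a reconstruction of what the model drew.

```python
def gnomon_grid(n):
    """Label each cell of an n x n square with its L-shaped layer (1..n)."""
    return [[max(r, c) + 1 for c in range(n)] for r in range(n)]


def layer_sizes(n):
    """Count how many cells each L-shaped layer contributes."""
    flat = [cell for row in gnomon_grid(n) for cell in row]
    return [flat.count(k) for k in range(1, n + 1)]


n = 4
for row in gnomon_grid(n):
    print(" ".join(str(cell) for cell in row))
print(layer_sizes(n), "sums to", sum(layer_sizes(n)))
```

For n = 4 the layers hold 1, 3, 5, and 7 cells, which together fill the 4 × 4 square of 16 cells.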

This is also one of the most noteworthy changes in the release of GPT Image 2: it can begin to turn an abstract relationship into an image structure, and then express this structure visually.

Therefore, rather than saying that GPT Image 2 is "generating images", it is better to say that it is generating a visual expression with structure.

Comics, posters, visual proofs... none of these things are purely pictures in nature. They also contain text, typography, hierarchy, object relationships, task goals and aesthetic judgments.

Past image models tended to break down here because they treated images as pixel outputs. This stronger generation of image models must treat an image as a structured expression.

03

He is not alone

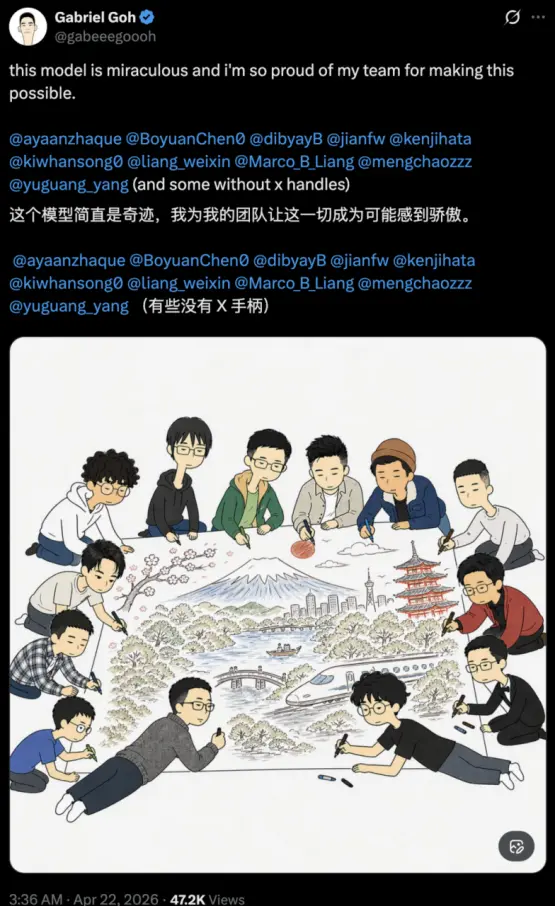

Within OpenAI, not many people are actually involved in training the model. After the release of GPT-image-2, research lead Gabriel Goh publicly thanked the team's members on social media.

The list is not long, only a dozen people.

This is more like a small team than a large engineering system.

Team members work in different directions: some on vision, some on generation mechanisms, and some on system architecture. But they ultimately point to the same thing: giving the model a set of capabilities that can handle images, language, and structure at the same time.

The illustration in the tweet is also like a metaphor to some extent: a group of people gather together, each person is responsible for a part, and finally they form the same picture.

The structure of the model, the boundaries of capabilities, and even "what the image should be" are all made bit by bit in such a team.

One thing worth noting is that among the core team of more than a dozen people, we can see a considerable number of Chinese names.

In addition to Chen Boyuan, they include Jianfeng Wang, who works on vision-language modeling; Weixin Liang, who works on model evaluation and data issues; Yuguang Yang, who has long worked on image generation; and a number of other researchers involved in image generation and system training.

Chen Boyuan did not frame the release as a personal victory. At the end of the Zhihu article, he made a point of thanking the entire team, saying that everyone had done a great many things. In the final stretch before launch, besides fixing some small issues, he worked with colleagues from marketing and the art team to prepare the launch event and the website.

In other words, GPT Image 2 is the joint product of research, product, aesthetics, and communication. The model team needs to build the capabilities, the art team needs to know what kinds of pictures can show them off, and the marketing team needs to translate them into pictures that ordinary users can understand, want to test, and want to share.

That is why many of the examples in this release are unusual. They do not stop at generating a beautiful picture, but deliberately set hard problems: multiple languages, very small text, picture-in-picture, real objects, visual proofs, search-generated posters, and embedded QR codes.

Every picture tells the user: What you thought the image model couldn't do before, you can try again now.

From this perspective, Chen Boyuan's position is very special.

He sits on both the model training side and the release narrative side: he not only helped build the model, but also personally designed many of the pictures that let the outside world understand its capabilities.

GPT Image 2 is certainly not the work of Chen Boyuan alone, but judging from public information, Chen Boyuan is indeed one of the names most worthy of attention from the Chinese community in this image model release.

On the one hand, he was the lead trainer of the GPT image generation model released this time; on the other hand, he happened to be responsible for the breakthrough most easily perceived by Chinese users: Chinese text rendering.

When AI was finally able to write Chinese into complex images, the researcher behind it who had long studied world models, spatial understanding and generative consistency came to the forefront.

"Hopefully this time we have caught everyone securely," he said.