Google has disclosed for the first time that its security team discovered and blocked a zero-day exploit, suspected of having been developed with artificial intelligence, during an active cyberattack. According to a report released by the Google Threat Intelligence Group (GTIG), a "well-known cybercriminal threat actor" orchestrated the attack in an attempt to launch a "large-scale exploitation event" against an unnamed "open source, web-based system management tool," using the flaw to bypass the platform's two-factor authentication (2FA) mechanism.

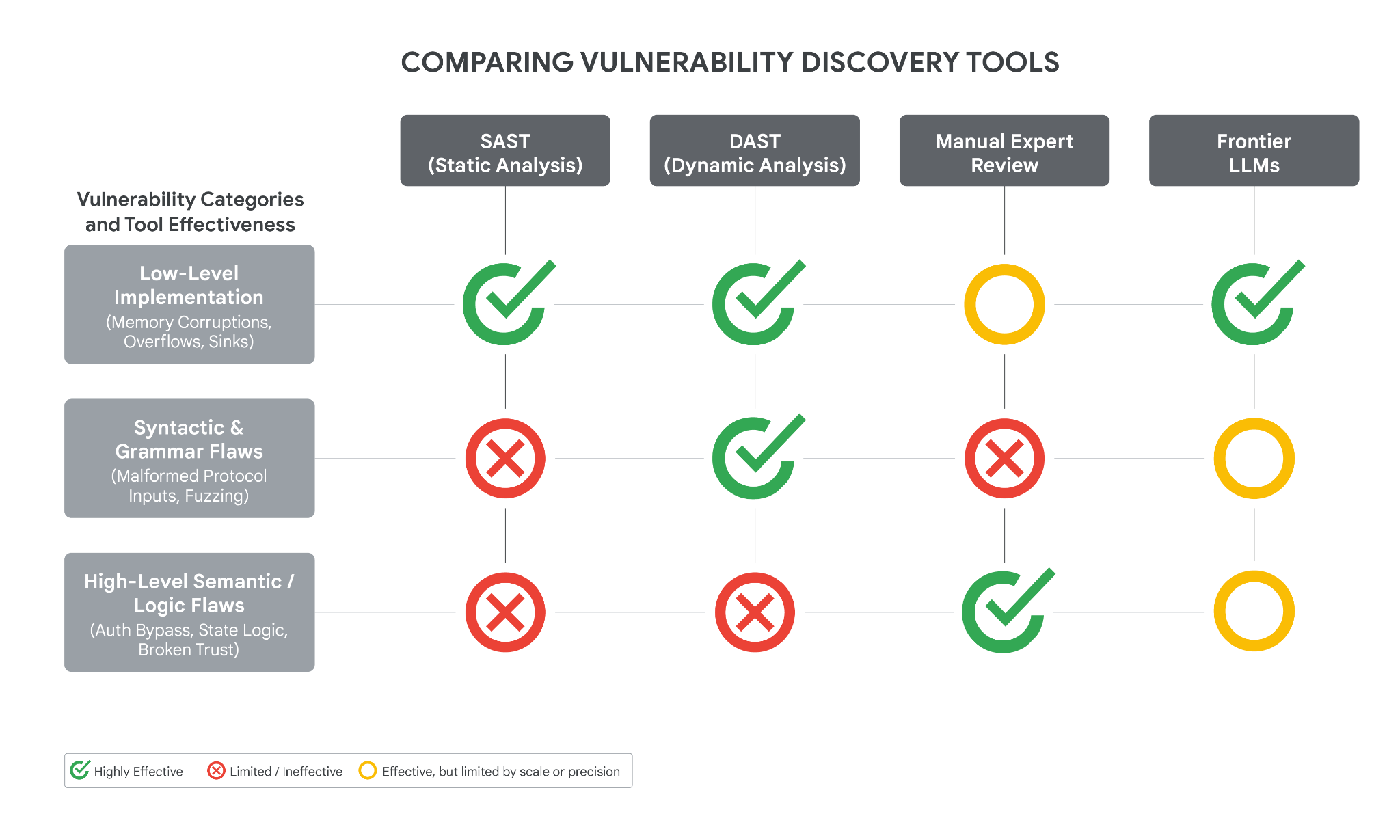

Google researchers found several signs that the Python exploit script used in the attack was generated by AI, including an "illusory CVSS score" (a severity rating that appears to have been made up) and a structured, textbook-like layout. These traits closely resemble formats common in the training data of large language models. The report describes the vulnerability as essentially a "high-level semantic logic flaw" caused by "hard-coding a trust assumption" in the platform's 2FA design, which gave attackers an entry point that automated tools could exploit at scale.
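The report does not name the tool or publish the flawed code, so the following Python sketch is purely illustrative and is not the actual vulnerability. It shows, under invented assumptions, what a hard-coded trust assumption in a 2FA check can look like in general: the function names, the `TRUSTED_SOURCE` constant, and the idea of trusting a client-reported address are all hypothetical.

```python
# Hypothetical illustration only; not the code from the affected tool.

TRUSTED_SOURCE = "127.0.0.1"  # assumed hard-coded "trusted" address


def verify_login_flawed(password_ok: bool, otp_ok: bool, claimed_ip: str) -> bool:
    """Flawed check: skips 2FA for requests that claim a trusted origin."""
    if not password_ok:
        return False
    # Logic flaw: claimed_ip is supplied by the client (for example via a
    # forwarded-address header), so an attacker can set it to any value.
    if claimed_ip == TRUSTED_SOURCE:
        return True
    return otp_ok


def verify_login_fixed(password_ok: bool, otp_ok: bool, claimed_ip: str) -> bool:
    """Safer check: the second factor is always required."""
    return password_ok and otp_ok
```

In this sketch, a request with a valid password, no valid one-time code, and a claimed address of `127.0.0.1` passes the flawed check but fails the fixed one. Nothing crashes and no memory is corrupted, which is why this kind of flaw is a matter of program logic rather than a conventional coding bug.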

The incident comes as the industry fiercely debates the capabilities of AI models aimed at cybersecurity scenarios. Examples such as the Mythos model launched by Anthropic and a recent AI-assisted discovery of a Linux kernel vulnerability have drawn sustained attention to AI's role in both offense and defense. Google said this is the first time it has found clear evidence of AI being directly involved in vulnerability exploitation during a real-world attack, but the research team added that it currently "does not believe that Google's own Gemini model was used in this attack."

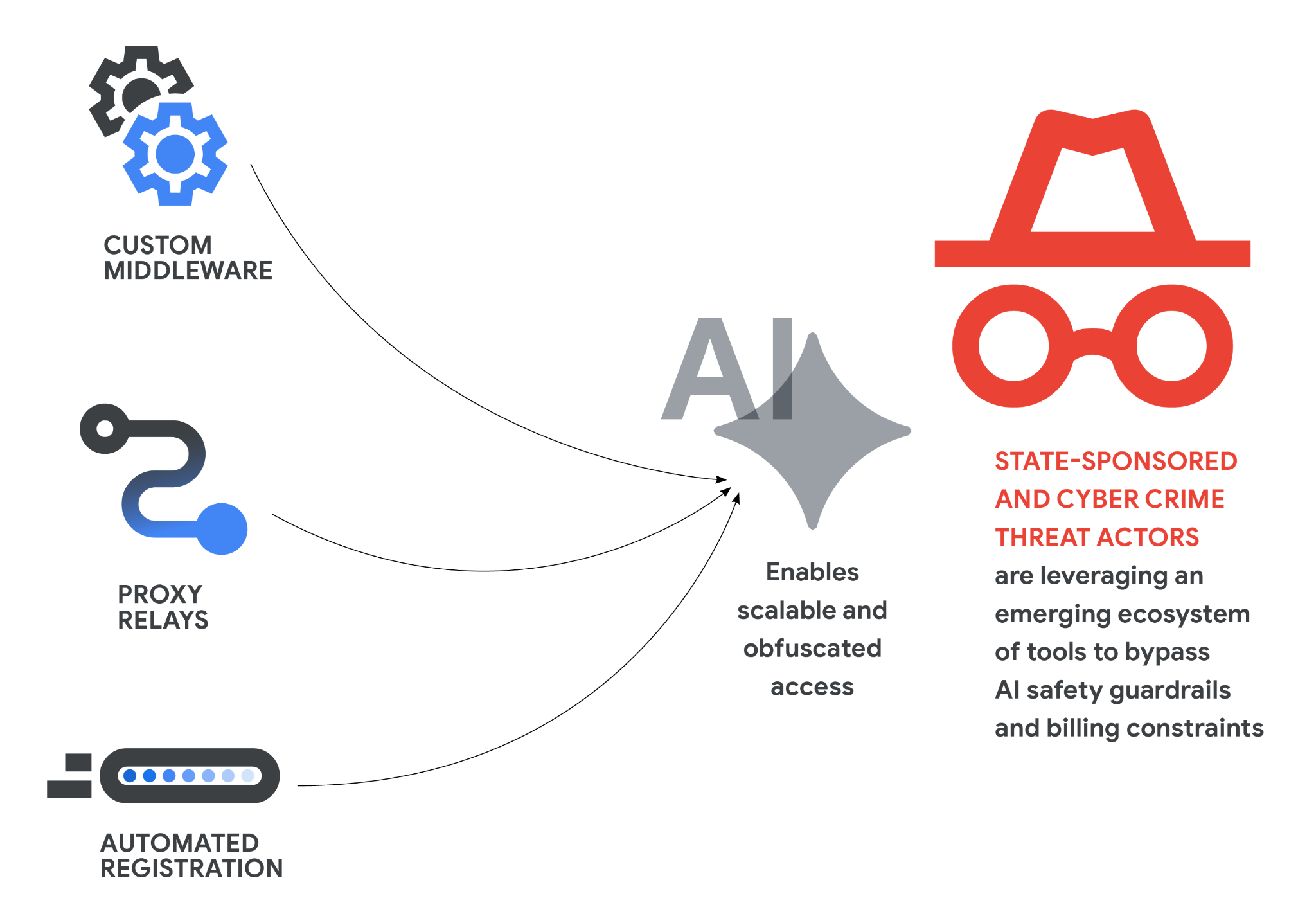

Google said it had successfully "disrupted and blocked" this specific attack, but warned that hackers are using AI ever more systematically to discover and exploit security vulnerabilities, with automation now extending from early intelligence gathering to vulnerability hunting and exploit writing. The report also notes that AI systems and their surrounding ecosystem are becoming a new attack surface: in search of new intrusion paths, attackers are beginning to target the integration components that give AI its capabilities, such as external tool interfaces and third-party data connectors that carry out tasks autonomously.

Beyond the use of AI to write attack code, Google's report also names an increasingly common technique: the "personality-driven jailbreak". The attacker carefully crafts prompts that have the model "act" as a senior security researcher or penetration testing expert, inducing it to produce output that its safety policies should block, such as help locating potential vulnerabilities in a system or ideas for exploiting them. Google emphasized that this attack pattern shows AI's role in cybersecurity rapidly evolving from a purely defensive tool into a new "multiplier" for both offense and defense. In the future, zero-day attacks with deep AI involvement may no longer be the exception.