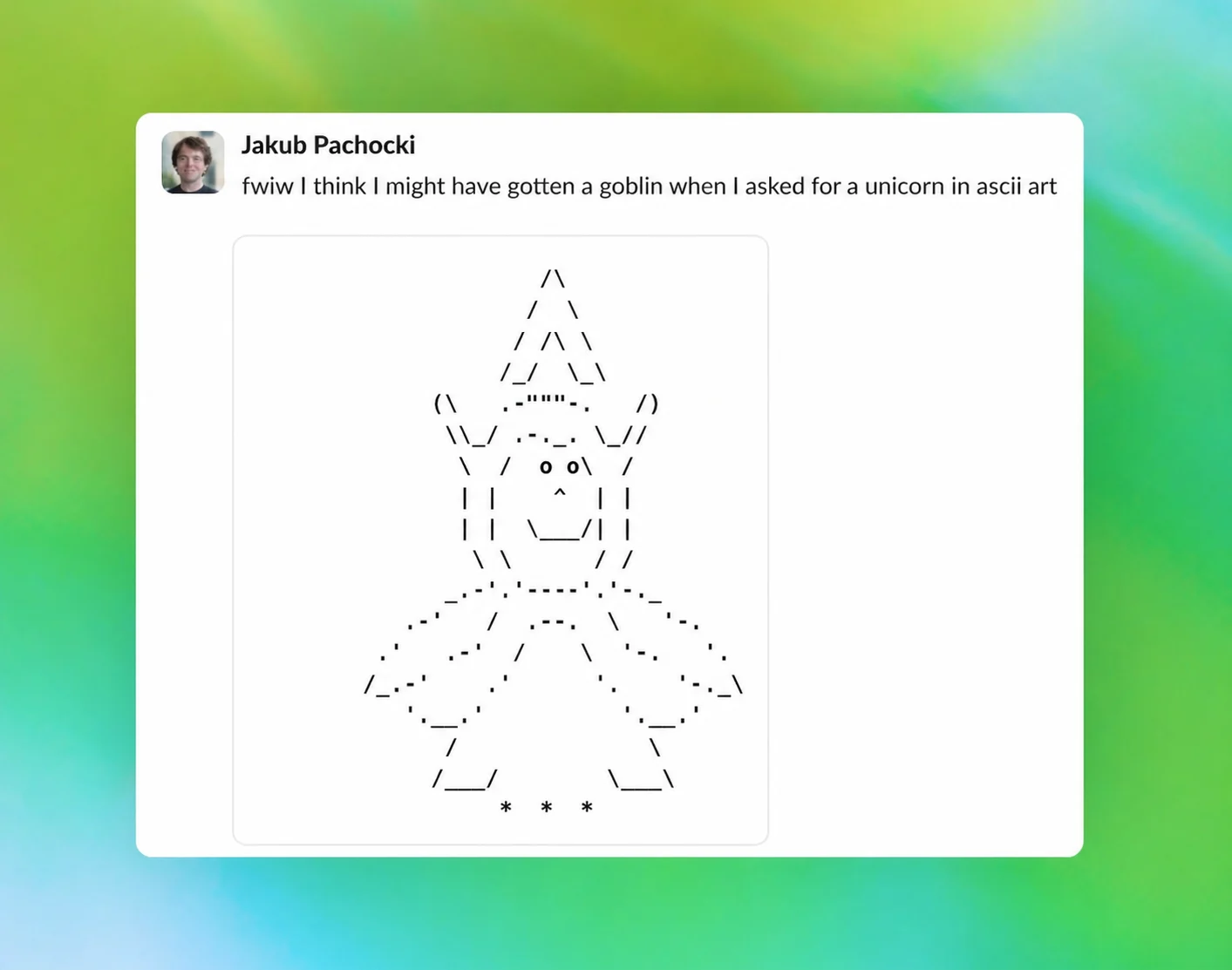

After *Wired* revealed that OpenAI had given its coding model an internal instruction to "never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures," OpenAI published a post on its official website explaining the behavior, describing it as a "strange habit" the model picked up during training.

OpenAI stated that these metaphors involving goblins and similar creatures were first clearly noticed in GPT-5.1, particularly when the "Nerdy" personality option was enabled. According to the company, the habit did not disappear as later models were released; instead, it gradually spread.

OpenAI explained that the root of the problem lies in reinforcement learning. Although the relevant rewards were originally applied only under the "Nerdy" personality, reinforcement learning does not guarantee that a learned behavior stays confined to the conditions that triggered it. Once a particular style or verbal quirk is rewarded, later training can carry it into other contexts, and the tendency is reinforced further when those outputs are reused as supervised fine-tuning or preference data.

According to reports, after OpenAI retired the "Nerdy" personality in March this year, references to goblins and gremlins did decrease, but they have not disappeared entirely. They persist in particular in GPT-5.5, the model behind the Codex programming tool, because OpenAI began training it before it had identified the root cause.

As a result, OpenAI ultimately had to add very specific constraints to Codex, explicitly instructing it not to mention these creatures. The report also noted, however, that OpenAI has publicly shared a way to lift the restriction for anyone who wants their AI to keep a bit of this "goblin style" when writing code.

Judging from this response, the seemingly absurd "goblin problem" points to a real challenge in large-model training: verbal habits meant to appear only under a specific personality setting can spill over into the model's broader behavior through the combined effects of the reward mechanism and subsequent training. For OpenAI, the post is not only a public explanation of a stylistic quirk that got out of hand, but also a glimpse into how complex it is to correct subtle behavioral drift in generative AI.