DeepSeek has released a multimodal reasoning model and an accompanying technical report on GitHub, titled "Thinking with Visual Primitives". The model is built on DeepSeek V4-Flash, a mixture-of-experts (MoE) architecture with 284B total parameters, of which 13B are activated during inference, and it proposes a new multimodal reasoning paradigm.

The paper argues that existing multimodal large models share a fundamental, overlooked bottleneck: the "reference gap". The model can "see" the content of an image, but when it builds a chain of thought in natural language, vague descriptions such as "the large red object on the left, near the center" cannot pin down a specific visual object in a dense scene. Attention drifts, and the model reaches wrong conclusions.

Until now, the mainstream response in the academic community has been to raise perceptual resolution, but the paper argues that seeing an object and being able to say unambiguously which object is meant are two different things.

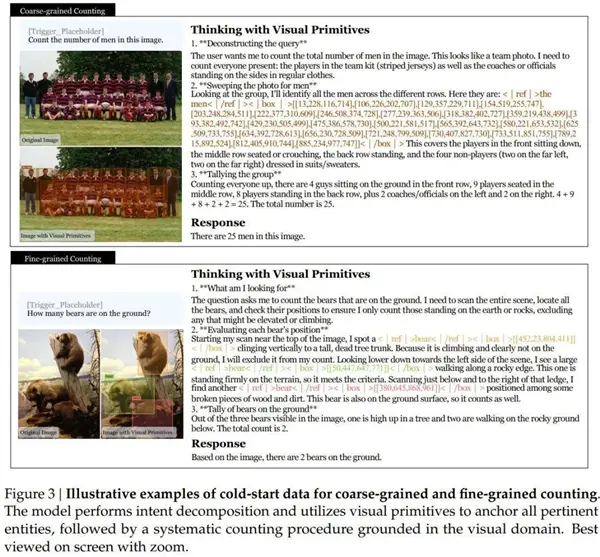

The core innovation of the model is to embed point coordinates and bounding boxes into the reasoning process itself, making them basic units of the chain of thought. Each time the model mentions a visual object during reasoning, it outputs that object's coordinates alongside the mention.

For example, "Find a bear [452, 23, 804, 411], climbing a tree, exclude it, then look down to the left and find another one [50, 447, 647, 771], standing on the edge of the rock, meeting the conditions." Coordinates are no longer answers marked after the fact, but spatial anchors to eliminate ambiguity during the reasoning process.
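As a rough illustration of how such coordinate-anchored reasoning could be consumed downstream, the sketch below pulls the boxes out of the example trace. The bracketed four-integer format is taken from the quoted example only; the function name `extract_boxes` and the regular expression are hypothetical, and the meaning of each number (corner order, pixel versus normalized units) is not specified here, so the code leaves the values uninterpreted.

```python
import re
from typing import List, Tuple

Box = Tuple[int, int, int, int]

# Assumption: a box appears as four comma-separated integers in square
# brackets, as in the quoted example. This is not a documented format.
BOX_PATTERN = re.compile(r"\[\s*(\d+)\s*,\s*(\d+)\s*,\s*(\d+)\s*,\s*(\d+)\s*\]")


def extract_boxes(trace: str) -> List[Box]:
    """Return every four-integer bracket group found in a reasoning trace."""
    boxes = []
    for match in BOX_PATTERN.finditer(trace):
        a, b, c, d = (int(g) for g in match.groups())
        boxes.append((a, b, c, d))
    return boxes


trace = (
    "Find a bear [452, 23, 804, 411], climbing a tree, exclude it, "
    "then look down to the left and find another one [50, 447, 647, 771], "
    "standing on the edge of the rock, meeting the conditions."
)

boxes = extract_boxes(trace)
for box in boxes:
    print(box)
```

Because every mention carries its own anchor, a consumer of the trace can tell the two bears apart without relying on phrases like "another one".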

At the architectural level, the model achieves 7056× visual compression. A 756×756 image yields 2916 image patch tokens after ViT processing, which are merged into 324 tokens through 3×3 spatial compression. The Compressed Sparse Attention (CSA) mechanism then compresses the KV cache by a further 4×, leaving only 81 visual KV entries (756 × 756 pixels / 81 entries = 7056).
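The figures in this pipeline can be checked with a few lines of arithmetic. The sketch below uses only the numbers stated above; the variable names are illustrative, and the 14-pixel patch size is an inference from 2916 = 54 × 54 under the assumption of a square patch grid, not a figure given in the text.

```python
import math

image_side = 756          # pixels per side
vit_tokens = 2916         # patch tokens after the ViT
merge_window = 3          # 3x3 spatial compression
kv_compression = 4        # further KV-cache compression via CSA

# Assumption: the patches form a square grid, so 2916 tokens = 54 x 54.
grid_side = math.isqrt(vit_tokens)
patch_side = image_side // grid_side          # inferred, not stated in the text

merged_tokens = vit_tokens // (merge_window * merge_window)
kv_entries = merged_tokens // kv_compression
pixels_per_entry = (image_side * image_side) // kv_entries

print(f"grid: {grid_side}x{grid_side}, inferred patch side: {patch_side}px")
print(f"tokens after 3x3 merge: {merged_tokens}")
print(f"visual KV entries: {kv_entries}")
print(f"pixels per KV entry: {pixels_per_entry}")
```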

For comparison, an image of the same size takes about 870 tokens with Claude Sonnet 4.6 and about 1100 tokens with Gemini-3-Flash.

For training data, the team selected approximately 31,700 high-quality data sources from nearly 100,000 object detection datasets and generated more than 40 million training samples, covering four types of tasks: counting, spatial reasoning, maze navigation, and path tracking.

Post-training follows a "specialize first, then unify" strategy. Two expert models, one for bounding boxes and one for point coordinates, are trained separately. After reinforcement learning optimization, they are merged into a single unified model through on-policy distillation.

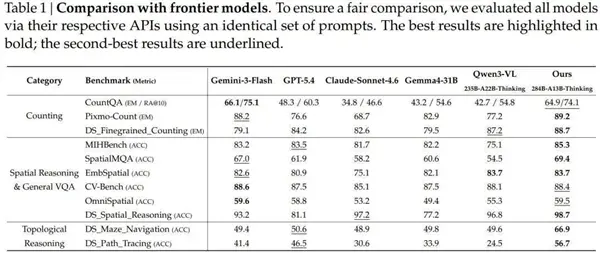

In the experiments, the model is compared with mainstream models such as Gemini-3-Flash, GPT-5.4, and Claude Sonnet 4.6 on 11 benchmarks.

On the counting task, the model reaches an exact-match score of 89.2% on Pixmo-Count, edging out Gemini-3-Flash at 88.2% and well ahead of GPT-5.4 at 76.6% and Claude Sonnet 4.6 at 68.7%.

The most telling gap appears in topological reasoning. On maze navigation the model scores 66.9%, against 50.6% for GPT-5.4, 49.4% for Gemini-3-Flash, and 48.9% for Claude Sonnet 4.6, a lead of roughly 16 to 18 percentage points. On path tracking it scores 56.7%, against 46.5% for GPT-5.4.
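The size of the lead on each topological task follows directly from the reported scores. The short sketch below recomputes the gaps in percentage points; it uses only the numbers quoted above, and the dictionary layout and variable names are illustrative.

```python
# Reported scores in percent, as quoted in the text.
maze_model_score = 66.9
maze_baselines = {
    "GPT-5.4": 50.6,
    "Gemini-3-Flash": 49.4,
    "Claude Sonnet 4.6": 48.9,
}

path_model_score = 56.7
path_gpt_score = 46.5

# Gap in percentage points, rounded to one decimal to avoid float noise.
maze_gaps = {
    name: round(maze_model_score - score, 1)
    for name, score in maze_baselines.items()
}
path_gap = round(path_model_score - path_gpt_score, 1)

for name, gap in maze_gaps.items():
    print(f"maze navigation lead over {name}: {gap} points")
print(f"path tracking lead over GPT-5.4: {path_gap} points")
```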

The paper also notes current limitations: the model needs explicit trigger words to enable the visual primitive mechanism, coordinate accuracy is limited in extremely fine-grained scenes, and cross-scene generalization still has room for improvement.