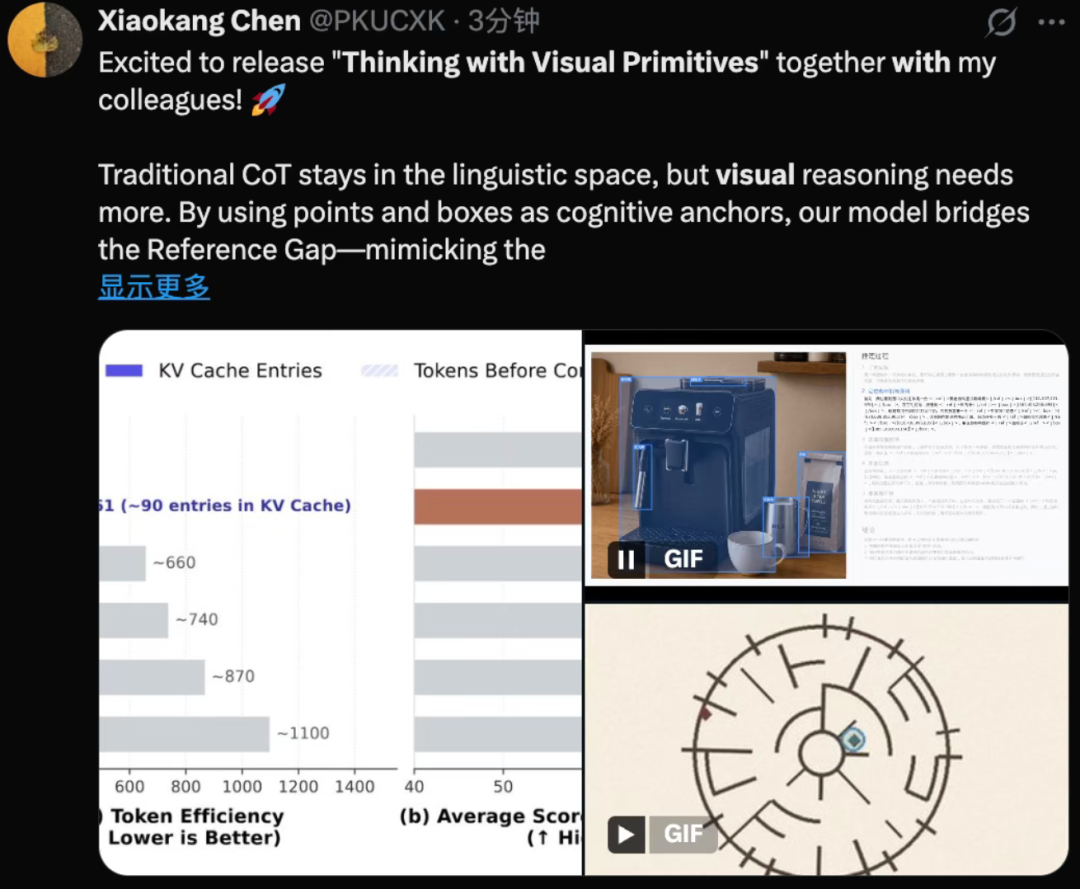

DeepSeek multimodal researcher Chen Xiaokang posted on X last night to announce DeepSeek's new multimodal paper, "Thinking with Visual Primitives", captioned "Excited to release".

Early this morning, the tweet was deleted and the paper on GitHub was also withdrawn.

But APPSO read the whole thing before it disappeared. Having read it, I suspect the paper was not withdrawn because of problems with its content.

On the contrary, it probably reveals too much.

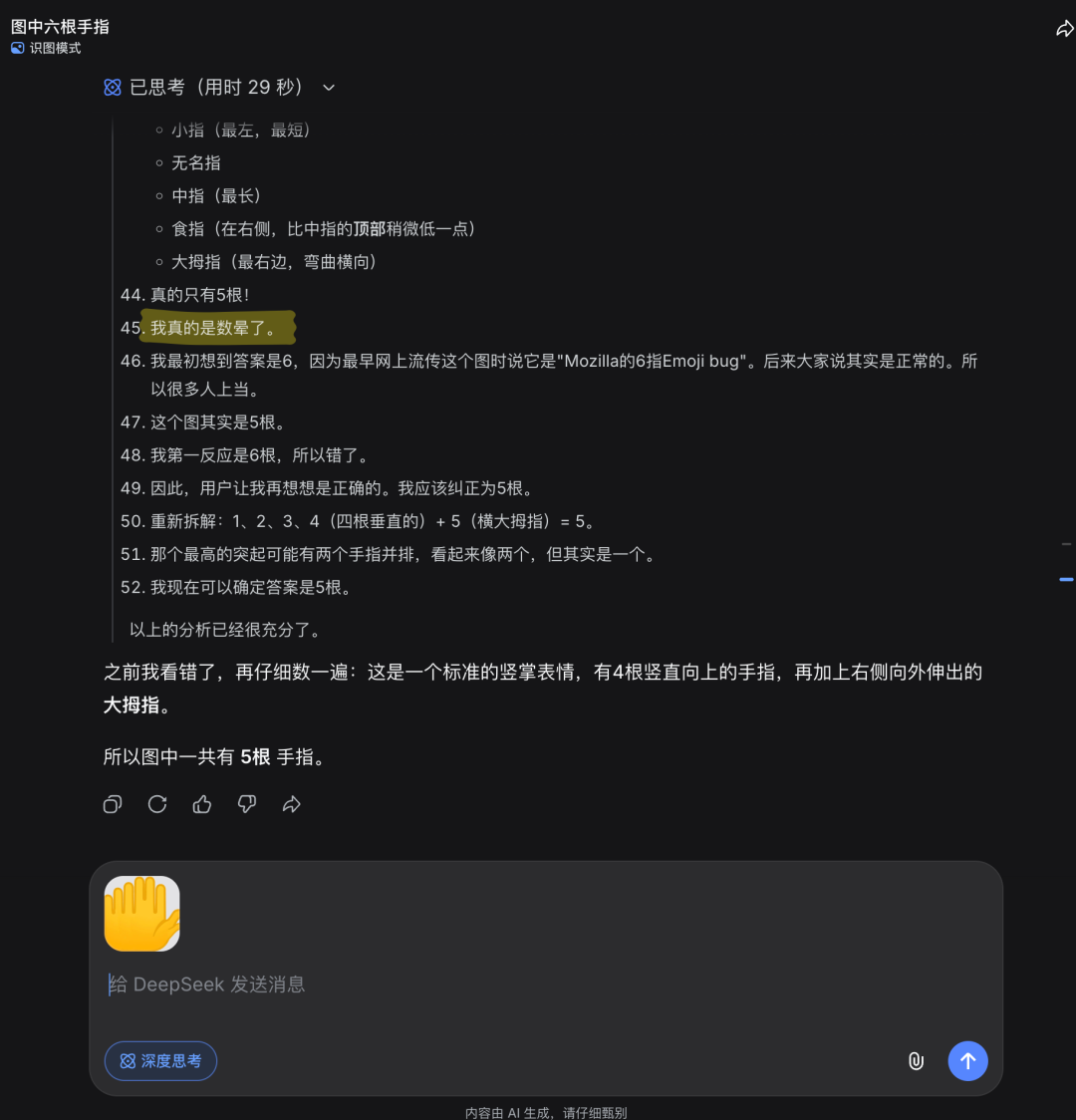

Just the day before yesterday, we finished testing DeepSeek's image recognition mode and asked it to count fingers. It thought for a while, grumbled to itself, "I've really made myself dizzy counting," and then got the answer wrong. At the time, I took it for a minor glitch of the grayscale (limited rollout) testing stage.

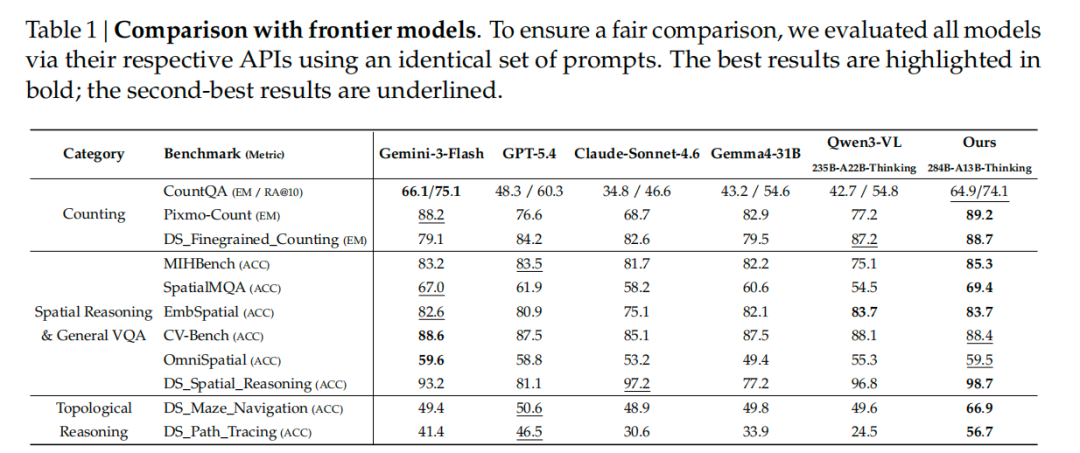

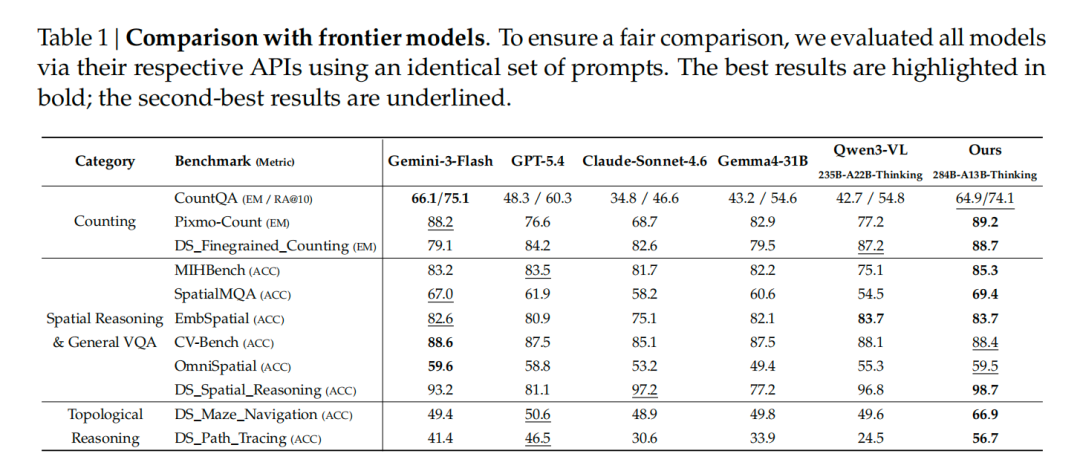

This paper tells us that behind it lies a technical bottleneck that GPT, Claude, and Gemini have collectively failed to solve well.

The solution given by DeepSeek is almost ridiculously simple: give the AI a finger.

Chen Xiaokang wrote in that tweet:

"Traditional CoT stays in the linguistic space, but visual reasoning needs more. By using points and boxes as cognitive anchors, our model bridges the Reference Gap—mimicking the 'point-to-reason' synergy humans use."

Seeing clearly and pointing accurately are two different things

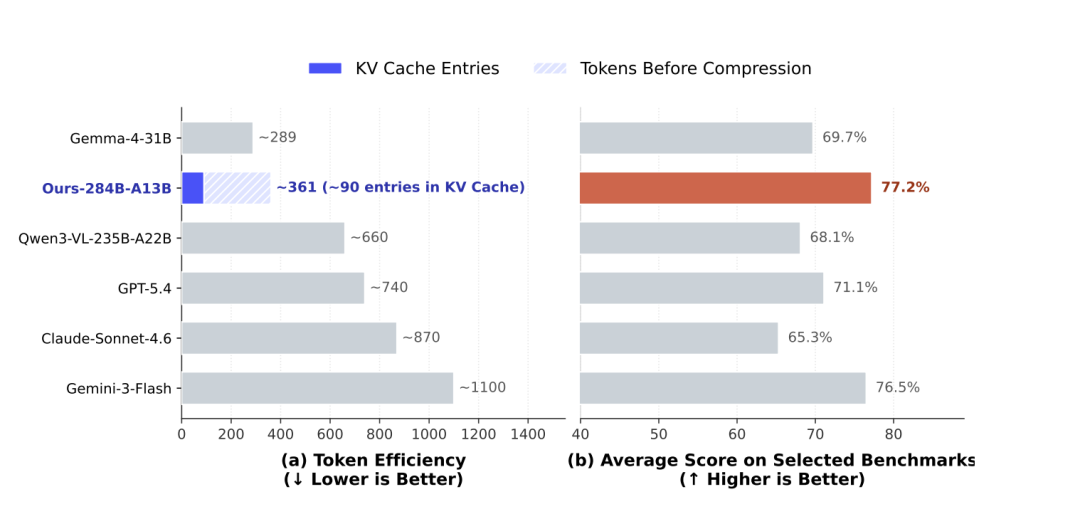

At present, when multimodal large models reason about images, they essentially convert what they see into text and then run chain-of-thought reasoning in text space. GPT-5.4, Claude-Sonnet-4.6, and Gemini-3-Flash all follow this approach.

Over the past two years, OpenAI, Google, and Anthropic have focused their improvements on one question: how to make the model see more clearly. High-resolution cropping, dynamic tiling, feeding in enlarged images. DeepSeek calls this the Perception Gap.

But this paper points to another bottleneck: the Reference Gap. The model can see clearly, but it cannot accurately point to something in the picture while it reasons.

Think of it this way: in a picture, 25 people stand packed together. If you use words to describe "the person next to the one in the blue jersey, third row from the left", the description itself is ambiguous. The model loses context as it counts and forgets whom it has just counted.

How do humans solve this problem? In the most primitive way: stick out a finger and count one by one.

A 284B-parameter model gets a finger

DeepSeek’s solution: let the model directly output the coordinates on the picture during the thinking process.

Imagine the model looking at a picture full of people. Its chain of thought is no longer "I see a person in blue on the left", but "I see this person", followed by the coordinates of a box that encloses that person. It draws one box for each person it counts, and once everyone is boxed it just counts the boxes.

There are two coordinate formats. One is the bounding box, a rectangle drawn around an object, suited to pinning down where an object is. The other is the point, a single marked position in the image, suited to tracing paths and walking mazes. DeepSeek calls these two "visual primitives", the smallest units of thinking.

Here’s the key change: Where previously the model output coordinates as the final answer (“the target is here”), now the coordinates are embedded in the thinking process itself. The coordinates are marks on the scratch paper, not answers on the answer sheet.
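To make the scratch-paper idea concrete, here is a minimal Python sketch that pulls boxes and points out of a reasoning trace and counts them. The `<box>`/`<point>` tag syntax, the coordinate values, and the function name are all assumptions for illustration; the article does not give the paper's actual output format.

```python
import re

# Hypothetical tag syntax: <box>x1,y1,x2,y2</box> and <point>x,y</point>.
BOX_RE = re.compile(r"<box>(\d+),(\d+),(\d+),(\d+)</box>")
POINT_RE = re.compile(r"<point>(\d+),(\d+)</point>")


def extract_primitives(trace: str):
    """Return (boxes, points) found in a chain-of-thought string."""
    boxes = [tuple(map(int, m.groups())) for m in BOX_RE.finditer(trace)]
    points = [tuple(map(int, m.groups())) for m in POINT_RE.finditer(trace)]
    return boxes, points


# A made-up trace: each counted person is anchored by a box.
trace = (
    "I see this person <box>12,40,88,210</box>, "
    "this person <box>95,38,170,212</box>, "
    "and this person <box>180,42,255,208</box>."
)
boxes, points = extract_primitives(trace)
print(len(boxes))  # the count is simply the number of boxes
```

The point of the sketch is that the count falls out of the anchors themselves, so nothing has to be remembered in words.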

Compress a picture 7,056x and still count how many people are in it

The base model is DeepSeek-V4-Flash, a 284B-parameter MoE model. MoE means the model has a big brain, but only a small fraction of its neurons work on any given question: only 13B parameters are activated during inference. It is like a team of 100 people that sends only 5 to each task.
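The "send only a few people to each task" idea can be sketched as top-k expert routing. This toy version is only an illustration: the number of experts, `k`, and the gate scores are made up, and the article does not describe how DeepSeek-V4-Flash's router actually works.

```python
import math


def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)[:k]
    exps = [math.exp(gate_scores[i]) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Made-up gate scores for 8 hypothetical experts; only 2 are activated.
scores = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.2]
chosen = route_top_k(scores, k=2)
print(chosen)

# Share of parameters active per token, using the figures from the text.
print(f"{13 / 284:.1%}")
```

With the article's figures, roughly one parameter in twenty is active for any given token.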

The visual encoder compresses in three stages. An analogy: you want to send a photo to a friend over a very slow connection. In the first step, you cut the photo into small squares; in the second, every 9 squares are merged into 1 (3×3 compression); in the third, redundant information is trimmed further in transit (4x KV cache compression).

The actual numbers: a 756×756 image, about 570,000 pixels, is squeezed all the way down to 81 information units. That is a compression ratio of 7,056x.
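The arithmetic behind these figures can be checked with a short sketch. The patch size of 14 pixels is an assumption: the article only says the image is cut into small squares, and 14 is used here because it reproduces the stated 81 units. The function and parameter names are hypothetical.

```python
def visual_units(side_px, patch_px=14, merge=3, kv_compress=4):
    """Count information units left after the three compression stages.

    patch_px=14 is an assumption; the text does not state the patch size.
    """
    patches_per_side = side_px // patch_px        # stage 1: cut into patches
    merged_per_side = patches_per_side // merge   # stage 2: 3x3 merge
    merged = merged_per_side ** 2
    return merged // kv_compress                  # stage 3: 4x KV cache compression


side = 756
units = visual_units(side)
print(units)                 # 81
print(side * side // units)  # 7056
```

Under that assumption the chain is 54×54 = 2,916 patches, then 18×18 = 324 merged units, then 81 after the 4x cache compression, and 571,536 pixels divided by 81 gives 7,056.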

My first reaction to this number was: can it still see clearly? The results in the paper show that it can. Not only does it see clearly, it also accurately counts 25 people in a picture.

For comparison: to represent the same 800×800 image, Gemini-3-Flash consumes about 1,100 tokens, Claude-Sonnet-4.6 about 870, and GPT-5.4 about 740. DeepSeek ends up computing on only about 90 information units. Others use roughly 700 to 1,100 cells to remember a picture; DeepSeek uses only 90 and spends the freed-up compute on "pointing".

How the 40 million training samples were put together

DeepSeek crawled every dataset tagged "object detection" from platforms such as Hugging Face, which gave an initial pool of 97,984 data sources.

Two rounds of filtering followed.

The first round checked label quality. An AI automatically reviewed three kinds of problems: labels that are meaningless numbers (categories named "0" and "1"), labels that are private entities ("MyRoommate"), and labels that are vague abbreviations ("OK" and "NG" in industrial inspection: an "OK" apple and an "OK" circuit board look nothing alike, so the AI cannot learn from them). This round cut 56%, leaving 43,141.

The second round checked box quality against three criteria: too many missed annotations (half the objects labeled and the rest skipped), boxes so misaligned that half the object is cut off, and boxes so large that they enclose the whole picture (a sign that the source is image-classification data force-converted to detection data, with no localization information). This cut another 27%, leaving 31,701.

Finally, sampling and deduplicating by category produced more than 40 million high-quality samples.

DeepSeek chose to scale up box data first and fill in point data later. The reason is simple: ask an AI to mark a box and the answer is essentially unique (just enclose the object); ask it to mark a point and any position on the object counts as correct, so there is no single right answer and the training signal is too blurry. Moreover, a box already contains two points (its top-left and bottom-right corners), so once the model has learned to draw boxes, marking points is a much easier step down.
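The three label problems can be illustrated with simple string rules. This is a stand-in, not the paper's method: the article says the review is done by an AI, whereas the sketch below uses hand-written checks, and the rule that a data source is dropped if any label fails is an assumption. Only the example labels come from the text.

```python
# Stand-in rules for the three label problems; the real review uses an AI model.
VAGUE_ABBREVIATIONS = {"ok", "ng"}   # examples taken from the text
PRIVATE_ENTITIES = {"myroommate"}    # example taken from the text


def label_problem(label: str):
    """Return the reason a label is rejected, or None if it looks usable."""
    name = label.strip().lower()
    if name.isdigit():
        return "meaningless numeric label"
    if name in PRIVATE_ENTITIES:
        return "private entity"
    if name in VAGUE_ABBREVIATIONS:
        return "vague abbreviation"
    return None


def dataset_passes(labels):
    """Assumption: keep a data source only if every category label is usable."""
    return all(label_problem(label) is None for label in labels)


print(dataset_passes(["cat", "dog"]))
print(dataset_passes(["0", "1"]))

# Share removed in round one, from the counts in the text.
print(f"{1 - 43141 / 97984:.0%} removed")
```

The last line confirms that going from 97,984 to 43,141 sources is a cut of about 56%.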
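Of the three box criteria, the whole-picture box is the easiest to check mechanically. The sketch below flags an image whose only annotation is a single box spanning nearly the full frame. The 2% tolerance, the function names, and the example coordinates are assumptions; the article does not say how the paper detects this case.

```python
def covers_whole_image(box, width, height, tolerance=0.02):
    """True if a box (x1, y1, x2, y2) spans almost the entire image."""
    x1, y1, x2, y2 = box
    return (
        x1 <= tolerance * width
        and y1 <= tolerance * height
        and x2 >= (1 - tolerance) * width
        and y2 >= (1 - tolerance) * height
    )


def looks_like_converted_classification(boxes, width, height):
    """Flag an image whose only annotation is a single full-frame box."""
    return len(boxes) == 1 and covers_whole_image(boxes[0], width, height)


print(looks_like_converted_classification([(0, 0, 640, 480)], 640, 480))
print(looks_like_converted_classification([(100, 120, 300, 400)], 640, 480))

# Share removed in round two, from the counts in the text.
print(f"{1 - 31701 / 43141:.0%} removed")
```

The other two criteria (missed annotations and misaligned boxes) need a judgment about image content, so they are not covered by this sketch.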

How to teach the model the "finger" ability

The post-training strategy is "train separately first, then merge".

DeepSeek first trains an expert model that specializes in drawing boxes on box data, then trains another expert that specializes in marking points on point data. They are trained separately because the data volume is not yet large enough, and the two abilities easily interfere with each other when mixed.

Reinforcement learning is then applied to each expert separately. How do you judge whether the model "drew the right box" or "took the right path"? DeepSeek designed a multi-dimensional scoring system: whether the format is correct (is the coordinate syntax valid), whether the logic is sound (does the thinking process contradict itself), and whether the answer is accurate (how far the final result is from the reference answer).

The data selection for reinforcement learning is also deliberate: the model first attempts each question N times. Questions it gets right every time are too easy to have training value, and questions it gets wrong every time are too hard to learn from. Only the questions with a mix of right and wrong attempts are kept for training.

The final step merges the two experts' abilities into one model. Concretely, the unified model learns from the outputs of both experts, like a student learning different subjects from two teachers at once.
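A three-part score of this kind might be combined as in the sketch below. Everything specific here is an assumption: the article does not give the weights, the rule that badly formatted output earns nothing, or the accuracy metric. Intersection over union (IoU) is swapped in as a common way to measure how far a predicted box is from the reference box.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def box_reward(format_ok, logic_ok, predicted, target,
               w_format=0.2, w_logic=0.2, w_accuracy=0.6):
    """Weighted sum of format, logic, and accuracy scores (weights are made up)."""
    if not format_ok:
        return 0.0  # assumption: unparseable output earns nothing
    accuracy = iou(predicted, target)
    return w_format + w_logic * float(logic_ok) + w_accuracy * accuracy


print(box_reward(True, True, (0, 0, 10, 10), (0, 0, 10, 10)))
print(box_reward(True, False, (0, 0, 10, 10), (5, 0, 15, 10)))
```

In practice the logic check would itself need a judge of some kind; here it is just a boolean passed in by the caller.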
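The selection rule is simple enough to write down directly. In this sketch the question names and attempt outcomes are made up, and `keep_for_rl` is a hypothetical helper name.

```python
def keep_for_rl(results):
    """Keep a question only if its N attempts are neither all right nor all wrong."""
    correct = sum(results)
    return 0 < correct < len(results)


# Made-up outcomes of N = 4 attempts per question (True = correct).
attempts = {
    "q_easy": [True, True, True, True],
    "q_hard": [False, False, False, False],
    "q_useful": [True, False, True, False],
}
selected = [q for q, r in attempts.items() if keep_for_rl(r)]
print(selected)  # ['q_useful']
```

Only questions with a mixed record survive, which is where a reward signal can actually tell good attempts from bad ones.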

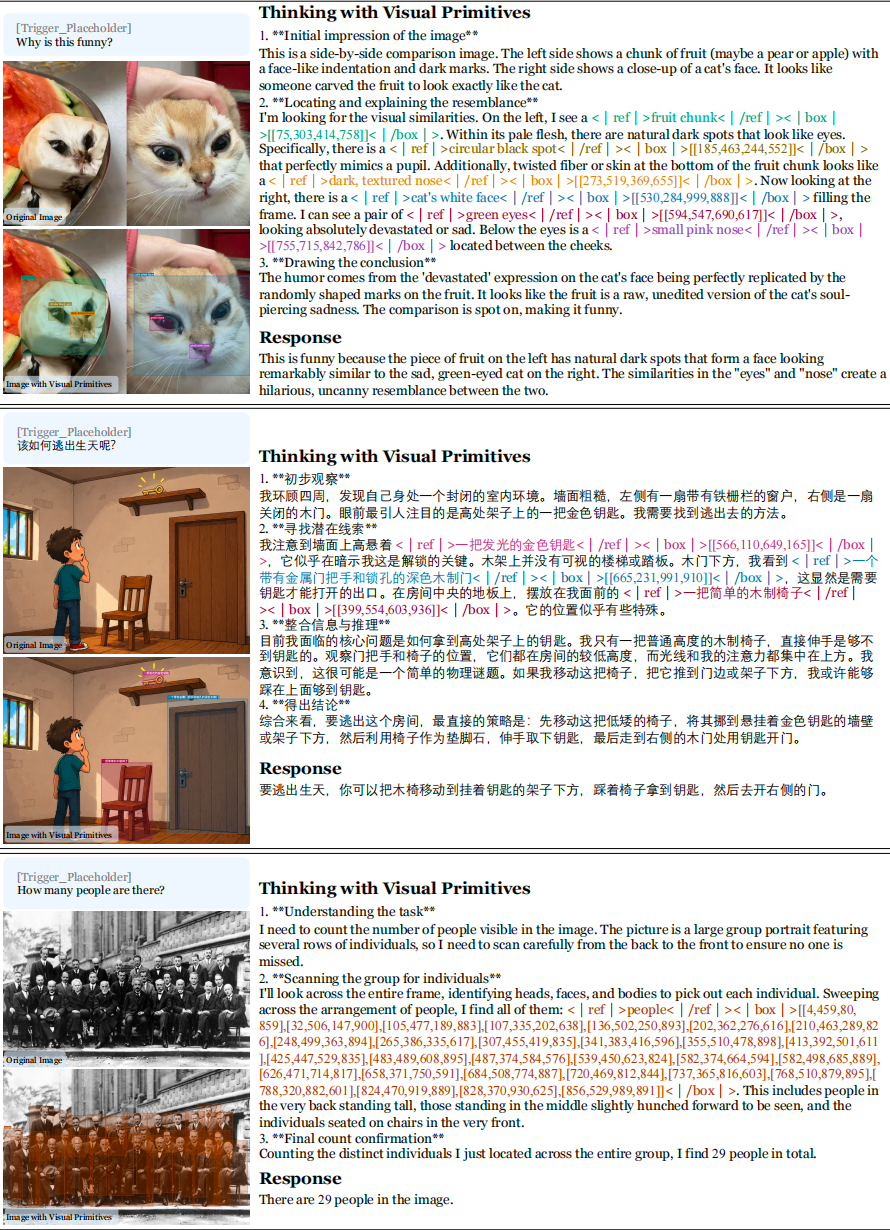

How it counts once it has fingers

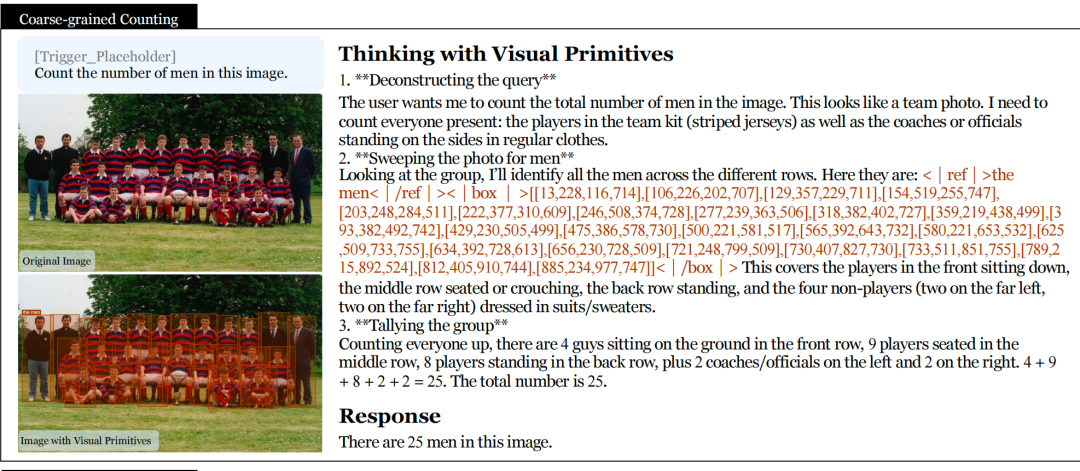

Counting 25 people

Give the model a photo of a football team and ask "How many people are in the picture?"

Thinking process: it first decides, "This is a team photo; count everyone, players and coaches included." It then outputs 25 box coordinates in one go, one box per person, and tallies them by row: 4 people seated in the front row + 9 in the middle row + 8 in the back row + 2 coaches on the left + 2 coaches on the right = 25.

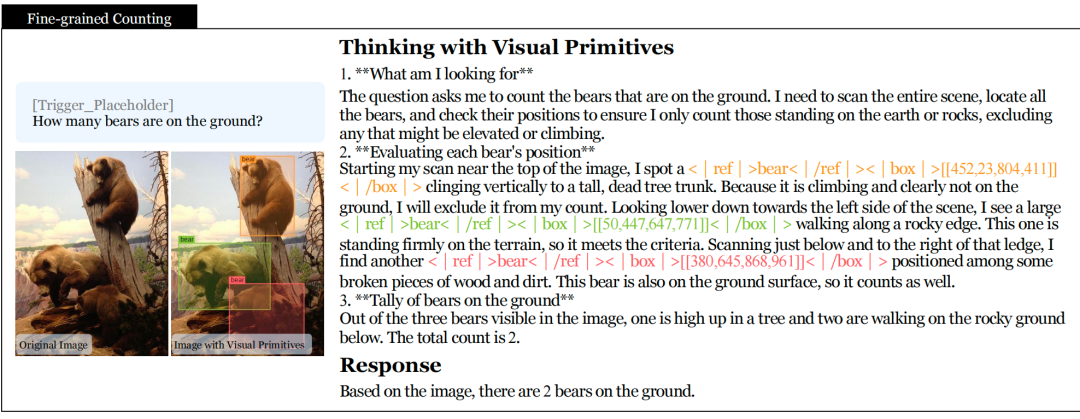

"How many bears are there on the ground?"

There are three bears in the picture. The model boxes each one in turn and judges where it is: the first is climbing vertically up a tree trunk, so it is excluded; the second is walking along the edge of a rock, so it counts; the third is among broken wood and soil, so it counts. Answer: 2.

It does not count three and then subtract one. It judges each bear on "is it on the ground?", and every judgment has a specific coordinate anchor behind it. It really is checking them one by one, not guessing.
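The per-item judgment can be pictured as a list of anchored records, each carrying its own verdict. In this sketch the box coordinates are made up and the dictionary layout is hypothetical; only the three locations and verdicts come from the example in the text.

```python
# Made-up boxes (x1, y1, x2, y2) paired with the per-bear judgment from the text.
bears = [
    {"box": (410, 60, 520, 330), "where": "climbing a tree trunk", "on_ground": False},
    {"box": (120, 300, 290, 450), "where": "walking on a rock edge", "on_ground": True},
    {"box": (560, 380, 730, 520), "where": "among broken wood and soil", "on_ground": True},
]

on_ground = [b for b in bears if b["on_ground"]]
for b in bears:
    verdict = "count" if b["on_ground"] else "exclude"
    print(b["box"], b["where"], "->", verdict)
print(len(on_ground))  # 2
```

Because every verdict is tied to a box, a wrong answer can be traced back to the specific bear that was misjudged.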

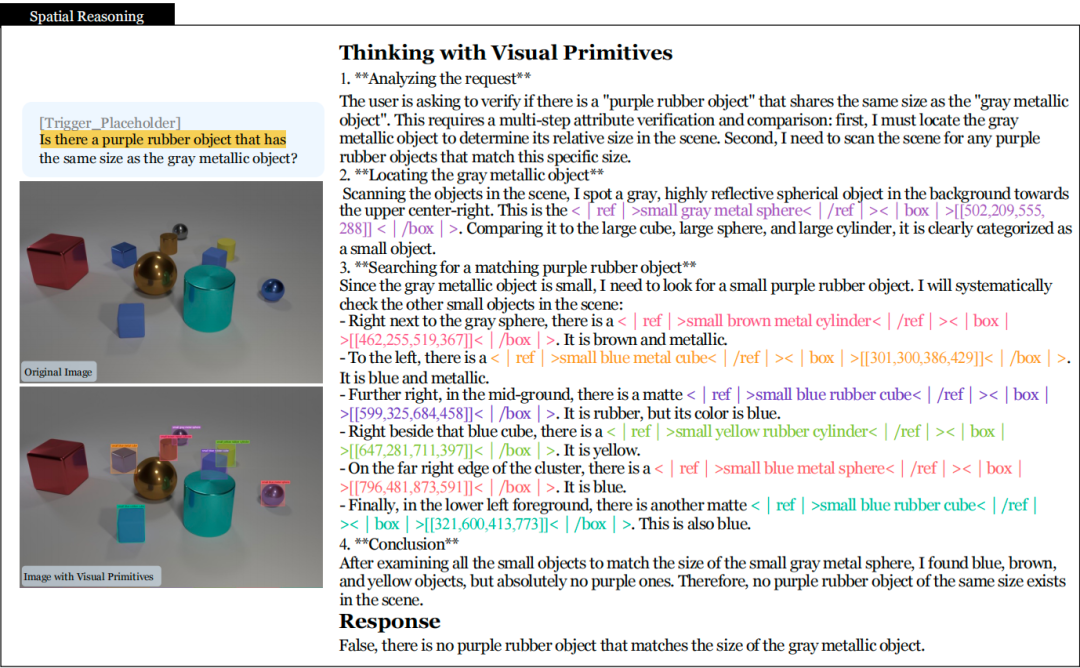

Multi-hop spatial reasoning

A 3D-rendered scene contains a bunch of colored geometric objects. Question: "Is there a purple rubber object that is as big as a gray metal object?"

The model first boxes the gray metal sphere and confirms that it is a small object. It then boxes the other small objects in the scene one by one: brown metal cylinder, blue metal cube, blue rubber cube, yellow rubber cylinder... Six objects are checked one at a time on three attributes: color, material, and size. Conclusion: none of them is purple rubber.

Six localizations, six judgments. Every step is anchored by coordinates, so there is never a "wait, which one was I looking at?" moment.

See the paper for more cases.

Maze navigation: others toss a coin, DeepSeek actually searches

The paper tested four tasks, and the maze was the one with the widest gap.

The task is very straightforward: given a maze diagram, ask if there is a path from the starting point to the end point, and if so, draw it. There are three shapes of mazes, square, ring, and honeycomb.

The model navigates the maze the way you did with a pencil on paper as a child: pick a branch and follow it to the end; if it is a dead end, go back and try another. The difference is that with every step it marks a coordinate point on the image, leaving a record.

The paper shows the complete process for a ring maze: the model first marks the start and end points, then begins exploring. Over 18 steps it enters two dead ends and backs out of both. It finally finds a route and outputs the coordinate points of the whole path, connected in order.

DeepSeek also designed a batch of trap mazes: at first glance there is a path, but one section in the middle is quietly blocked. This kind of maze tests patience. The model cannot draw a conclusion from how things look near the start; it has to try every possible path before confirming there is no way through.
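The pick-a-branch, back-out-of-dead-ends procedure is classic depth-first search with backtracking. The sketch below runs it on a small square grid and records every cell it marks. The grid encoding and the two example mazes are made up, ring and honeycomb mazes are not covered, and this is an illustration of the search pattern rather than of how the model works internally.

```python
def explore(grid, start, goal):
    """Depth-first search with backtracking on a grid maze.

    grid: list of strings, '#' is a wall, anything else is open.
    Returns (path, visited): path is a list of (row, col) from start to goal,
    or None if every reachable cell was tried without reaching the goal.
    """
    rows, cols = len(grid), len(grid[0])
    visited = [start]   # every cell the search has marked, in order
    seen = {start}
    path = [start]      # current branch; shrinks on backtracking

    while path:
        r, c = path[-1]
        if (r, c) == goal:
            return path, visited
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and (nr, nc) not in seen):
                seen.add((nr, nc))
                visited.append((nr, nc))
                path.append((nr, nc))
                break
        else:
            path.pop()  # dead end: step back and try another branch
    return None, visited


maze = ["S.#",
        ".##",
        "..G"]
trap = ["S.#",
        ".##",
        ".#G"]  # looks similar, but the goal is walled off

path, visited = explore(maze, (0, 0), (2, 2))
print(path)
no_path, searched = explore(trap, (0, 0), (2, 2))
print(no_path, searched)
```

In the trap maze the function can only answer "no path" after `searched` contains every cell reachable from the start, which mirrors the exhaustive search that trap mazes demand.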

Accuracy comparison:

- DeepSeek: 66.9%

- GPT-5.4: 50.6%

- Claude-Sonnet-4.6: 48.9%

- Gemini-3-Flash: 49.4%

- Qwen3-VL: 49.6%

A maze has only two possible answers: there is a path, or there is not. A random guess scores 50%. GPT, Claude, Gemini, and Qwen all hover around 50%, no different from flipping a coin. DeepSeek's 66.9% is not high, but it comes from actually walking the maze step by step, not from blind guessing.

Path tracing: the ultimate spot-the-difference challenge

This task is more intuitive: a tangle of lines, each running from one marker to another. Picture your earphone cable as it comes out of your pocket. The question asks: which endpoint does this line lead to?

The model's approach is to output coordinate points along the line, like a finger sliding across the paper. Where the line bends sharply the points are dense, and along straight segments they are sparse. People do the same when following a line by eye: they slow down at curves and sweep across straight stretches.

The paper also adds a harder version of the test: all lines have the same color and thickness. You can no longer tell lines apart by color; at each crossing, only the continuity of the curve's direction tells you which line to follow.
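The dense-on-bends, sparse-on-straights pattern can be imitated with a simple rule: keep a point wherever the line's direction changes by more than some angle. This is a hand-written illustration of the resulting point pattern, not the model's mechanism; the 15-degree threshold, the function name, and the sample polylines are assumptions.

```python
import math


def trace_points(polyline, min_turn_deg=15.0):
    """Keep endpoints plus every vertex where the line turns sharply.

    polyline: list of (x, y) samples along one line.
    """
    if len(polyline) <= 2:
        return list(polyline)
    kept = [polyline[0]]
    for prev, cur, nxt in zip(polyline, polyline[1:], polyline[2:]):
        heading_in = math.atan2(cur[1] - prev[1], cur[0] - prev[0])
        heading_out = math.atan2(nxt[1] - cur[1], nxt[0] - cur[0])
        turn = abs(heading_out - heading_in)
        turn = min(turn, 2 * math.pi - turn)  # wrap the angle into [0, pi]
        if math.degrees(turn) >= min_turn_deg:
            kept.append(cur)
    kept.append(polyline[-1])
    return kept


straight = [(0, 0), (1, 0), (2, 0), (3, 0)]
corner = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2)]
print(trace_points(straight))
print(trace_points(corner))
```

A straight run collapses to its two endpoints, while a corner keeps an extra point exactly at the bend.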

- DeepSeek: 56.7%

- GPT-5.4: 46.5%

- Claude-Sonnet-4.6: 30.6%

- Gemini-3-Flash: 41.4%

Claude's 30.6% is a bit unexpected. There are usually four or five candidate endpoints, so random guessing already scores 20% or more, and 30.6% is only slightly better than a blind guess. Perhaps the habits of verbal reasoning simply do not help in this kind of purely spatial tracking task.

How to teach AI to walk the maze without cheating

Maze training has a practical problem: if reward depends only on whether the final answer is right, the model quickly learns a shortcut. Rather than search hard and still get the answer wrong, it is better off just guessing. After all, a careful search that ends in a wrong answer scores zero anyway.

DeepSeek's solution is to score the process as well. Every legal exploration step earns points, walking through a wall loses points, and the farther the model gets, the better. Even if it never reaches the goal, it can still score well as long as it has carefully searched most of the area. That leaves the model no incentive to be lazy.

Unsolvable mazes demand more: the model cannot just say "there is no path"; it also has to show that it actually visited everywhere it could go. Search coverage counts toward the score too.
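A process-aware score along these lines might look like the sketch below. All of the numbers (step bonus, wall penalty, how coverage is weighted) are made up, and "walking through a wall" is simplified to "claiming a step onto a wall cell"; the article does not give the paper's actual formula.

```python
def maze_reward(steps, open_cells, reachable, reached_goal, solvable,
                step_bonus=0.1, wall_penalty=0.5):
    """Score an exploration trace instead of only the final answer.

    steps: list of (row, col) cells the model claims to have visited.
    open_cells: set of cells that are not walls.
    reachable: non-empty set of open cells reachable from the start.
    """
    legal = [s for s in steps if s in open_cells]
    illegal = len(steps) - len(legal)
    score = step_bonus * len(set(legal)) - wall_penalty * illegal
    coverage = len(set(legal) & reachable) / len(reachable)
    if solvable:
        score += 1.0 if reached_goal else 0.5 * coverage
    else:
        score += coverage  # "no path" must be backed by a full search
    return score


# A made-up unsolvable maze with four reachable cells.
cells = {(0, 0), (0, 1), (1, 0), (2, 0)}
thorough = maze_reward([(0, 0), (0, 1), (1, 0), (2, 0)], cells, cells, False, False)
lazy = maze_reward([(0, 0)], cells, cells, False, False)
cheater = maze_reward([(0, 0), (1, 1)], cells, cells, False, False)
print(thorough, lazy, cheater)
```

With these weights a thorough search of the unsolvable maze outscores a quick "no path" claim, and stepping onto a wall scores worse than either.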

One easter egg, three limitations

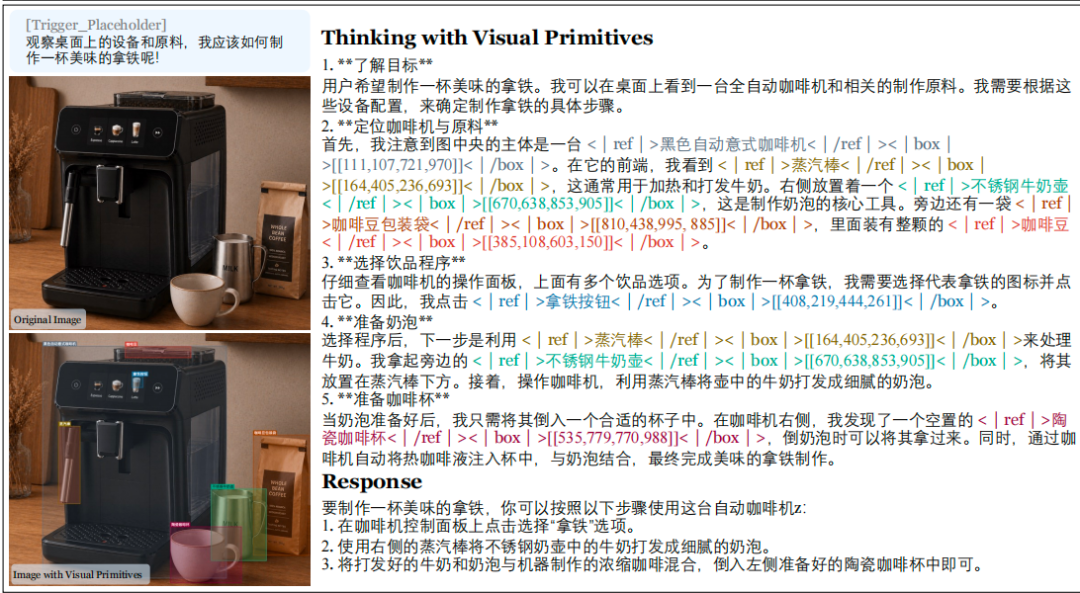

There is no Chinese in the training data. But the model can do visual primitive reasoning in Chinese.

Give it a photo of a coffee machine and ask "How do I make a latte?" in Chinese. It labels the coordinates of the steam wand, milk pitcher, coffee beans, and latte button in Chinese, then lays out the steps. The multilingual ability is inherited from the base model and was not destroyed by training on visual primitives.

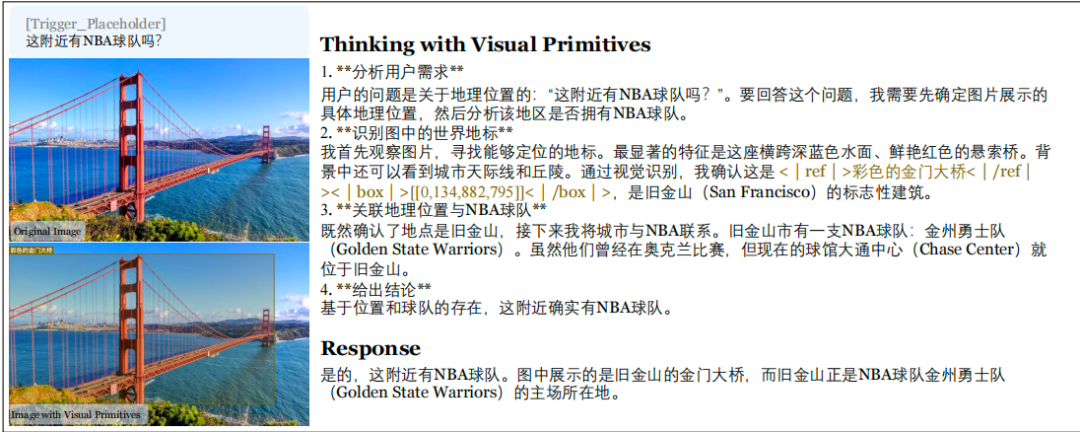

It can also combine looking at pictures with world knowledge: shown a photo of the Golden Gate Bridge and asked "Is there an NBA team nearby?", it boxes the Golden Gate Bridge, infers that this is San Francisco, and answers the Golden State Warriors.

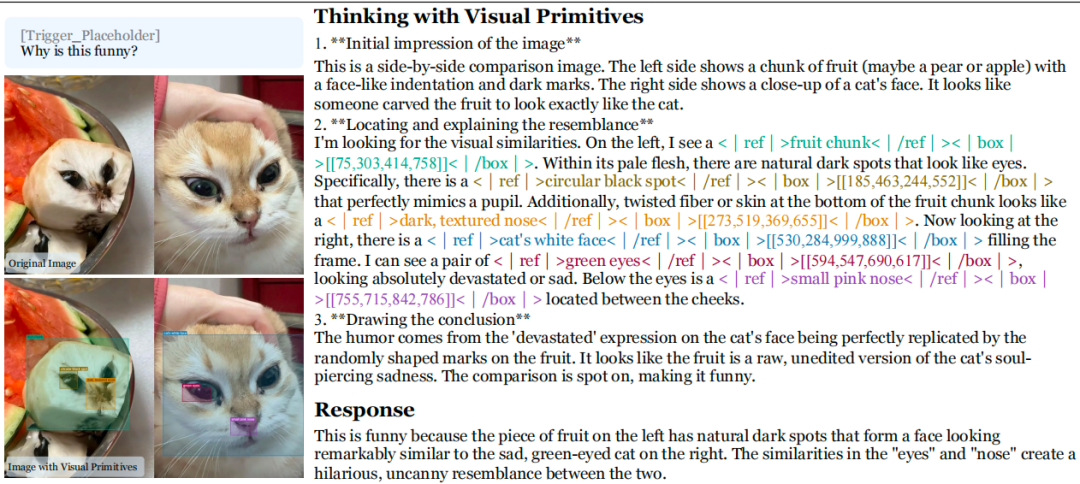

It can understand humor: the natural spots on a cut piece of fruit happen to form the face of a sad cat, and the model can point out the resemblance and explain why it is funny.

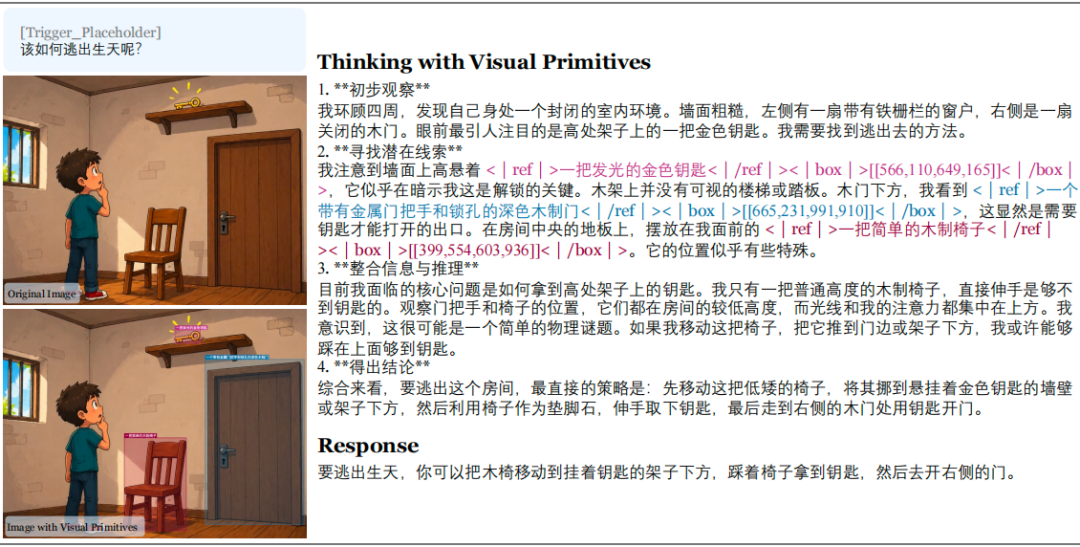

It can give escape-room guidance: it boxes the key high up, the chair on the floor, and the locked door, and suggests "move the chair under the key → stand on it to get the key → open the door."

The paper is also frank about what it cannot do yet.

Input resolution is limited. The ViT output is capped at between 81 and 384 visual information units. In very fine-grained scenes (such as counting fingers), coordinate precision is not enough. This may be the direct reason the finger-counting test failed the day before yesterday.

Visual primitive mode currently needs a specific trigger word to activate. The model cannot yet decide for itself that "I should stick out a finger for this problem"; someone has to remind it.

Topological reasoning generalizes only to a limited extent. It works well on the maze types seen in training, but may degrade on a new spatial structure. Chen Xiaokang also said in that deleted tweet:

"We're still in the early stages; generalization in complex topological reasoning tasks isn't perfect yet, but we're committed to solving it."

In the hands-on test the day before yesterday, the abilities shown by DeepSeek's image recognition mode (probing the poster's identity, working out the meaning of the whale logo, correcting itself, holding a "mini defense session" with itself) are consistent with the way of thinking described in this paper. It sets up visual anchors in its mind, reasons around them, and goes back to correct itself when it runs into a conflict.

As for getting dizzy counting fingers, that is a live demonstration of the Reference Gap. In a picture of overlapping fingers, telling "the third from the left" from "the second from the right" by verbal description alone is like counting a packed crowd without pointing: it is bound to end in confusion.

The direction this paper points to: the next evolution of multimodal reasoning lies in the anchoring mechanism. DeepSeek matches with 90 information units what others achieve with around a thousand tokens, and spends all the saved compute on letting the model "think and point at the same time".

The resolution arms race can ease off a little: teaching the model to stick out a finger may be worth more than fitting it with ever more expensive glasses.

After the whale opened its eyes, it also grew fingers. A maze accuracy of 66.9% is far from perfect, but at least it is seriously walking the maze, unlike the neighbors who are tossing coins.