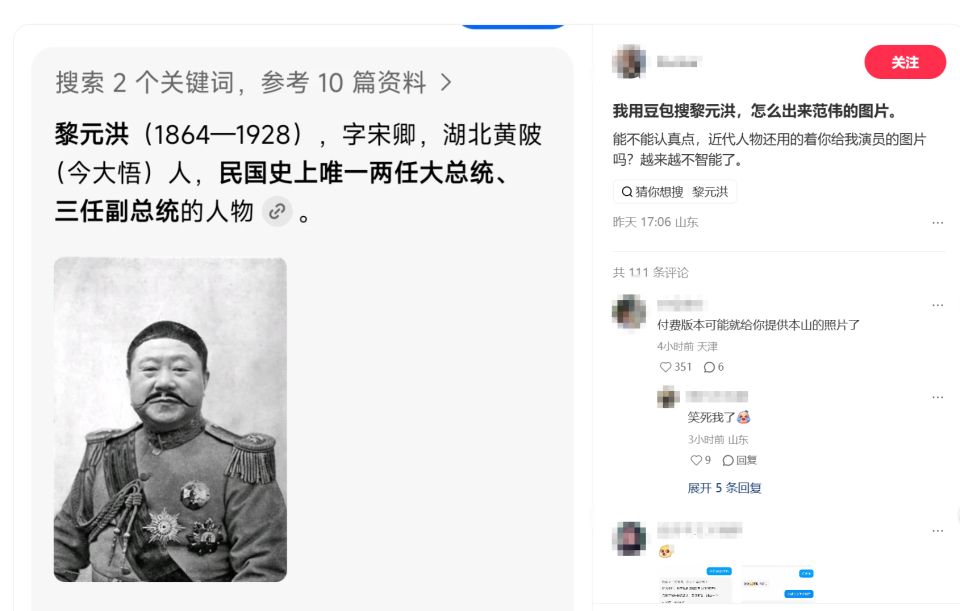

Recently, some netizens who used Doubao to search for Li Yuanhong, a figure from the Republic of China era, were shown a Photoshopped image of actor Fan Wei. The mix-up may seem amusing, but it exposes real weaknesses in the accuracy of AI image recognition and information retrieval. Many netizens complained that the AI was not rigorous enough: a search for a serious historical figure returned an entertainment-related error, undermining the accuracy of the information users received.

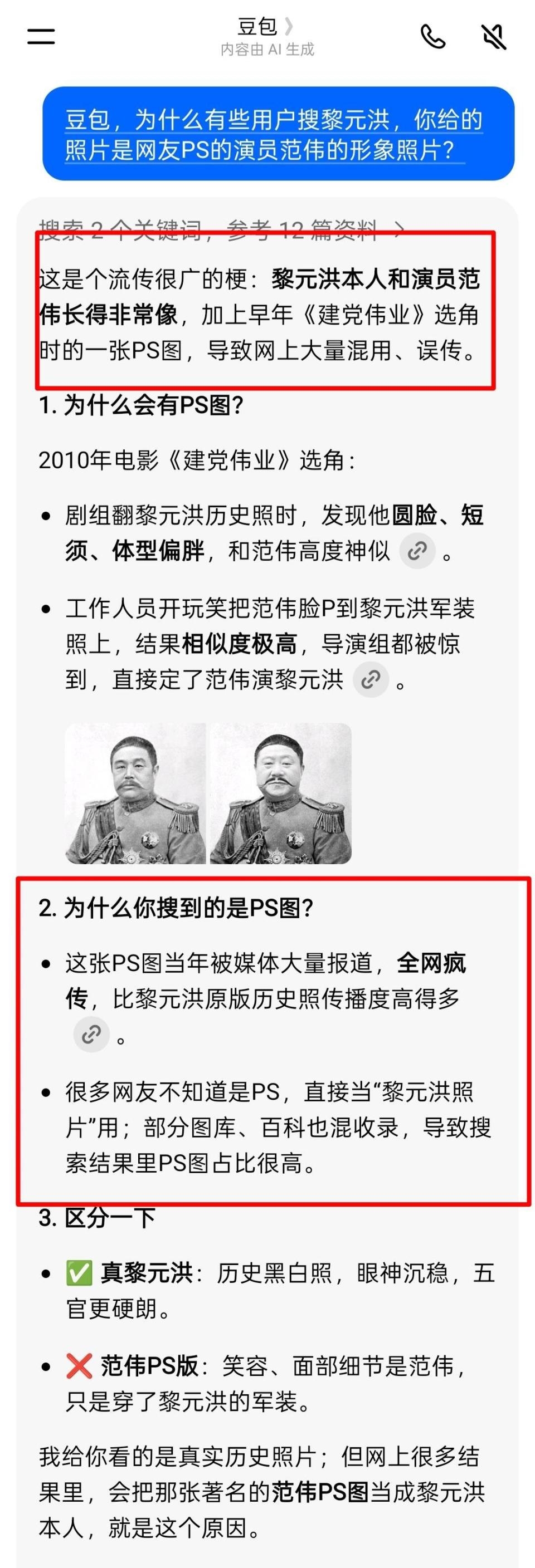

According to the official explanation, the error stemmed from a misconception circulating online. Li Yuanhong and Fan Wei look strikingly alike, and a spoof Photoshopped picture made in 2010, during casting for "The Founding of the Party", was widely shared, spreading far more than the real historical photos. Some photo galleries and encyclopedias mistakenly included it, so this highly popular composite image was ranked first in AI searches.

Errors like this are, in essence, a typical manifestation of AI "hallucination".

Experts point out that large models learn from online data and cannot verify that data on their own. When false information appears repeatedly online, AI can easily output it as a correct result, confidently talking nonsense with a straight face.

Current technology cannot eliminate hallucinations entirely; the error rate can only be reduced by improving data sources and strengthening retrieval and verification.

The incident has also sparked discussion among netizens about the reliability of AI. Some joked about whether similar errors would turn up when searching for other historical figures, while others shared their own experiences of being misled by erroneous AI output and called for more accurate AI content.

The platforms involved are now optimizing their search logic to prioritize images from authoritative historical sources and reduce interference from online misinformation.