At a special Android event on the eve of this year's I/O developer conference, Google once again put its general-purpose large model Gemini front and center, announcing a series of new features built around "helping you control your phone." These features will appear in the Chrome browser, system autofill, and other scenarios.

Google also introduced a new umbrella name: "Gemini Intelligence." According to Ben Greenwood, Google's Android experience director, the name stands for "releasing the best capabilities of Gemini on the most advanced Android devices." It is essentially a bundle of existing and new features aimed mainly at high-end flagship phones such as the Galaxy S26, forming a feature set akin to an "advanced Android experience."

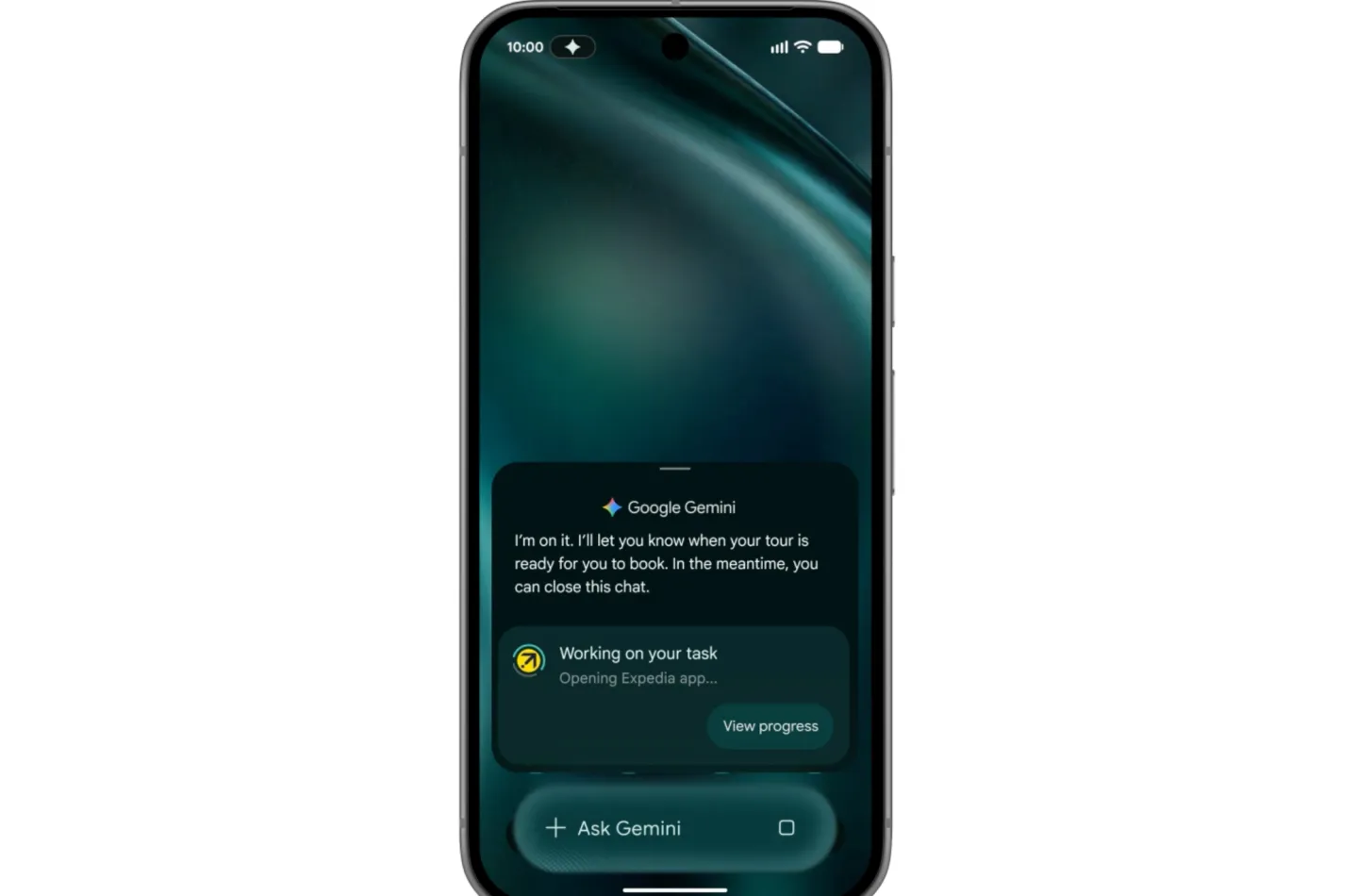

At the heart of this bundle is task automation, a feature previously tested on some new Pixel and Samsung Galaxy phones that lets Gemini operate specific apps directly on the phone. Until now it has mainly supported a handful of apps, such as ride-hailing and food delivery; Google now says it will open up to a wider app ecosystem "soon." Task automation will also expand from voice and text commands to multimodal input, so users can trigger actions with screenshots or photos. For example, give Gemini a screenshot of a shopping list from a notes app, and it will automatically add the items to a cart on an e-commerce or grocery platform, provided the device itself supports Gemini Intelligence.

Under Gemini Intelligence, Google also unveiled a new feature called "Create My Widget," which it describes as the first step toward "generative UI." Users only need to describe the widget they want in natural language, and Gemini will automatically generate the corresponding home screen widget. Google's examples include a custom weather widget that highlights wind speed and precipitation probability for cycling enthusiasts, and a recipe widget that automatically recommends "three high-protein meal preparation recipes" every week. Once generated, these widgets will appear not only on the phone's home screen but also on Wear OS smartwatches, creating a unified experience across devices.

In terms of interaction design, Google hopes users will come to see widgets as "mini applications that can be easily written on the desktop using AI," pushing interfaces toward forms that are generated instantly and change on demand. Industry observers are also watching whether Google will lay out a longer-term roadmap for "generative UI" at I/O: whether interfaces will truly be rebuilt on the fly to fit the context, or whether the effort will remain more of a widget-level personalization experiment.

Beyond system-level features, Google is also bringing some of Gemini's desktop capabilities to Chrome for Android. Users will see a dedicated Gemini button in Chrome that shares the current web page with Gemini, letting them ask questions about the page or generate a summary without leaving the browser. For subscribers to the Google AI Pro or Ultra plans, Chrome will also offer "auto browse" to help complete tasks such as navigating between websites, filling in information, and booking appointments. This feature is expected to roll out gradually starting in late June.

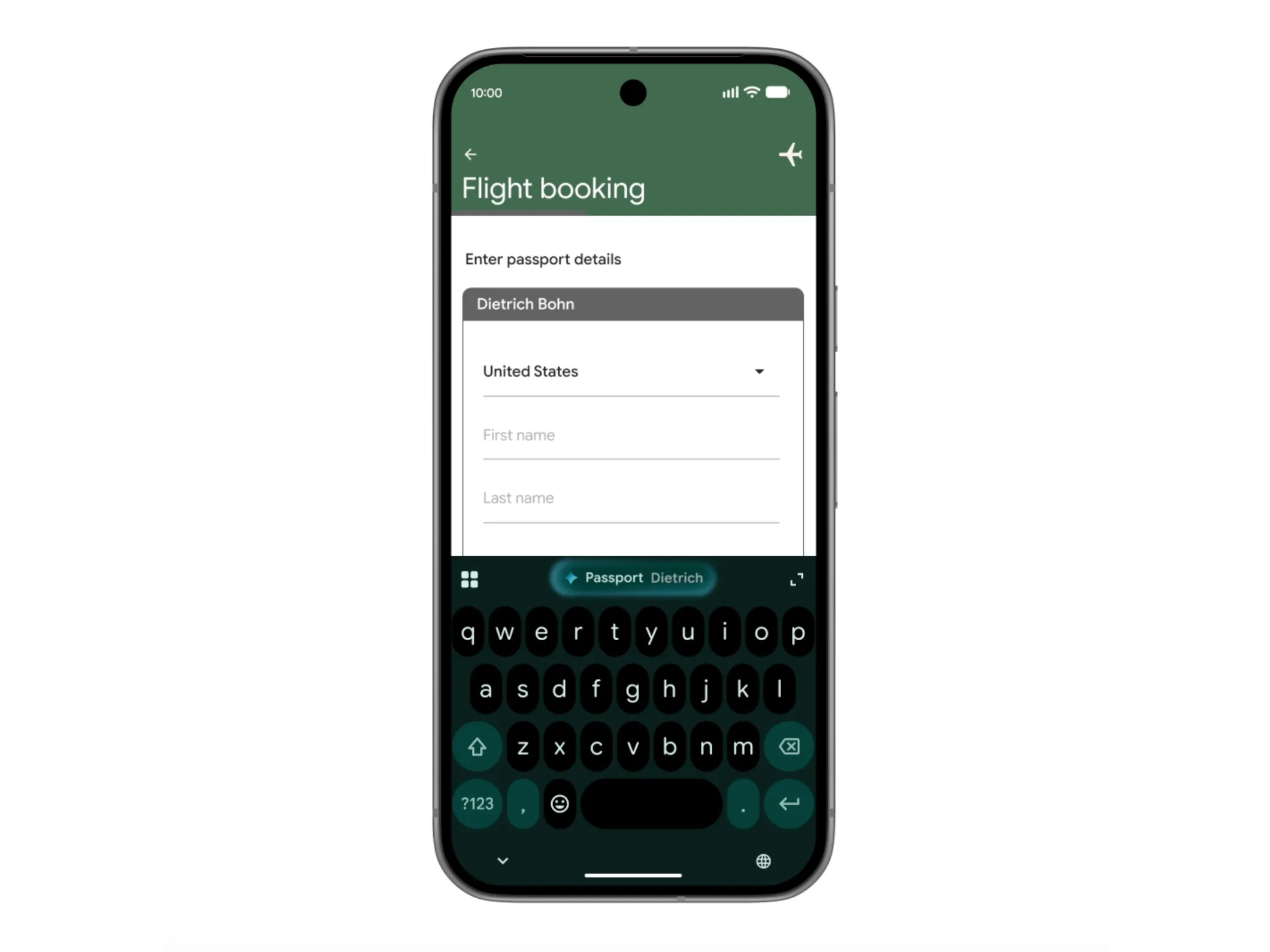

Autofill, another important system-level entry point, will also see Gemini step in more deeply, though "conditionally." Google said users can choose to connect Gemini to Android's autofill function to help fill in forms. To do so, Gemini will use what Google calls its "Personal Intelligence" interface to pull relevant information, with the user's authorization, from personal data sources such as Google Photos and Gmail; for example, it can recognize a license plate number in a photo and enter it into a form. On the one hand, this approach makes form filling significantly more efficient. On the other, it has prompted a new discussion about the boundary between privacy and convenience: whether it is "extremely considerate" or "a bit weird" is left to the user to judge.

According to Google's plan, Gemini Intelligence features will roll out this year "in batches and according to maturity." The first wave will again target high-end Android phones such as Samsung Galaxy and Google Pixel models, which are expected to start receiving updates this summer.