OpenAI's agent Codex is now competing head-on with Claude Cowork. Codex began as OpenAI's flagship code generation model, powers products such as GitHub Copilot, and has become an indispensable AI assistant for developers around the world. This update is a major one: YouTube creator Mike Russell released a test video, and the results were striking.

He handed his Mac over entirely to OpenAI's newly upgraded Codex and let GPT-5.5 drive Adobe Audition to repair audio, Photoshop to make a cover, and Adobe Firefly to generate AI video.

From start to finish, there is zero human intervention.

This is not a staged demo or a slide deck. It is a real creator handing his entire production toolchain over to AI and letting it run the whole workflow.

Greg Brockman, co-founder and president of OpenAI, directly said: "Everyone can use Codex, and all computer tasks can be done!"

Yes, a tool for writing code suddenly wants to steal everyone’s keyboard.

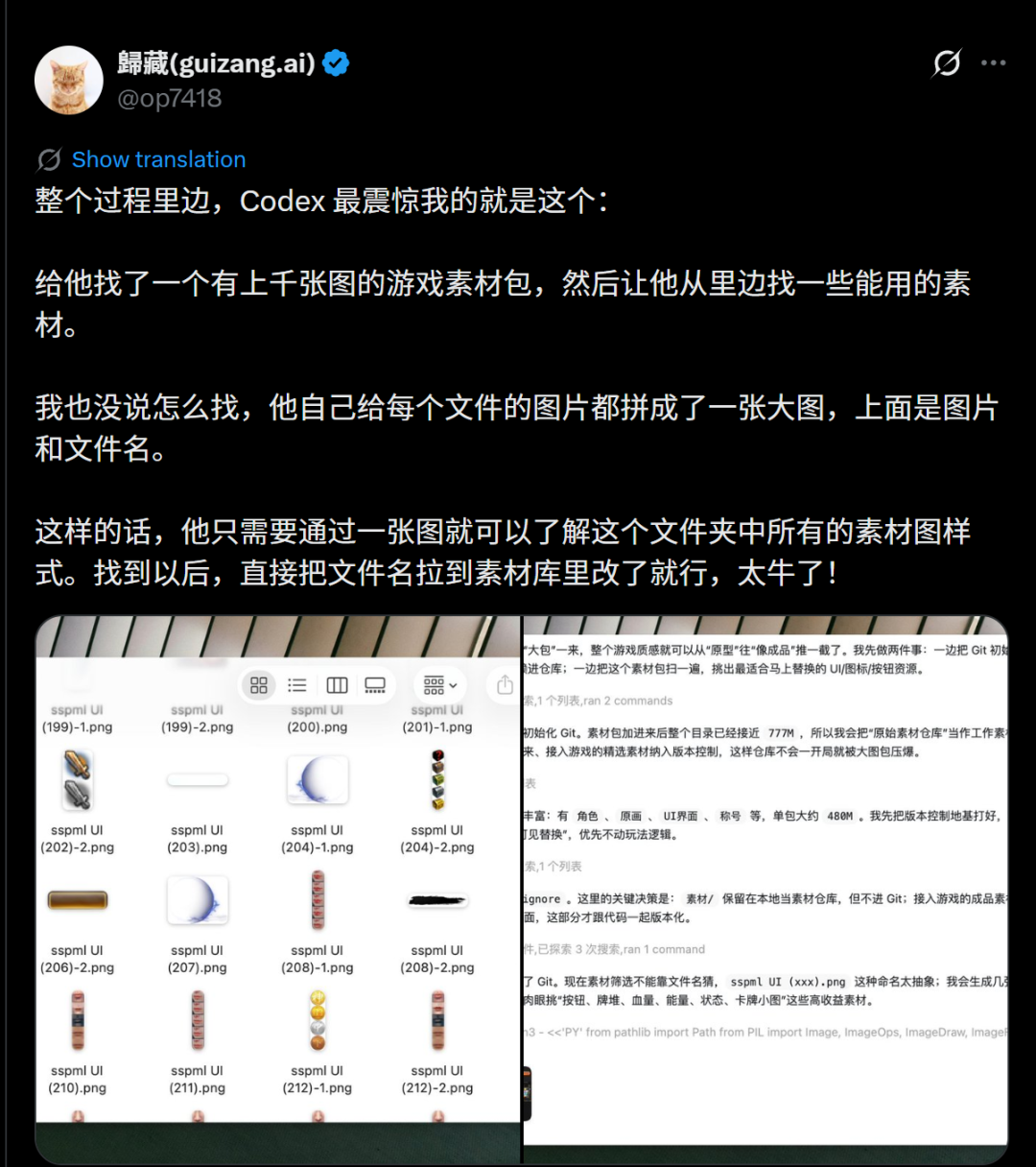

AI influencer Guizang said that Codex built him a complete game in a single afternoon from a one-sentence prompt.

What surprised him most was how Codex handled assets: he gave it an asset pack containing thousands of images without explaining how to choose among them.

Codex automatically combined the images in each folder into a single overview image labeled with file names.

This way, the style of all the assets in a folder can be grasped from one image, and a chosen file can then be loaded directly by name. The move impressed him so much that he called Codex awesome!
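The overview-image idea can be sketched in a few lines. The following is only an illustration of the layout step, not Codex's actual implementation: the function name `plan_contact_sheets` and the parameters `thumb`, `label_h`, and `columns` are hypothetical, and the pixel compositing itself (pasting thumbnails and drawing labels with an imaging library) is left out.

```python
import math
from collections import defaultdict
from pathlib import PurePosixPath


def plan_contact_sheets(paths, thumb=128, label_h=16, columns=None):
    """Group image paths by folder and give each file a labeled grid cell.

    Hypothetical helper: it only plans the layout of one overview sheet
    per folder; drawing the pixels would need an imaging library.
    """
    by_folder = defaultdict(list)
    for p in sorted(paths):
        path = PurePosixPath(p)
        by_folder[str(path.parent)].append(path.name)

    sheets = {}
    cell_h = thumb + label_h  # thumbnail plus a strip for the file name
    for folder, names in by_folder.items():
        # Default to a roughly square grid when no column count is given.
        cols = columns or math.ceil(math.sqrt(len(names)))
        rows = math.ceil(len(names) / cols)
        cells = [
            {"label": name, "x": (i % cols) * thumb, "y": (i // cols) * cell_h}
            for i, name in enumerate(names)
        ]
        sheets[folder] = {"size": (cols * thumb, rows * cell_h), "cells": cells}
    return sheets


if __name__ == "__main__":
    demo = ["trees/oak.png", "trees/pine.png", "trees/elm.png", "rocks/a.png"]
    for folder, sheet in plan_contact_sheets(demo).items():
        print(folder, sheet["size"], [c["label"] for c in sheet["cells"]])
```

Because every cell keeps its file name as a label, whoever reads the sheet (a person or a vision model) can pick an asset visually and then open it by name, which is the workflow described above.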

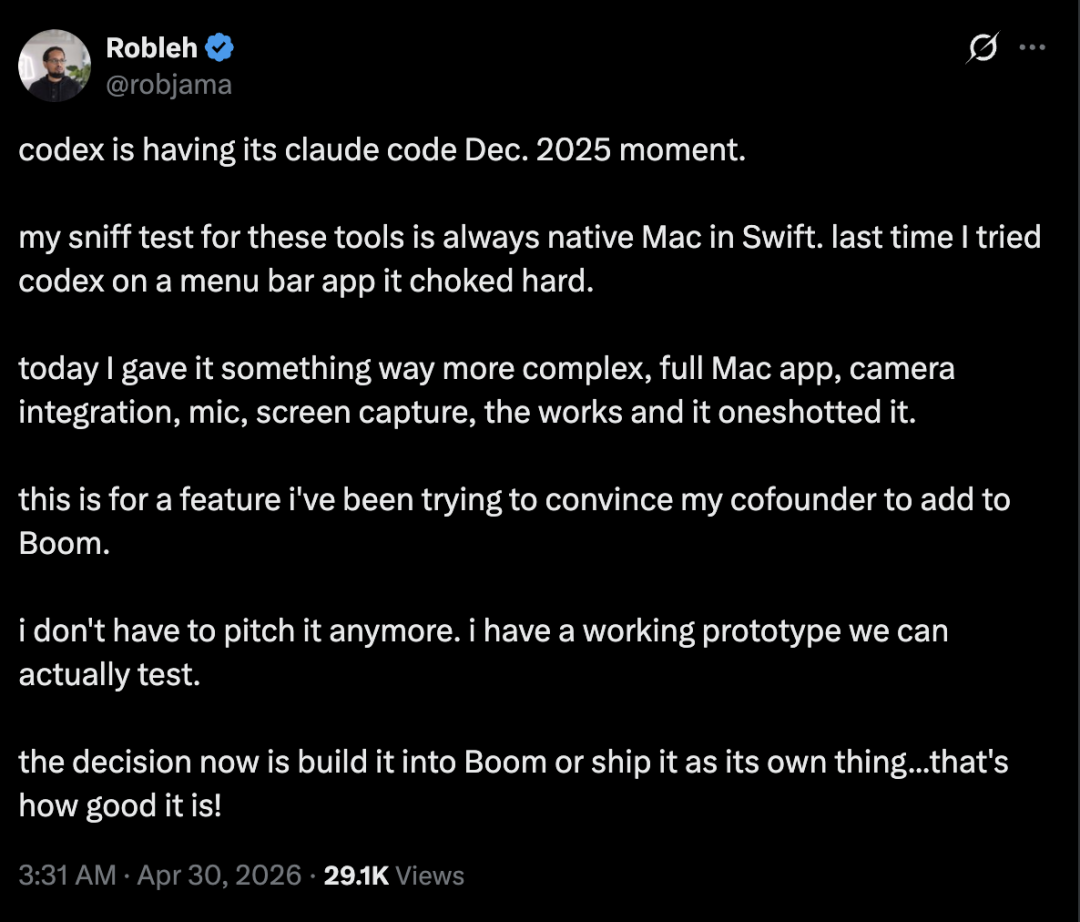

Users online said that Codex has finally had its "Claude Code moment": it built a complex, complete Mac application integrating a camera, microphone, and screen recording in a single pass.

Those who have tried Codex say they simply cannot stop!

Codex has changed: from code assistant to computer manager

Until now, everyone had a clear picture of Codex: it was a tool for writing code. It could complete functions, fix bugs, and generate scripts. It was the programmer's co-pilot.

This upgrade blows that boundary wide open.

The key sentence in OpenAI's official announcement: Codex now supports Slack integration and Google Workspace integration. In plain language, it can not only write code but also read your emails, reply to your Slack messages, and work in your Google Docs and Sheets.

That sentence lays OpenAI's ambitions bare: it no longer positions Codex as a developer tool, but as a general-purpose computer-control agent.

Just yesterday, Codex suddenly announced a big wave of updates.

It can automatically summarize changes, perform data analysis, and assist decision-making across Slack, Gmail, and Calendar.

It can organize research materials and create spreadsheets and presentations.

It can analyze data exports, flag what has changed, and draft reports interpreting the results.

It can also compare multiple options against set criteria and track the trade-offs.

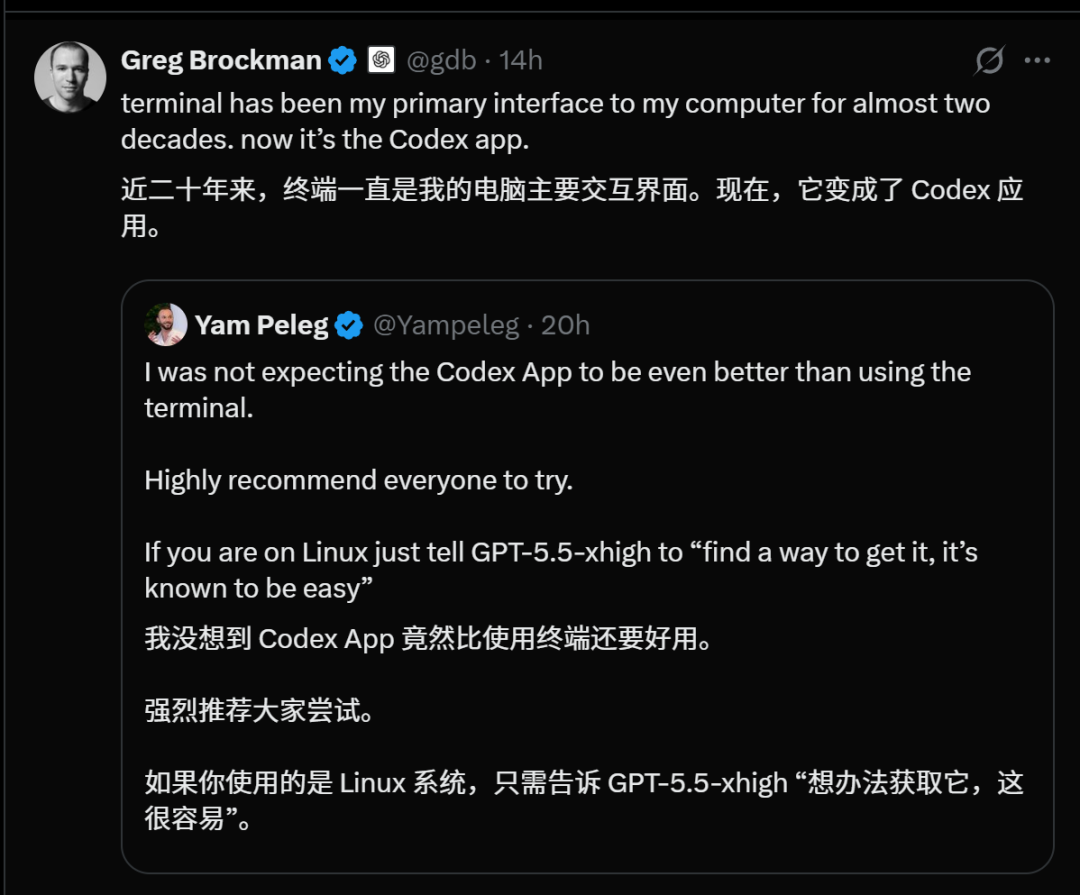

OpenAI co-founder Greg Brockman, a veteran hacker who has lived in black-screen command-line terminals for 20 years and treats code as his life, announced publicly: "I have completely fallen in love with the Codex App, which has replaced the terminal I used for 20 years."

Developers all know what this means.

The update was powerful enough that Sam Altman posted: "Codex is experiencing a ChatGPT moment!"

Following yesterday's batch of updates, early this morning Tibo, a core member of the OpenAI Codex team, posted "Feeling codexy today" on X, hinting that another major Codex update was on the way.

The post set developer circles buzzing at once!

Sure enough, it did not take long for OpenAI to start releasing new use cases.

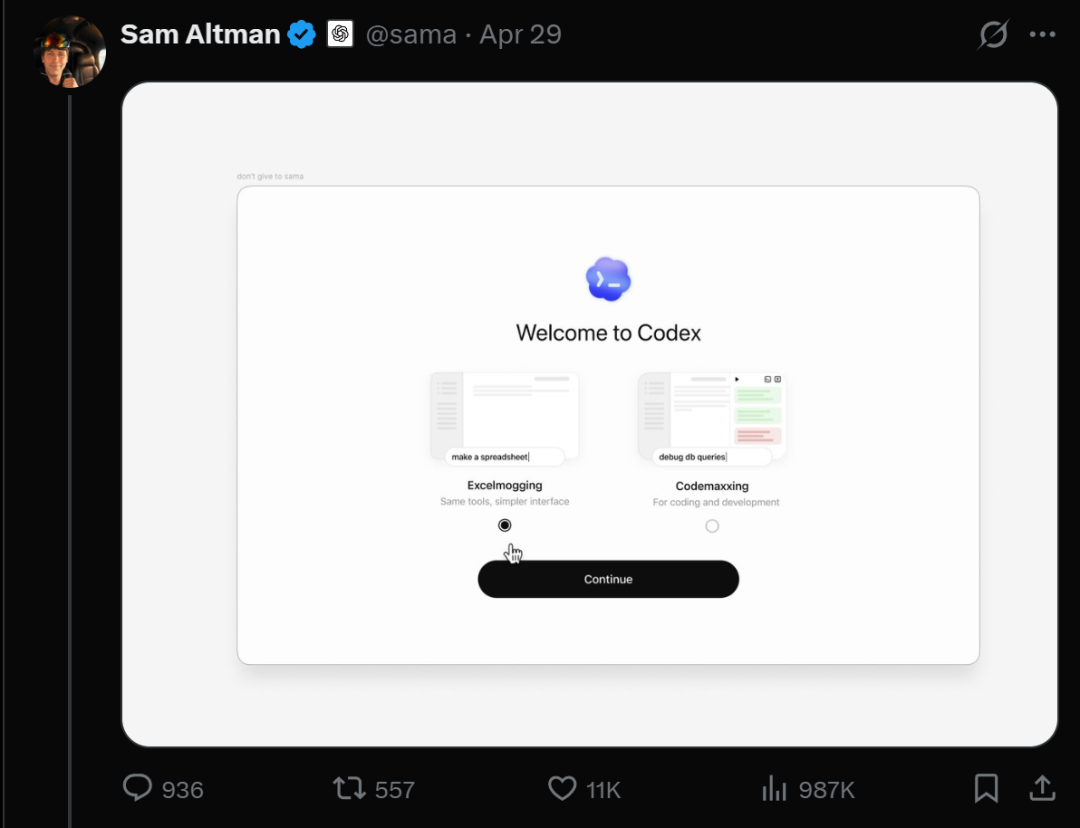

Handling your daily tasks with Codex has never been easier. You can choose your role, connect the apps you use every day, and try out recommended prompts.

Whether it's research and planning, or documents, presentations, spreadsheets, etc., Codex can help.

Codex recommends useful plug-ins based on your role and guides you through connecting applications such as Slack, Google Workspace, Microsoft 365, and more.

It acts like your personal assistant, able to aggregate data from different applications and documents, plan next steps, draft work, organize research, or create project plans.

You can see at a glance what's going on, including task progress, files and tools used, and what to do next.

From draft to finished article, you can review the content as it takes shape in Codex. Open the file, suggest changes, and continue to optimize and adjust in the same conversation thread.

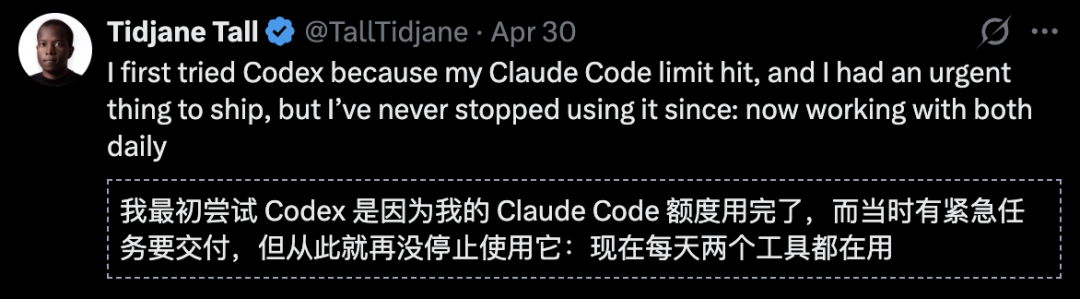

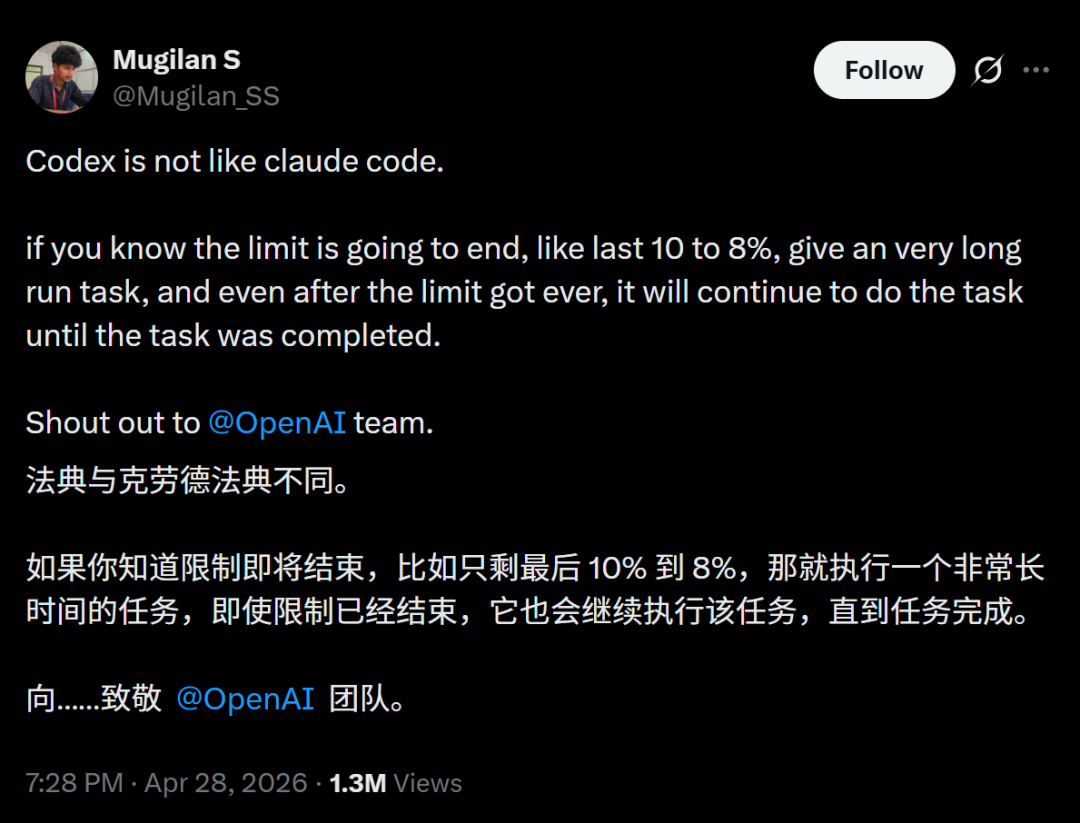

One prominent developer said that Codex and Claude Code are very different.

If your quota is about to run out, you can still start a long-running task. Even after the quota is exhausted, Codex keeps working until the task is finished.

Sam Altman reposted this himself.

Tibo also said that between good user experience and optimizing profit margins, OpenAI chose the former.

OpenAI even released an official blog guide to introduce how to use Codex in daily work.

Claude Code's number one fan turns to Codex, Altman applauds

On the same day that Codex was upgraded, another drama unfolded.

On the

Altman soon showed up and replied with a Star Wars meme: "Welcome to the light side!"

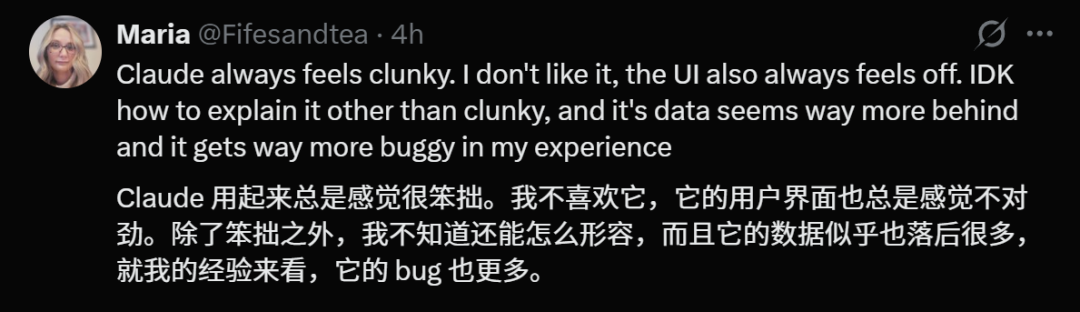

Sure enough, more developers came forward and said that they really didn't like using Claude because it was clumsy, the user interface was always wrong, and there were many bugs.

This time, the developers themselves voted with their feet.

Codex testing is crazy!

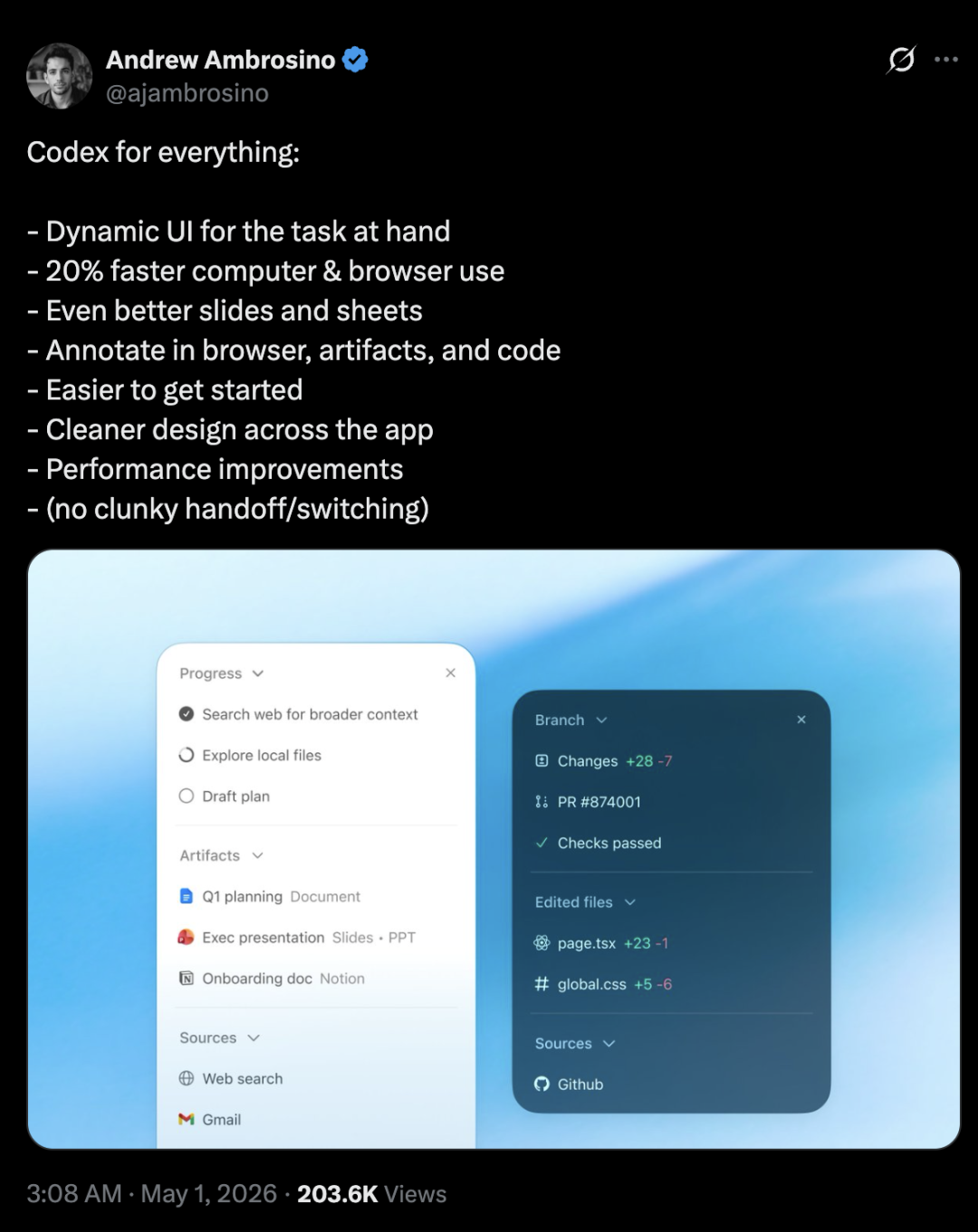

Codex App developer Andrew Ambrosino said bluntly: "Codex handles everything!"

In this update, Codex automatically adapts a dynamic UI to the current task for a better experience:

Better slide and table experience

Supports direct annotation in browsers, artifacts, and code

Getting started is easier

The overall design is simpler

Overall performance improvement

A device toolbar has also been added to the Codex in-app browser, making it easier to build and test responsive applications.

Browser use is faster as well (approximately 30% in subjective testing).

Still, a tool is only truly good when everyone says so. The first wave of hands-on tests from across the web has arrived. Let's take a look!

Taking over the entire Mac while the human watches, hands off

Mike Russell's hands-on test is the most direct proof of this upgrade.

He gave Codex three tasks:

Task 1: Audio restoration. One recording had significant background noise and sibilance issues. Codex automatically opened Adobe Audition, identified the noise characteristics, applied noise reduction filters, adjusted EQ parameters, and exported the finished file.

Russell later commented: "Professional-grade restoration, cleaner than my manual adjustment."

Task 2: Podcast cover design. Codex opened Photoshop, automatically selected a color scheme to match the podcast theme, formatted the title text, adjusted the layer blending mode, and output a cover image ready for upload.

Task 3: AI video generation. Codex called Adobe Firefly to generate video clips from text descriptions, then automatically spliced them together and added transitions.

Three tasks across three professional Adobe applications, all completed automatically.

Russell repeatedly emphasized one detail in the video: he did not touch the mouse, keyboard, or even switch windows during the entire process. Codex itself completes the switching and coordination of all software at the operating system level.

“This is not AI helping me work,” Russell said. “This is AI doing the work for me.”

This Codex upgrade is aimed not at programmers but at everyone who relies on a computer for work.

When AI can control your entire computer, the very skill of "knowing how to use software" loses value.

Of course, Russell's test results were not perfect.

The video generated by Firefly had obvious jitter in several frames, and Codex did not automatically detect and correct it. The text layout of the Photoshop cover had inconsistent font sizes on the first attempt. Codex noticed the problem and made a second adjustment before the cover passed.

Russell's summary is realistic: "It's not 100 points, it's about 85 to 90 points. But the point is: it took 8 minutes to reach this level, and it would have taken me 2 hours to do it myself."

85 points in 8 minutes versus 100 points in 2 hours. In most scenarios, the former wins.
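To make the comparison concrete, the two figures can be turned into a rate. This is a back-of-the-envelope sketch only: "quality points per minute" is an assumed metric introduced here for illustration, not one Russell used, and the lower bound of his 85 to 90 estimate is taken for Codex.

```python
def points_per_minute(score: float, minutes: float) -> float:
    """Quality points delivered per minute of elapsed time (assumed metric)."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return score / minutes


codex_rate = points_per_minute(85, 8)       # Codex: about 85 points in 8 minutes
manual_rate = points_per_minute(100, 120)   # by hand: 100 points in 2 hours
ratio = codex_rate / manual_rate            # how many times faster per point
minutes_saved = 120 - 8

print(f"Codex:  {codex_rate:.2f} points/min")
print(f"Manual: {manual_rate:.2f} points/min")
print(f"Ratio:  {ratio:.2f}x, saving {minutes_saved} minutes per job")
```

On these numbers the automated run delivers more than ten times as many points per minute, which is why a 10 to 15 point quality gap is acceptable wherever the output does not need to be flawless.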

Codex helps you take unlimited shots at zero cost

Matthew Berman showed how to use Codex to produce unlimited product shots, turning a single web link into a complete set of e-commerce photos:

Before: A set of e-commerce product images costs US$5,000-25,000 and takes 4 weeks.

Now: Enter a URL and the shots are ready in 10 minutes at zero cost.

He packaged the entire system as the "Brand Shoot Kit".

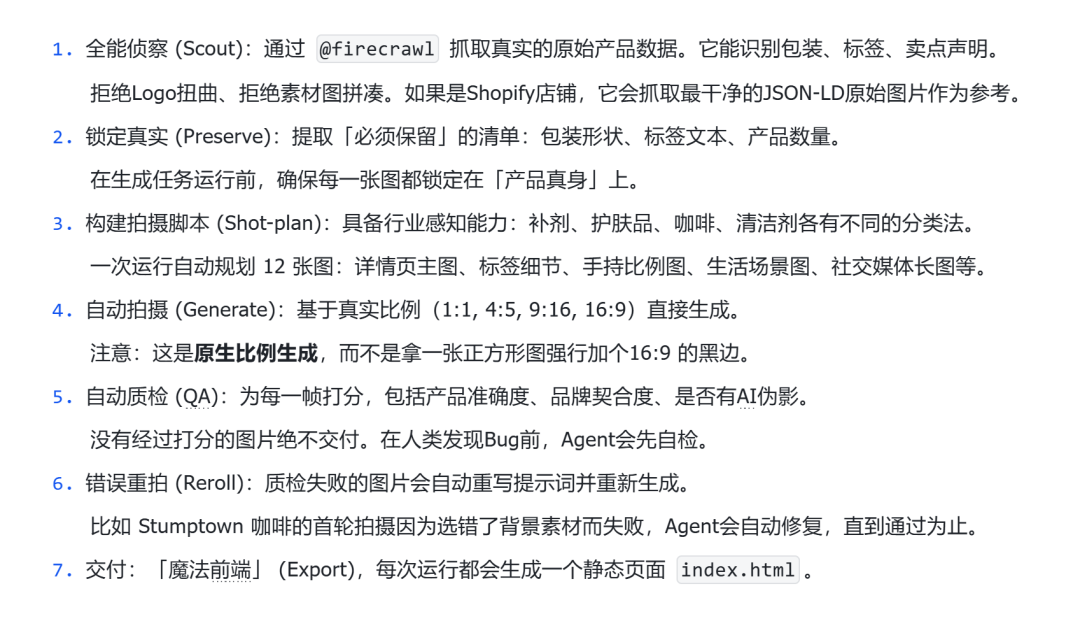

How does it turn a web link into an entire e-commerce photography library?

All you need are the following 7 Agent skills:

Is the human keyboard finally going to be eliminated?

In the past, manually debugging the UI was often very labor-intensive.

There is an unspoken pressure to check the AI's work piece by piece each time, to make sure it has not broken other, unrelated parts.

But if we can also hand over runtime UI behavior testing to AI, then the burden on humans can be reasonably reduced.

Now, Codex finally brings hope!

Evidently, Codex can already use the mouse to check, item by item, whether the UI and its behavior are working correctly, and the entire process is automated.

Users online marveled: "This feels like 'what people have been waiting for AI to do' has finally arrived. I feel like we're getting closer to the tipping point of the next big shift."

At the end of the video, Russell said this: "When AI can control your entire computer, the skill of using software will become devalued."

This time, Codex is not coming for programmers. After all, programmers have long been accustomed to AI writing code.

This time the target is everyone who relies on a computer for work: those who build slide decks, write emails, cut audio, edit pictures, and prepare reports.

The previous logic was that people learn to use tools, and tools magnify people's abilities. Now the logic has begun to change: AI has learned to use tools, and people only need to clearly explain what they want.

Codex is not so much upgrading its features as redefining what "using a computer" means.

During Russell's 45-minute test, the mouse moved by itself, the software switched by itself, and the audio rendered by itself on that Mac. That scene will probably become the most vivid image of 2026.

In the past, humans used the mouse to call software, but now AI uses APIs to call software.

What’s next? Unthinkable.