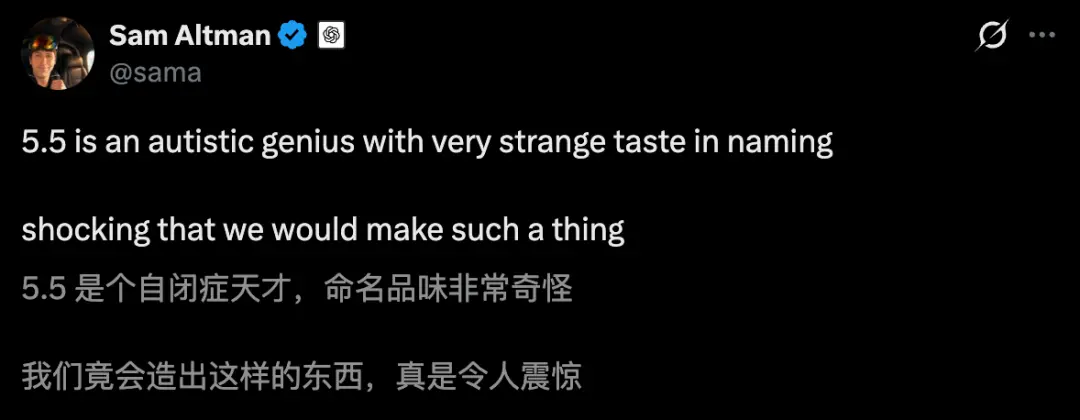

Altman has just personally given GPT-5.5 a nickname that stunned the Internet: "Autistic Genius." In the half month since GPT-5.5 went live, Altman has openly shared his excitement on social media many times. He couldn't help but marvel: "I can't believe we actually created such an AI!"

In Altman's own words, GPT-5.5's "raw intelligence" has opened up a commanding lead:

Its benchmark scores are dominant, its token savings are dramatic, and its performance is a display of sheer brute force.

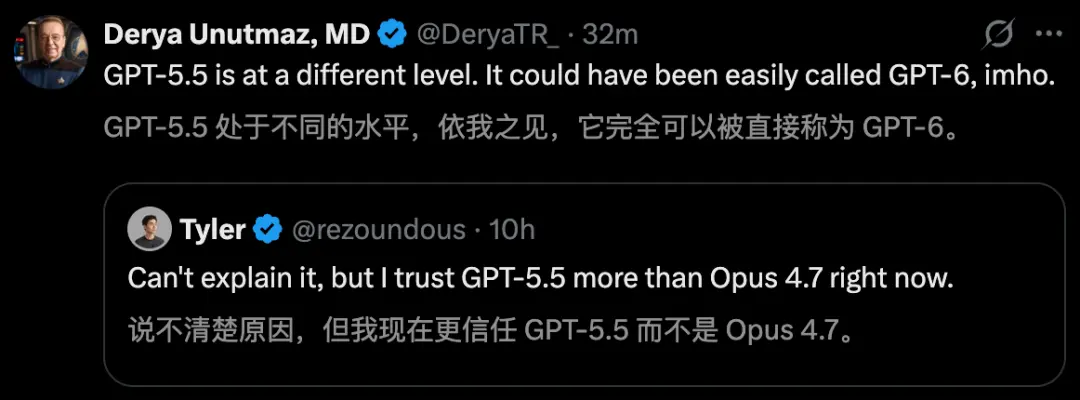

Big names in the AI community have voted for GPT-5.5 with their feet. Professor Derya Unutmaz even said bluntly that it could be called GPT-6!

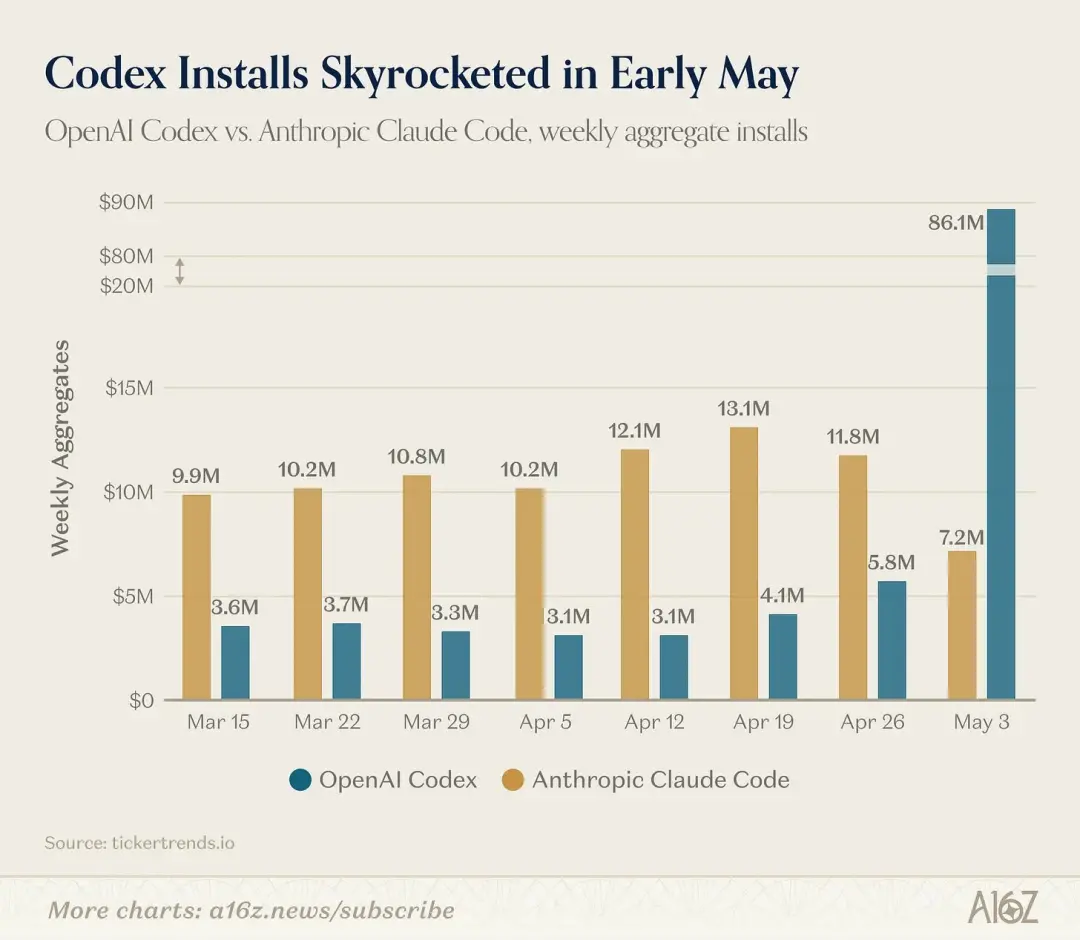

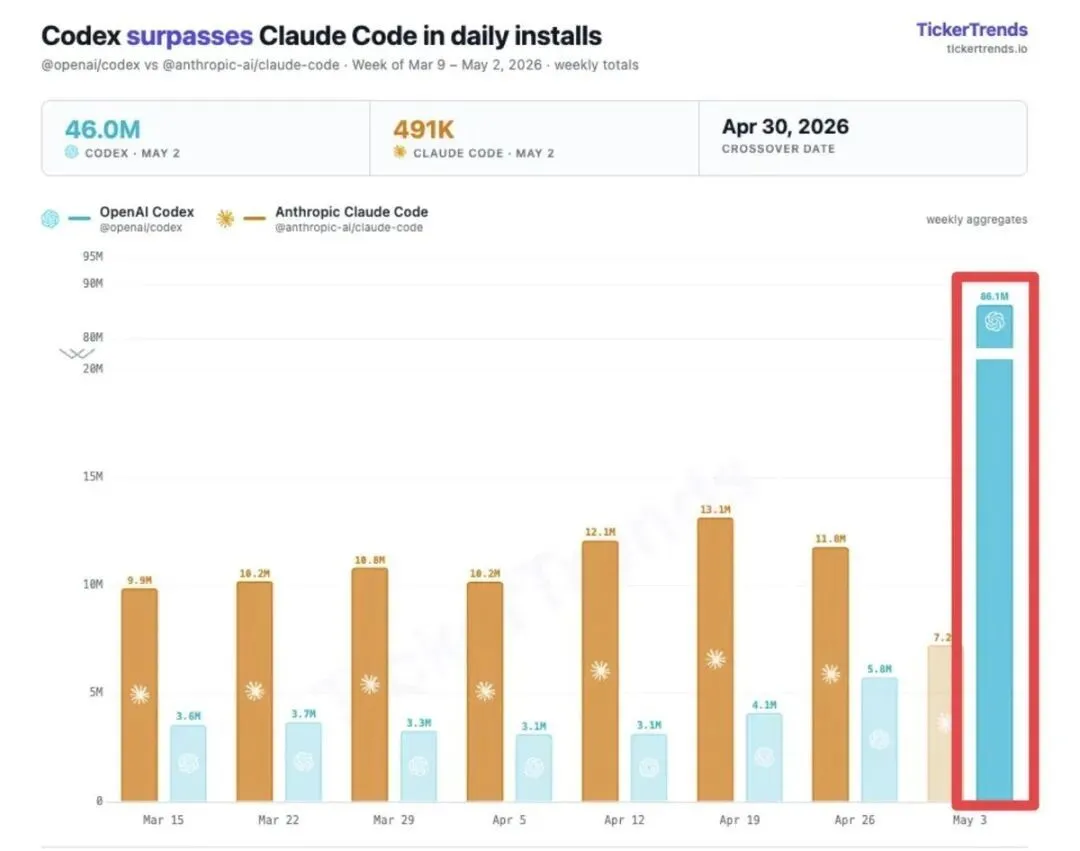

Also today, a chart went viral across the Internet. Downloads of Codex, powered by GPT-5.5, soared in May to 86.1 million, far exceeding those of Claude Code.

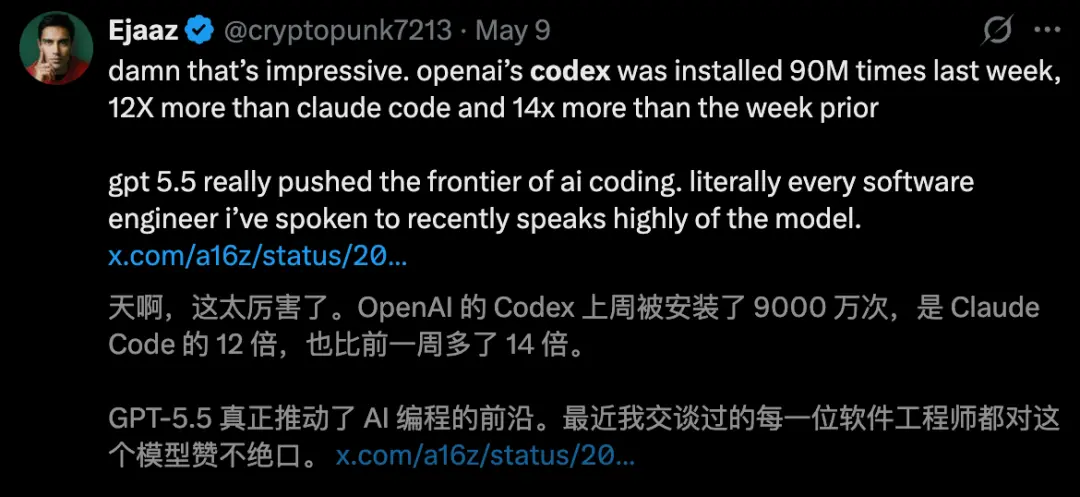

Last week alone, downloads exceeded 90 million, 12 times as many as Claude Code.

Developer feedback backs this up.

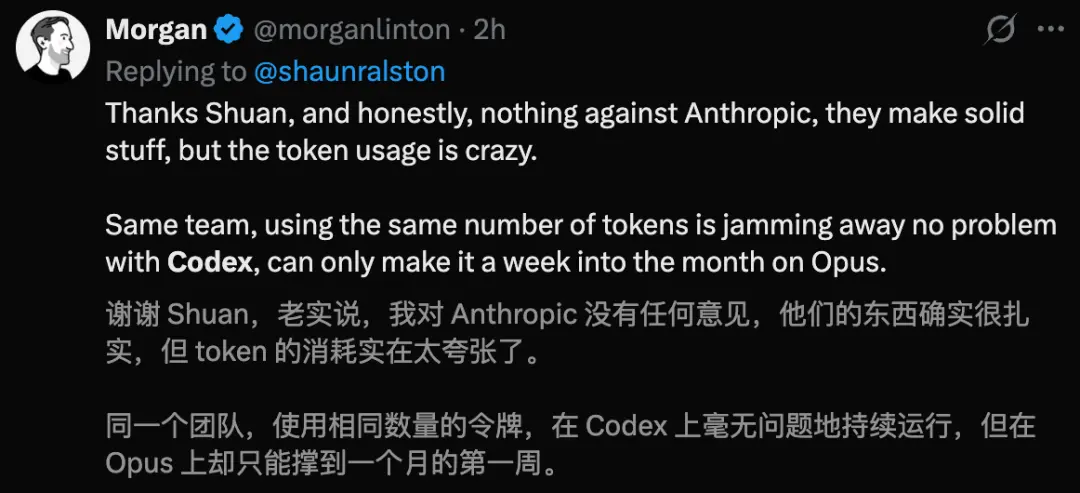

Many have said publicly that GPT-5.5 outperforms Claude Opus 4.7 in real-world coding tasks, especially in token consumption.

For the same task, GPT-5.5 uses nearly 40% fewer tokens than Claude.

The "autistic genius" metaphor, it must be said, is almost painfully accurate.

A 16-person team can save $32,000 a month by canceling Claude

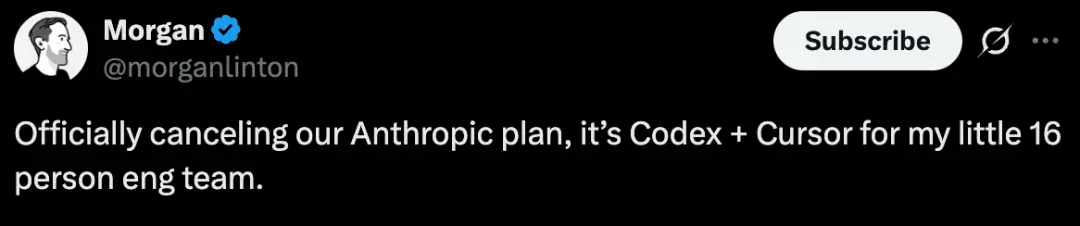

Morgan Linton, founder of the startup Bold Metrics, published a post that was calm in tone but explosive in content:

Officially saying goodbye to Anthropic!

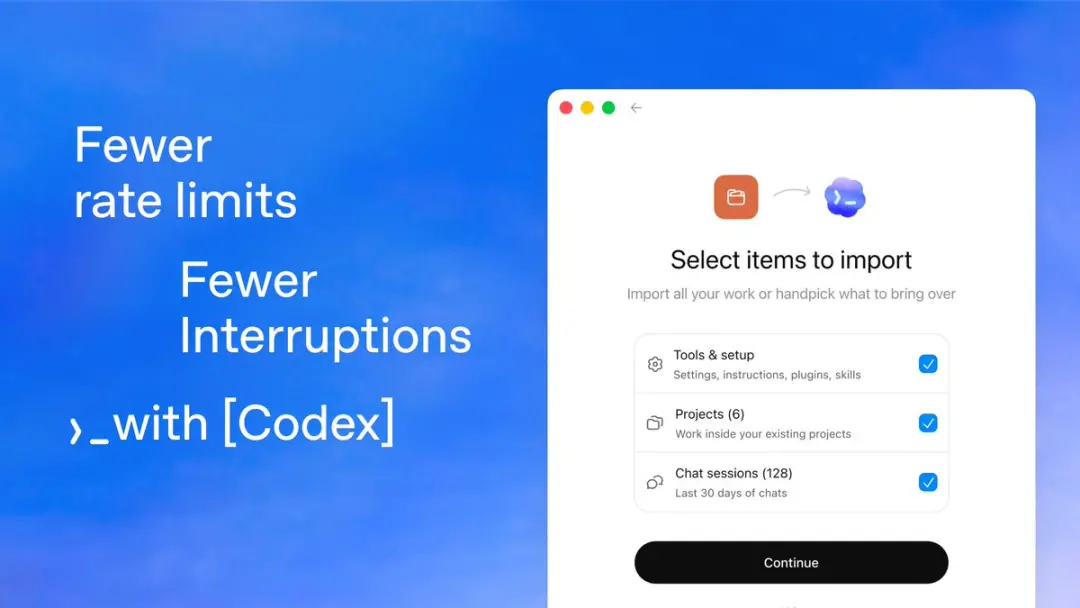

For my small engineering team of 16 people, the combination of Codex + Cursor has completely replaced the original solution.

The reason is simple and blunt: Claude Code is too expensive!

By contrast, Codex backed by GPT-5.5 has performed impressively of late, and it is extremely token-efficient, which saves a great deal of money.

In actual work, Bold Metrics still frequently uses Cursor for code review.

Most importantly, the team's use of Cursor has never hit a usage limit so far, and its built-in Composer 2 is sufficient for the vast majority of development scenarios.

Linton did the math on Claude's token costs:

Each engineer costs more than $2,000 per month, and 16 people spend more than $32,000 per month.
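Linton's arithmetic can be sketched in a few lines. This is only an illustration: the function name `monthly_team_cost` is hypothetical, and because the post says "more than $2,000" per engineer, the result is a lower bound on the Claude bill rather than an exact figure. The cost of the replacement Codex + Cursor setup is not given in the post, so it is not modeled here.

```python
def monthly_team_cost(engineers: int, cost_per_engineer: float) -> float:
    """Total monthly spend for a team, given a flat per-engineer cost."""
    return engineers * cost_per_engineer


# Figures quoted in the post; the per-engineer cost is a stated minimum.
TEAM_SIZE = 16
CLAUDE_COST_PER_ENGINEER = 2_000  # USD per month, "more than" this amount

claude_monthly = monthly_team_cost(TEAM_SIZE, CLAUDE_COST_PER_ENGINEER)
print(f"Claude spend is at least ${claude_monthly:,.0f} per month")
```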

After switching to Codex + Cursor, the token efficiency of GPT-5.5 has drastically reduced the cost without compromising performance.

More unsettling still is his prediction that more and more engineering leaders will make the same decision.

The post landed like a depth charge on Anthropic's lifeline: the product is good, but its token consumption is simply a money grab.

What about Codex? The data already provides the answer.

With 90 million downloads a week, Codex has become a legend

TickerTrends data shows that as of May 3, Codex has been downloaded an eye-popping 86.1 million times, a 1397% increase week-on-week.

By May 8, this number further climbed to 90 million in a single week.

Meanwhile, Anthropic's Claude Code was downloaded 7.2 million times over the same period, a 38% drop week-on-week.

One is surging while the other is bleeding. The speed of the reversal is breathtaking.

The tipping point for this wave of growth is clear:
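As a quick sanity check on the "12 times" claim, the ratio can be computed from the figures quoted in this article. This is a rough sketch: the helper name `download_ratio` is hypothetical, and it assumes the 7.2 million Claude Code figure is comparable to both Codex figures, which the article does not spell out.

```python
def download_ratio(codex_millions: float, claude_millions: float) -> float:
    """How many times larger the Codex download count is than Claude Code's."""
    return codex_millions / claude_millions


# Download counts in millions, as quoted in the article.
CODEX_AS_OF_MAY_3 = 86.1
CODEX_WEEK_TO_MAY_8 = 90.0
CLAUDE_CODE_SAME_PERIOD = 7.2

for label, codex in [("May 3", CODEX_AS_OF_MAY_3), ("May 8", CODEX_WEEK_TO_MAY_8)]:
    ratio = download_ratio(codex, CLAUDE_CODE_SAME_PERIOD)
    print(f"{label}: Codex is about {ratio:.1f}x Claude Code")
```

Both ratios land close to 12, consistent with the multiple cited above.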

On April 30, Codex released version v0.128.0, which introduced a persistent/goal workflow and supported cross-session multi-step task planning.

Add the million-token context that GPT-5.5 brings and its 40% gain in token efficiency, and developers voting with their feet becomes a more honest signal than any benchmark.

Altman himself also used one word to describe the growth of Codex in an internal letter: crazy!

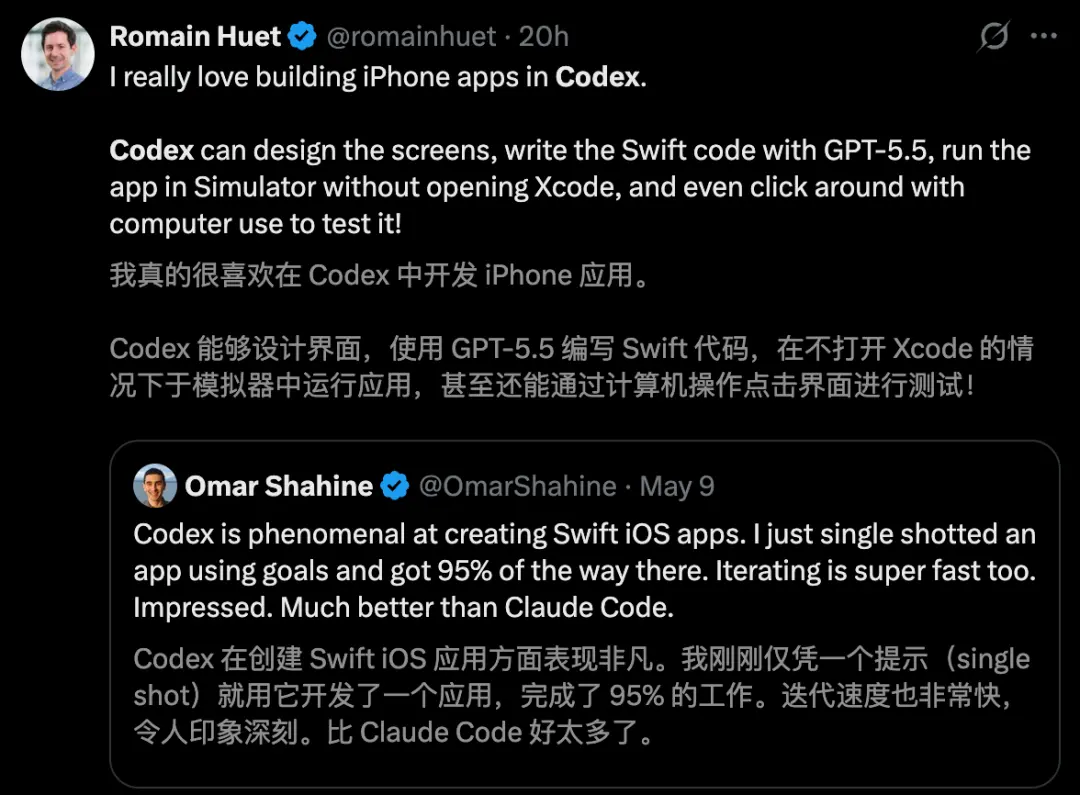

Microsoft Vice President Omar Shahine couldn't help but praise, "Codex has performed exceptionally well in creating Swift iOS applications."

He used just one prompt, and Codex produced the app directly, handling 95% of the work. He found it much easier to use than Claude Code.

Shortly afterwards, Romain Huet, head of developer experience at OpenAI, added:

Codex can design the interface, use GPT-5.5 to write Swift code, and you can run the app directly in the simulator without even opening Xcode. You can even use "computer control" to test it everywhere!

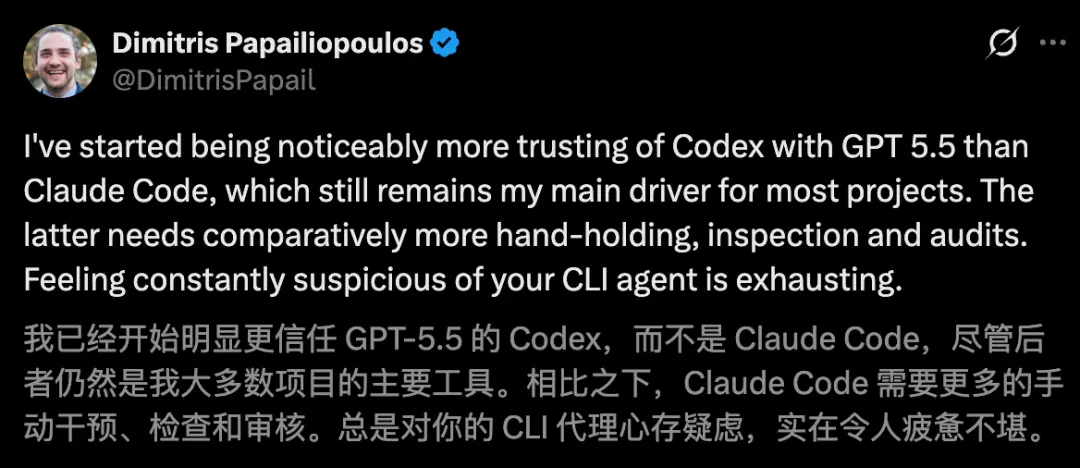

Developer Dimitris Papailiopoulos also said that he trusts Codex more.

Altman said that with Codex, he now has more free time.

Altman's late-night "truth," and a comment section out of control

Also today, Altman began soliciting opinions online: "What do you most want to see improved in OpenAI's next-generation model?"

The comment section quickly exploded with suggestions.

A top-liked comment nailed OpenAI to the wall

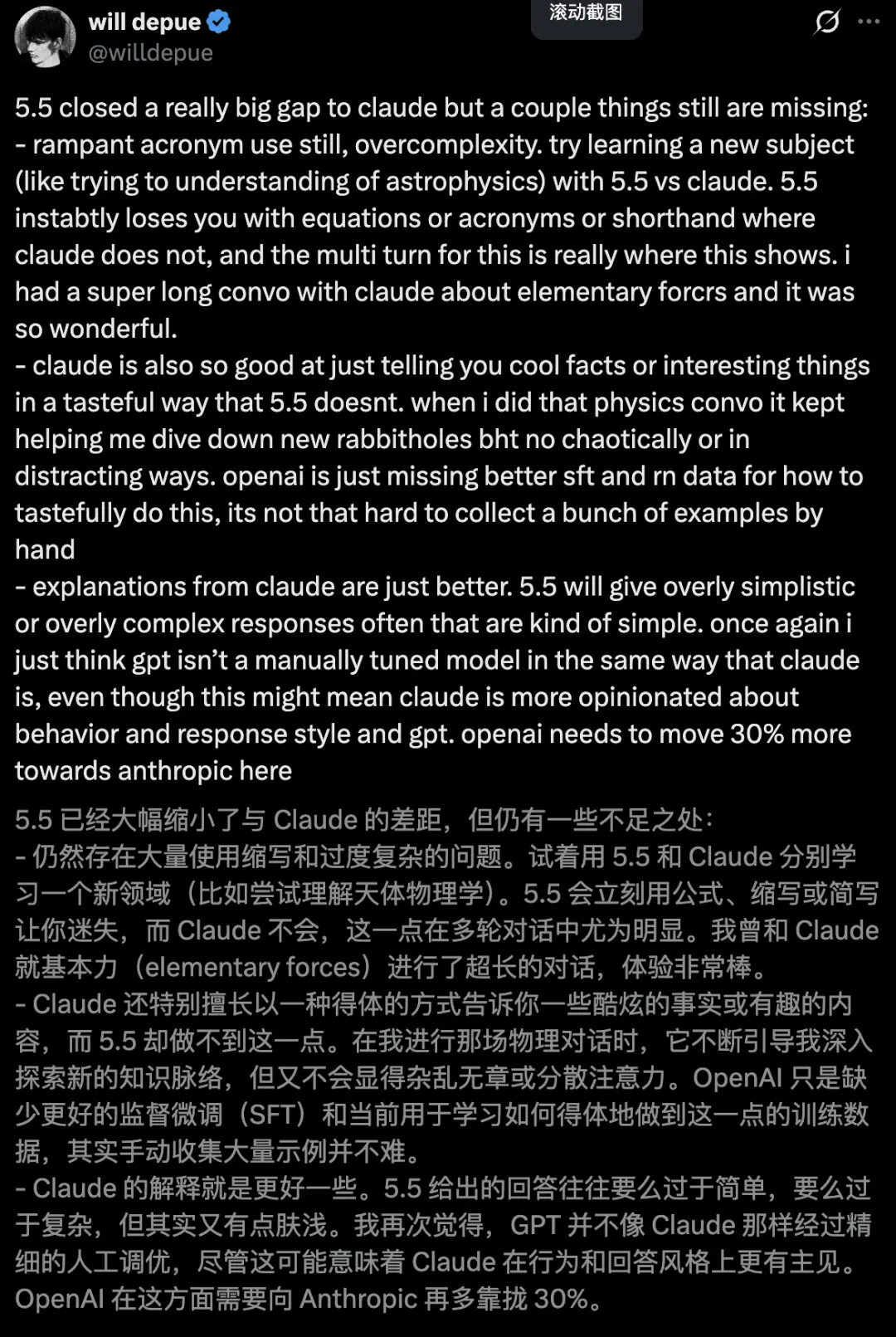

The reply from former OpenAI researcher Will Depue became the center of attention.

GPT-5.5 has narrowed the gap with Claude, but loses badly on "human touch."

He gave an example: when you want to learn astrophysics, GPT-5.5 immediately throws a pile of cold abbreviations and formulas at you, leaving you confused.

Claude, by contrast, is like a knowledgeable and elegant tutor who leads you down rabbit holes of knowledge in a way that is engaging without being messy.

Beyond that, he argued that OpenAI's data tuning is too mechanical, and urged the company to learn from Anthropic quickly and shift the model's "personality" and "explanatory power" by 30%.

The most powerful model on the Internet is being criticized for not being human enough.

Others hope that ChatGPT will improve its instruction following and its writing.

Front-end capability was the request netizens raised most often, and they hope to see significant improvement there.