Memory prices have risen three- to fivefold this year, which has seriously dampened consumers' willingness to buy PCs and mobile phones. The culprit behind this surge is strong demand from AI. It is well known that AI places very high demands on memory capacity and bandwidth (including the video memory on GPUs), but how high can those demands go? The eighth-generation TPU that Google released a few days ago is the best example.

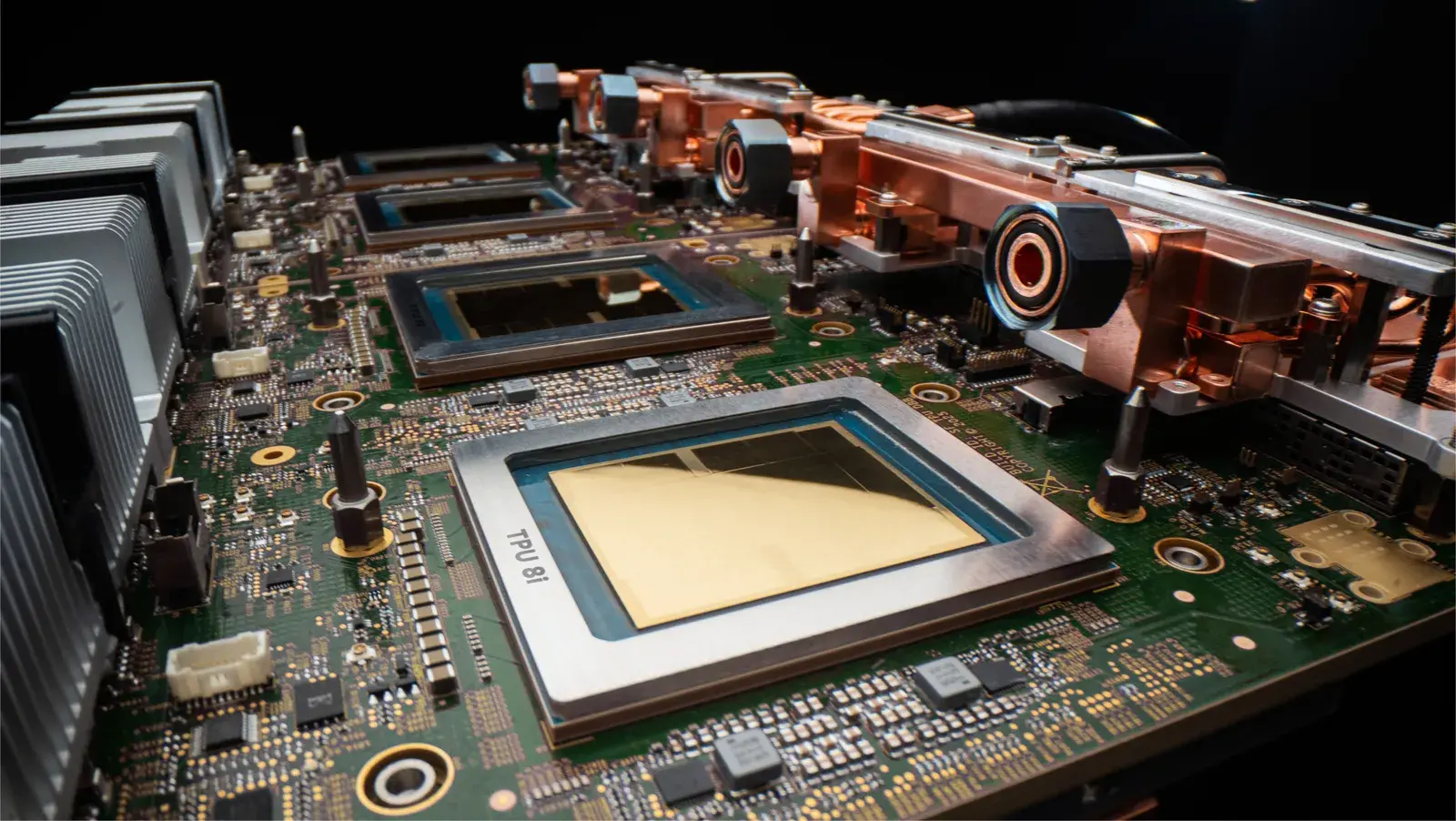

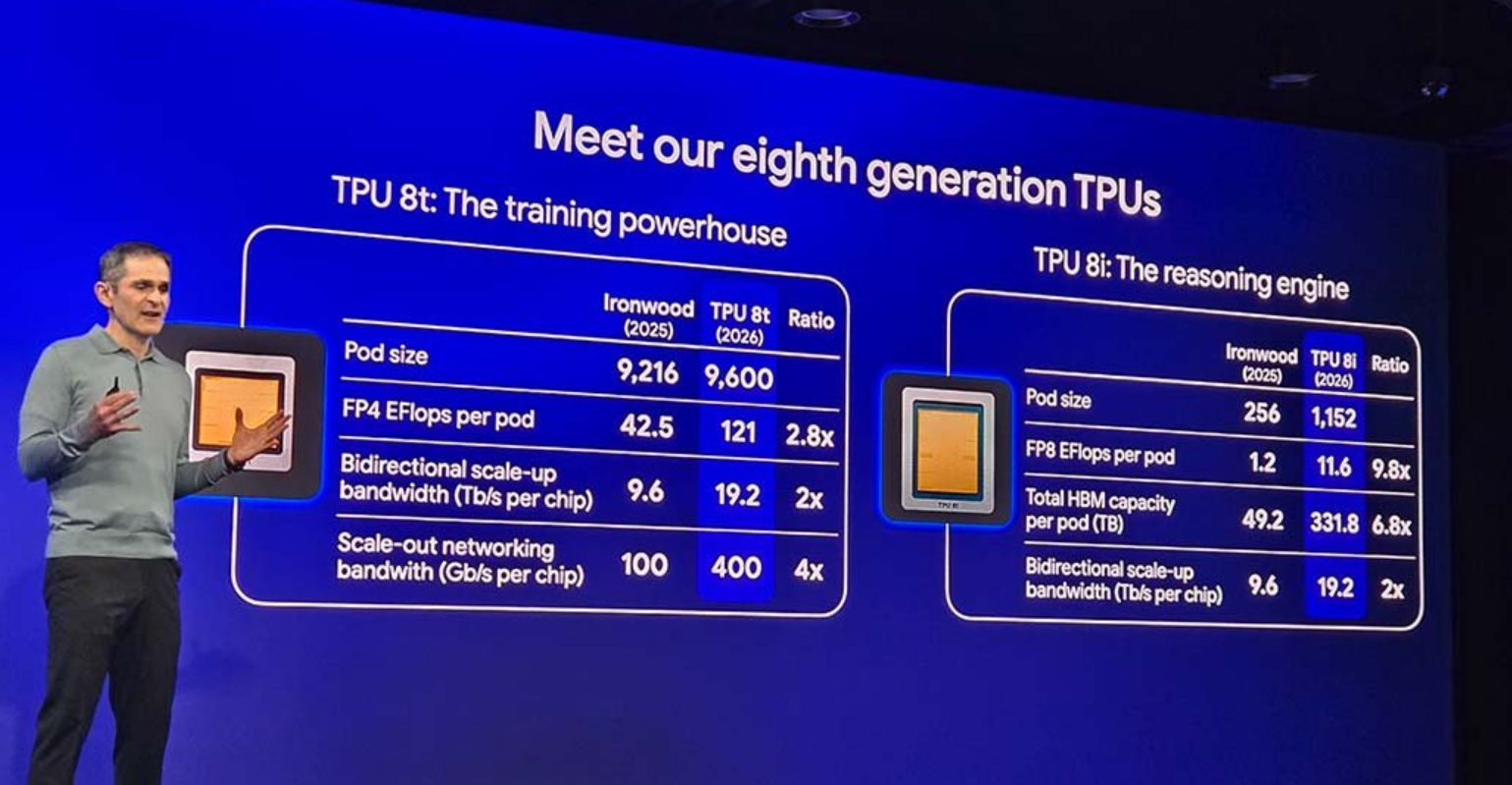

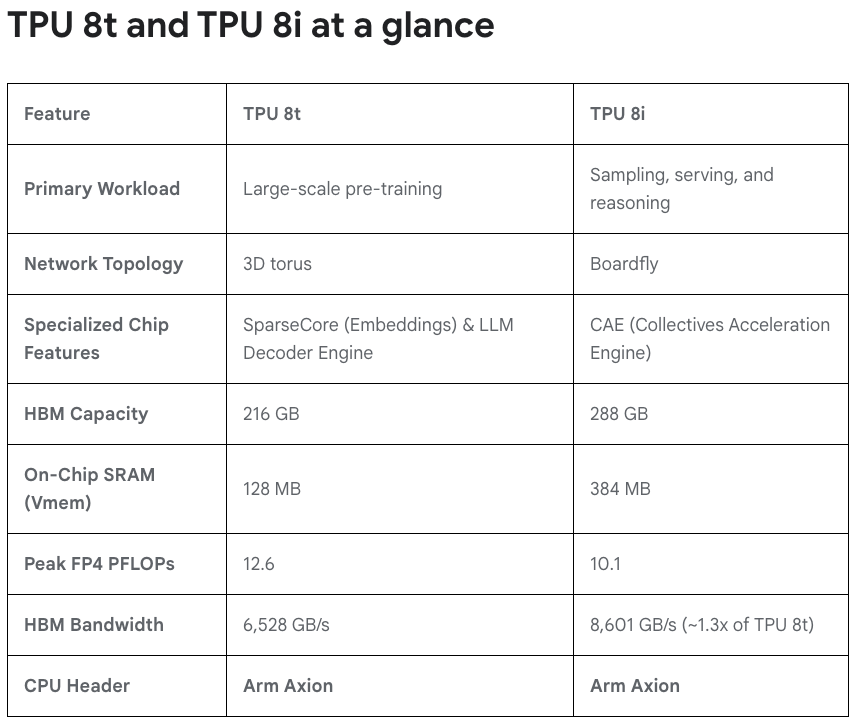

This year's TPU v8 differentiates between training and inference for the first time. The V8T focuses on AI training; although Google says it can also handle inference, it is mainly used for training. Each Pod node is built from 9,600 V8T chips, and FP4 performance reaches 121 EFLOPS. Memory bandwidth is 19.2 TB/s and internal chip bandwidth is 400 GB/s, an improvement of roughly 2-4 times.

The V8i mainly targets AI inference workloads, and its specifications are scaled down considerably. Each node has only 1,152 V8i chips, computing power drops to 11.6 EFLOPS, and memory bandwidth remains unchanged at 19.2 TB/s.

It is worth noting that memory capacity has increased significantly this time. Even the V8i reaches 331.8 TB of HBM, while the V8T has a staggering 2 PB of HBM. Each V8T chip is equipped with 216 GB of HBM.
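The capacity figures can be cross-checked with simple arithmetic. The following is a minimal sketch, assuming decimal units (1 PB = 1,000 TB = 1,000,000 GB) and that every chip in a node carries the same amount of HBM; the function and variable names are illustrative only and do not come from Google.

```python
# Cross-check of the HBM figures quoted in the article.
# Assumption: decimal units (1 TB = 1000 GB, 1 PB = 1000 TB).
GB_PER_TB = 1000
TB_PER_PB = 1000


def node_hbm_pb(chips: int, gb_per_chip: float) -> float:
    """Total HBM in a node, in PB, given chip count and per-chip capacity."""
    return chips * gb_per_chip / (GB_PER_TB * TB_PER_PB)


def per_chip_hbm_gb(total_tb: float, chips: int) -> float:
    """Per-chip HBM in GB implied by a node total, assuming uniform chips."""
    return total_tb * GB_PER_TB / chips


# V8T: 9,600 chips at 216 GB each (figures from the article).
v8t_total_pb = node_hbm_pb(9600, 216)

# V8i: 331.8 TB across 1,152 chips (figures from the article).
v8i_per_chip_gb = per_chip_hbm_gb(331.8, 1152)

print(f"V8T node HBM: {v8t_total_pb:.2f} PB")
print(f"V8i HBM per chip (implied): {v8i_per_chip_gb:.0f} GB")
```

Under these assumptions, 9,600 chips at 216 GB come to about 2.07 PB, consistent with the quoted 2 PB, and the V8i total implies roughly 288 GB per chip.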

Google's design goal this time is to break the memory wall, one of AI's bottlenecks. The 2 PB of HBM is not only a very large total capacity; it is also exposed as a single global address space within a node. Although NVIDIA GPUs have previously been able to pool PB-scale HBM through technologies such as NVLink, those connections cannot bypass the traditional data center network, which creates performance and latency bottlenecks.

Larry Carvalho, chief consultant at RobustCloud, said breaking the "memory wall" marks a potentially major competitive shift for Google in the field of AI chips.

But for ordinary consumers, Google's launch of 2 PB of HBM is not a good sign, because it means AI's demand for memory is still rising. Keep in mind that HBM typically consumes 2-4 times as much DRAM chip production capacity as conventional DDR memory. The more HBM is produced, the more DDR production capacity it crowds out.
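To make the crowding-out effect concrete, here is a rough sketch that reads the 2-4 times figure as a simple multiplier on production capacity per unit of memory. The 16 GB consumer memory kit is a hypothetical yardstick chosen for illustration, not a figure from the article, and decimal units are assumed.

```python
# Rough estimate of how much conventional DDR output one node's HBM displaces.
# Assumptions: the 2-4x figure is a per-capacity multiplier; decimal units;
# the 16 GB kit size is a hypothetical yardstick, not from the article.
HBM_NODE_PB = 2.0                    # HBM in one V8T node (from the article)
CAPACITY_MULTIPLIER_RANGE = (2, 4)   # HBM vs DDR production capacity (from the article)
HYPOTHETICAL_KIT_GB = 16             # illustrative consumer memory kit
GB_PER_PB = 1_000_000


def ddr_equivalent_pb(hbm_pb: float, multiplier: float) -> float:
    """DDR capacity that could have been made with the same production capacity."""
    return hbm_pb * multiplier


def kits_displaced(ddr_pb: float, kit_gb: int = HYPOTHETICAL_KIT_GB) -> int:
    """Number of hypothetical consumer kits corresponding to that DDR capacity."""
    return int(ddr_pb * GB_PER_PB / kit_gb)


low_pb, high_pb = (ddr_equivalent_pb(HBM_NODE_PB, m) for m in CAPACITY_MULTIPLIER_RANGE)

print(f"DDR-equivalent capacity: {low_pb:.0f} to {high_pb:.0f} PB")
print(f"Hypothetical 16 GB kits: {kits_displaced(low_pb):,} to {kits_displaced(high_pb):,}")
```

Under these assumptions, a single 2 PB node ties up the production capacity of roughly 4 to 8 PB of conventional DDR, on the order of a few hundred thousand 16 GB kits.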

As demand rises, Samsung, SK Hynix, Micron and other manufacturers will prioritize HBM supply, yet they have previously made clear that they will not significantly expand chip production capacity. The memory chip shortage is therefore likely to worsen, and prices cannot be expected to fall quickly.