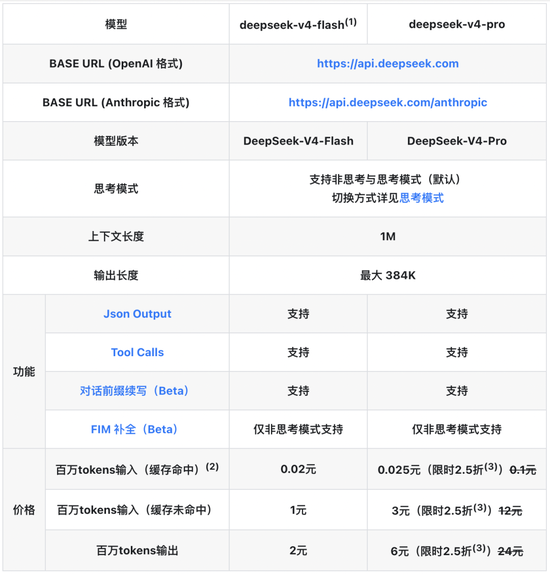

DeepSeek is pushing large models further toward broad affordability. On April 26, DeepSeek published an API price adjustment announcement: the cache-hit input price for all API models has been cut to one-tenth of its original level, and V4-Pro is offered at 25% of its list price (75% off) for a limited time. That brings V4-Pro cache-hit input as low as 0.025 yuan per million tokens, a new low for large-model pricing worldwide.

According to DeepSeek's official API pricing page, the cut applies to every model in the V4 series and centers on cache-hit input. For example, the DeepSeek-V4-Flash cache-hit input price dropped from 0.2 yuan to 0.02 yuan per million tokens.

DeepSeek-V4-Pro, aimed at enterprise users, gets even deeper cuts. Its cache-hit input price falls from 1 yuan to 0.1 yuan per million tokens, and a limited-time promotion running until May 5, 2026 charges 25% of list price (75% off), bringing the effective cache-hit price to just 0.025 yuan per million tokens. Under the promotion, cache-miss input drops from 12 yuan to 3 yuan and output from 24 yuan to 6 yuan per million tokens.

Image source: DeepSeek official website
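
The percentage cuts follow directly from the figures above. The sketch below recomputes them, assuming the V4-Pro cache-miss and output list prices (12 and 24 yuan) are unchanged apart from the promotion and that the promotion multiplies every price by 0.25. The dictionary keys and helper names are illustrative only, not part of any DeepSeek API; `Decimal` is used so the arithmetic is exact.

```python
from decimal import Decimal

# V4-Pro prices in yuan per million tokens, as reported in the announcement.
# Keys and helper names are hypothetical, chosen for illustration.
PRO_ORIGINAL = {
    "cache_hit_input": Decimal("1"),
    "cache_miss_input": Decimal("12"),
    "output": Decimal("24"),
}
PRO_LIST_AFTER_CUT = {
    "cache_hit_input": Decimal("0.1"),  # one-tenth of the original price
    "cache_miss_input": Decimal("12"),  # assumption: list price unchanged
    "output": Decimal("24"),            # assumption: list price unchanged
}
PROMO_FACTOR = Decimal("0.25")  # limited-time offer: pay 25% of list price


def promo_prices(list_prices, factor=PROMO_FACTOR):
    """Apply the limited-time multiplier to every price item."""
    return {item: price * factor for item, price in list_prices.items()}


def percent_cut(old, new):
    """Percentage reduction from the old price to the new price."""
    return (1 - new / old) * 100


promo = promo_prices(PRO_LIST_AFTER_CUT)
for item, old in PRO_ORIGINAL.items():
    cut = percent_cut(old, promo[item])
    print(f"{item}: {old} -> {promo[item]} yuan ({cut:.1f}% cut)")

# V4-Flash cache-hit input: 0.2 -> 0.02 yuan per million tokens
print(f"flash cache_hit_input: {percent_cut(Decimal('0.2'), Decimal('0.02')):.1f}% cut")
```

Under these assumptions, V4-Pro cache-hit input falls 97.5% during the promotion, cache-miss input and output fall 75%, and V4-Flash cache-hit input falls 90%.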

DeepSeek also said that the model names DeepSeek-Chat and DeepSeek-Reasoner will be deprecated. For compatibility, they currently map to the non-thinking and thinking modes of DeepSeek-V4-Flash, respectively.

Comparing prices before and after the adjustment, the cache-hit input price has fallen by 90% or more, which matters most for high-frequency calls and long-text processing. Applications with high cache hit rates, such as RAG knowledge bases, intelligent customer service, and document analysis, stand to see a steep drop in operating costs, easing a key cost barrier to deploying AI at scale.
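
How much a given application saves depends on the share of its input tokens that hit the cache, since hits and misses are billed at different rates. The sketch below computes a blended V4-Pro input price per million tokens using the original prices (1 yuan hit, 12 yuan miss) and the promotional prices (0.025 yuan hit, 3 yuan miss) quoted above. The hit rates are hypothetical examples, output tokens are ignored, and the function names are illustrative.

```python
from decimal import Decimal


def blended_input_price(hit_rate, hit_price, miss_price):
    """Average input price per million tokens when `hit_rate` of input tokens hit the cache."""
    if not Decimal(0) <= hit_rate <= Decimal(1):
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_price + (1 - hit_rate) * miss_price


def input_savings_pct(hit_rate):
    """Percentage drop in blended V4-Pro input cost, original vs. promotional prices."""
    before = blended_input_price(hit_rate, Decimal("1"), Decimal("12"))
    after = blended_input_price(hit_rate, Decimal("0.025"), Decimal("3"))
    return (1 - after / before) * 100


# Hypothetical cache hit rates for illustration.
for rate in ("0.5", "0.8", "0.95", "1"):
    print(f"hit rate {rate}: input cost down {input_savings_pct(Decimal(rate)):.1f}%")
```

At an assumed 80% hit rate, the blended input price falls from 3.2 to 0.62 yuan per million tokens (about 80.6% lower); the reduction approaches 97.5% only as the hit rate approaches 100%, which is why cache-heavy workloads benefit most.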

DeepSeek's steep price cuts are tied to the technical upgrades in DeepSeek-V4 and its close collaboration with the Huawei Ascend ecosystem.

On April 24, DeepSeek officially released the preview version of DeepSeek-V4. Both open-source models, Pro and Flash, support context windows of up to 1 million tokens. DeepSeek's in-house sparse attention architecture substantially reduces inference compute: V4-Pro needs only 27% of V3.2's per-token compute, and its KV cache shrinks to 10% of V3.2's, cutting costs at the architectural level.

According to figures published by DeepSeek, DeepSeek-V4-Pro has 49B activated parameters and was pre-trained on 33T tokens, positioning it as the high-performance flagship; DeepSeek-V4-Flash has 13B activated parameters and 32T pre-training tokens, and targets high speed and low cost.

Compared with the previous generation, DeepSeek-V4-Pro's agent capabilities are significantly stronger. On Agentic Coding evaluations, V4-Pro reaches the best level among current open-source models, and it also performs well on other agent-related evaluations. DeepSeek-V4 has reportedly become the agentic coding model used by DeepSeek's own employees. According to their feedback, the experience is better than Sonnet 4.5 and output quality is close to Claude Opus 4.6 in non-thinking mode, though a gap remains relative to Opus 4.6 in thinking mode.

On world-knowledge evaluations, DeepSeek-V4-Pro is well ahead of other open-source models and only slightly behind the top closed-source model, Gemini-Pro-3.1. On mathematics, STEM, and competitive programming evaluations, DeepSeek-V4-Pro outperforms every open-source model with published results and is comparable to the world's top closed-source models.

Compared with DeepSeek-V4-Pro, DeepSeek-V4-Flash has somewhat less world knowledge but comparable reasoning ability. Because it has fewer total and activated parameters, V4-Flash can offer faster and cheaper API service.

DeepSeek-V4 also introduces a new attention mechanism that compresses along the token dimension and combines this with DSA (DeepSeek Sparse Attention), delivering world-leading long-context capability while significantly reducing compute and GPU memory requirements compared with traditional methods.
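
The article describes this mechanism only at a high level, so the following is a rough, hypothetical illustration of the general idea rather than DeepSeek's actual design. It compresses keys and values along the token dimension by mean-pooling fixed-size blocks, then lets a single query attend only to the top-k highest-scoring compressed entries. Block mean-pooling and top-k selection are assumptions standing in for whatever compression and DSA selection rules V4 really uses; all function names are illustrative.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def compress_tokens(vectors, block):
    """Mean-pool every `block` consecutive token vectors into one entry (assumed compression)."""
    pooled = []
    for start in range(0, len(vectors), block):
        chunk = vectors[start:start + block]
        pooled.append([sum(col) / len(chunk) for col in zip(*chunk)])
    return pooled


def compressed_sparse_attention(query, keys, values, block, top_k):
    """Attend from one query to only the top_k highest-scoring compressed entries."""
    comp_keys = compress_tokens(keys, block)
    comp_values = compress_tokens(values, block)
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, k) * scale for k in comp_keys]
    # Sparse selection: keep the top_k compressed entries and ignore the rest.
    selected = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    weights = softmax([scores[i] for i in selected])
    dim = len(comp_values[0])
    output = [sum(w * comp_values[i][d] for w, i in zip(weights, selected)) for d in range(dim)]
    return output, sorted(selected)


keys = [[1, 0], [1, 0], [0, 1], [0, 1], [1, 0], [1, 0], [0, 1], [0, 1]]
values = [[1, 0], [3, 0], [0, 0], [0, 0], [5, 0], [7, 0], [0, 0], [0, 0]]
out, used = compressed_sparse_attention([1.0, 0.0], keys, values, block=2, top_k=2)
print(out, used)  # [4.0, 0.0] [0, 2]
```

With 8 tokens, a block size of 2, and top_k = 2, the query scores only 4 compressed entries and aggregates just 2 of them. Fewer entries to store and score is the general source of the compute and memory savings for this family of methods.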

More notably, Huawei's entire line of Ascend supernode products supports the DeepSeek-V4 series, a further signal of DeepSeek's shift toward domestic computing hardware.

The DeepSeek-V4 technical report states: "The fine-grained EP (Expert Parallelism) scheme was validated on two platforms, NVIDIA GPUs and Huawei Ascend NPUs. Compared with a strong non-fused baseline, it achieved 1.50-1.73x speedups on general inference workloads, and up to 1.96x in latency-sensitive scenarios such as reinforcement learning (RL) rollouts and high-speed agent serving."

DeepSeek emphasized that as the full range of Ascend supernode products ships in volume in the second half of the year, the price of the Pro version is expected to fall significantly.

After DeepSeek-V4's release, Goldman Sachs published an analysis arguing that V4's core significance is enabling more complex agent applications at lower cost, opening up new room for AI applications to scale. On the Ascend supernode support, Goldman Sachs believes DeepSeek's cost competitiveness will strengthen further, paving the way for broader adoption. In addition, amid continued tightening of chip supply, the migration of China's top AI models to domestic computing power has now been clearly endorsed by leading players.

The Goldman Sachs report also cited news reports that Tencent and Alibaba are in talks to invest in DeepSeek at a valuation of more than US$20 billion. For comparison, the latest market values of Zhipu and MiniMax are approximately US$53 billion and US$31 billion, respectively. The potential deal reflects the tech giants' competition for scarce top-tier AI capabilities.

Huatai Securities notes that the market tends to read V4 simply as a story of lower costs and reduced compute and storage requirements. The more important marginal change, in its view, is that as long-context costs fall, complex agents, multi-document analysis, long-horizon tasks, online learning, and similar scenarios become more practical, which is expected to increase both inference call volumes and storage access frequency.